How I Built a Mostly Feature-Complete MVP in 3 Months Whilst Working Full-Time

In this post I’ll talk about the features, the tech stack and the globally distributed infrastructure behind building this MVP, and of course, with a sprinkle of learnings too.

The “MVP”

Deciding what makes up an “MVP” is always interesting - I’ve heard some saying if the product isn’t embarrassing you’re releasing it too late, and also if the product is embarrassing, you’re not gonna make it.

For me, I’ve always had the idea of building all the essential features as part of the MVP, with one or two “hero” features that would differentiate the product from the competitions, and then build out more premium features over time.

If you can’t already tell, Persumi is a content creation platform with some social networking features. It may sound bland, but what I believe makes it stand out, is the desire of putting the focus back onto the content, rather than the VC-fuelled, ever increasing appetite for more ads and user hostile features.

The Long Nights and Weekends

There is no magic beans for productivity - especially when I have a full time job. Working on a side hustle means giving up on almost all social and entertainment activities. It’s not for everyone, but I didn’t mind it too much. Being an introvert definitely helped - I was happy to see night by night the MVP gradually taking shape to become more and more real.

Looking back, I spent about three months to build out most of the MVP, then another week or two on infrastructure, and another week or two for polishing, all whilst having a full time job.

It’s been a journey, I’m glad that it “only” took me 3-4 months to get to this stage, as initially I estimated for a 6+ months MVP build.

The Features

With all that in mind, I’ve set out to build the essential features that make a blogging and social networking platform:

- Short form content like a tweet

- Long form content like a blog post or a book chapter

- RSS feeds

- Communities similar to forums and sub-reddits

- Direct messaging between users

- A voting (like/dislike) system

- A bunch of CRUD glue pieces to make all these things work

The “Hero” Features

Beyond these seemly unremarkable features, I’ve also had in mind two key features that would differentiate the platform from the rest:

- The “persona” concept, whereby each user is allowed to have multiple personas to hold different content or topics of interest, e.g. a persona for professional stuff, a persona for gaming stuff and a persona for travel stuff, etc

- AI generated audio content for text (also known as Text-to-Speech)

These two “hero” features are what drove me to build Persumi in the first place. Together, they solve some very real pain points for me, namely:

- Following specific topics of interest from people is difficult, with the algorithms taking over people’s home feeds, there are simply way too much noise, thanks to VC-fuelled “user engagement” metrics

- Content consumption on the go (e.g. during commute or during workouts, etc) is becoming more and more prevalent, but the traditional platforms haven’t adopted to this new lifestyle other than shoving short form content down our throats

There’s also a third “hero” feature: the Aura system. Unlike the upvote/downvote or like/dislike buttons in many social platforms that only serve the algorithm to push more content to you, Persumi’s Aura system keeps track of user’s content quality over time, and would punish the low quality content and promote high quality content using visual cues - lower quality content has a much lower contrast making them easy to ignore. In the age of social media, self-curating content becomes essential to keep a platform healthy, engaging and usable.

The Non-MVP Features, a.k.a. The Future

There are many features that didn’t make the MVP cut, most of these are value-added features that will eventually make their way into paid subscriptions - if Persumi gains enough traction to attract users who don’t mind paying for premium features.

A prime example of such paid features is ones that help users monetise their content, e.g. ad revenue sharing and paid subscribers (like Patreon).

I also have the ambition of building out Persumi’s features so it can eventually compete against the likes of LinkedIn and Tinder.

Wouldn’t it be better for the world to have a platform like Persumi that doesn’t focus on dark patterns and exploiting users? 😉

The Tech Stack

Over the past decade or so I’ve mainly worked with two tech stacks: Ruby and Elixir. So naturally, Persumi was going to be built using one of them.

After some consideration, I’ve decided to go ahead with Elixir, the main reasons were:

- Elixir and Erlang/OTP support distributed systems out of box

- I’ve been writing more Elixir than Ruby lately, so I’m more productive in Elixir

- I really wanted to try and use LiveView in production

- I prefer Phoenix’s application architecture more than Rails’

On top of Elixir, I’ve decided early on a few other things to go with it:

- Tailwind for CSS

- Postgres for database, preferably a serverless option

- A search engine

- An easy to maintain infrastructure that doesn’t cost an arm and a leg

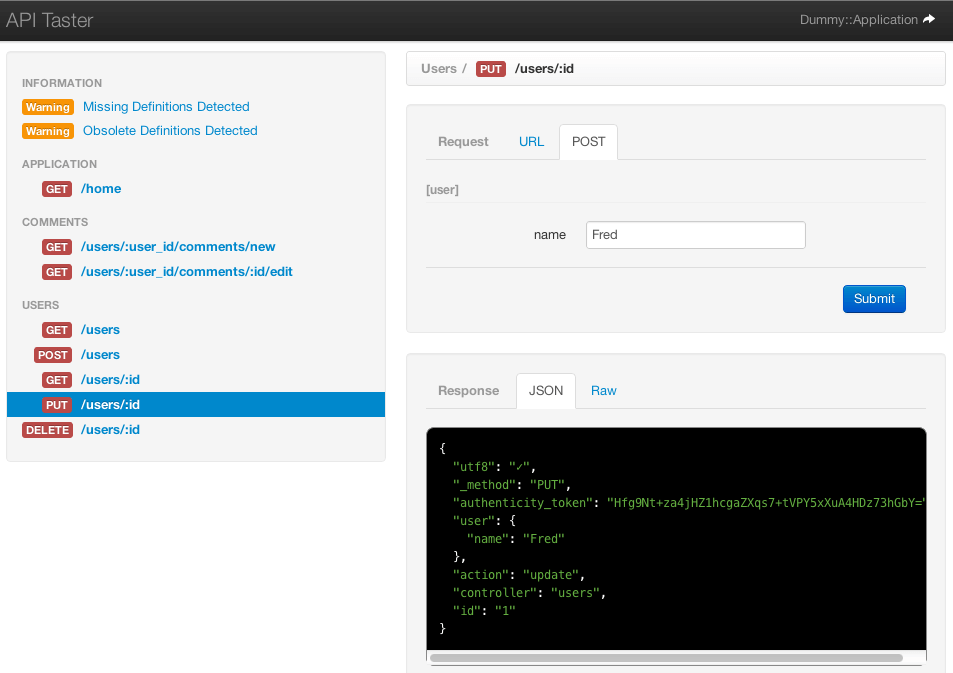

Elixir

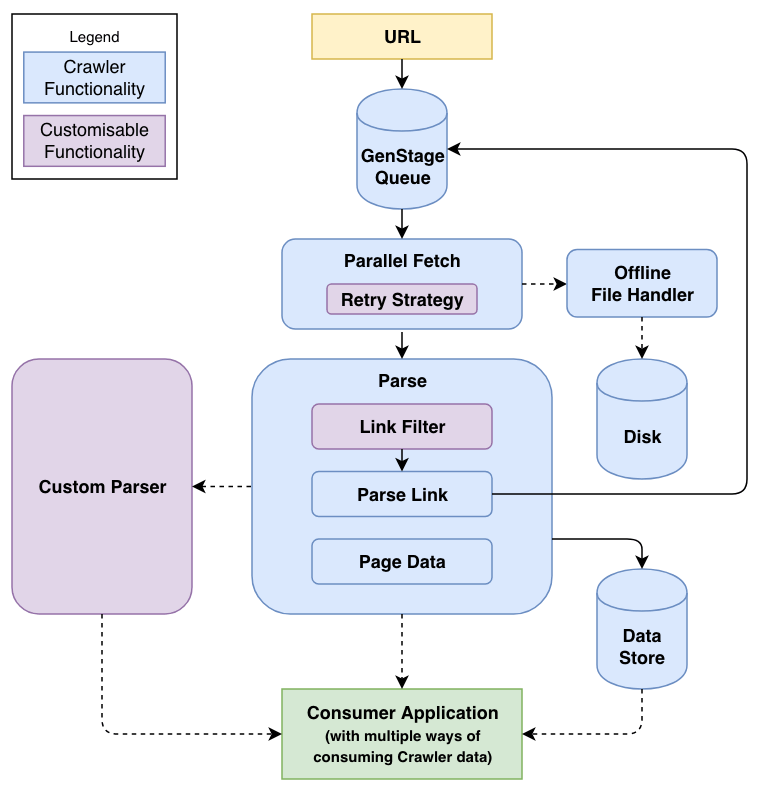

I first discovered Elixir in 2014 while I was still actively involved in the Ruby and Rails communities, but it was two years later that I had the opportunity to really dive into it. I built a few open source libraries to help me learn Elixir and OTP:

- Crawler - a high performance web scraper.

- OPQ: One Pooled Queue - a simple, in-memory FIFO queue with back-pressure support, built for Crawler.

- Simple Bayes - a Naive Bayes machine learning implementation. Hey, I was doing machine learning before it was mainstream! 😆

- Stemmer - an English (Porter2) stemming implementation, built for Simple Bayes.

With a decent amount of experience building Phoenix web apps over the years, I unfortunately never had the opportunity to use LiveView…

I guess that’s finally changed now.

LiveView has been amazing, not only does it drastically reduce the amount of front-end code you have to write, make the entire web app feel super snappy, but it also virtually eliminates any front-end and back-end code/logic duplications. It is such an awesome piece of technology that improves both the user experience and the developer experience.

Petal Pro

During my initial tech research, I came across Petal Pro which is a boilerplate starter template built on top of Phoenix. It handles things like user authentication which almost every web app needs, but is somewhat tedious to build.

Petal Pro isn’t free but it ended up saving me so much time. I also started contributing small bug fixes and features to it too. If you are about to build something in Phoenix, check it out!

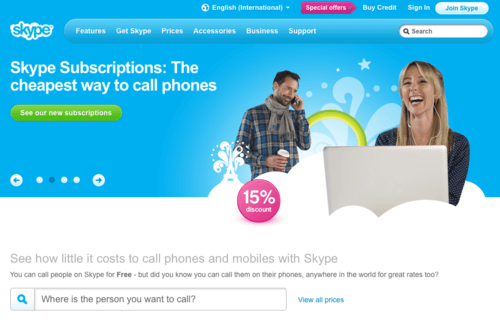

Tailwind CSS

As I kept progressing my career, there have been fewer and fewer opportunities for me to write front-end and CSS code. Last time I rebuilt my blog, I used Bulma - that was 2019. Since then Tailwind has gained a lot more traction, so I wanted an excuse to try finally give it a shot.

There is a debate on how many new things you should try for building your MVP - the more you have to learn, the slower your MVP progresses. That said, given CSS is reasonably straightforward, I figured it wouldn’t slow me down too much, if anything, Tailwind’s flexibility might just eventually make up any time lost in learning.

I’m happy to report that it is indeed true - by using Tailwind, it became significantly easier for me to customise my components and elements. I can see why it became so popular. It’s not for everyone, but I like it.

Postgres

Choosing Postgres as a database was a no brainer, given how popular and versatile it is. I did briefly consider NoSQL options like DynamoDB but quickly wrote them off as I needed an RDBMS to get things off the ground quickly, and the DB is unlikely to be the bottleneck for a long time anyway.

In Elixir, the Ecto library works wonders for Postgres.

Later in the post I’ll touch on how I deploy and run Postgres in production.

Search Engine

For a search engine, my requirements were:

- The ability to search across multiple fields of a schema

- The ability to rank them

- The ability to have typo tolerance, word stemming and other similar language features to make search more intuitive

- The ability to search multiple languages, including CJK (Chinese/Japanese/Korean) characters

- Simple to run

- Cheap to run

Using Postgres’ full text search was probably going to be the simplest but it doesn’t offer all the functionalities I need without a bunch of set up and manual SQL queries so I didn’t pursue it.

Elasticsearch on the other hand, offers good search functionalities but takes a bit of effort to set up and maintain, and can be costly to run.

After doing some more research, I found the following three options that would fit my needs:

Both Meilisearch and Typesense are open source, with commercial SaaS offerings, whilst Algolia is SaaS-only.

It’s been an interesting journey. I started with Typesense as I liked what I read, but I quickly discovered that it doesn’t search Chinese characters properly.

I then turned to Meilisearch. I especially liked the fact that they offered a generous free tier SaaS to get you off the ground running. Spoiler: during my implementation they did a bait and switch and removed the free tier.

At the time the Elixir support for Meilisearch wasn’t up to date, so I ended up contributing to a community library to add the features I needed.

Curious timing, after Meilisearch removed their free tier, I discovered that even though they officially support searching for Chinese characters, the implementation wasn’t perfect. I found some edge cases where characters weren’t detected properly, making the search results unreliable.

So, my last hope was Algolia. Despite them being the more expensive option out of the three, it does offer a free tier. It turns out, their search results for Chinese characters were much better than Meilisearch’s. Luckily, re-implementing the search from Meilisearch to Algolia didn’t take too much effort, it was pretty much done in one night.

Infrastructure

Early on during the development I’d already determined I wanted to try Fly and Neon, for web and DB, respectively.

I am in no way associated with either company, I was curious about Fly due to its tie-in with the Elixir community (Phoenix Framework’s author Chris McCord works there), and Neon due to its serverless nature.

Globally Distributed Infra

With Fly, the infrastructure automatically becomes globally distributed as soon as I started provisioning servers in more than one region. As of the time of writing, Persumi is deployed to US West, Australia and EU.

Despite being simple to use, making Fly work initially actually took quite a bit of finessing due to its incomplete official documentation and flakiness. Some of the services were having issues during the course of my MVP development. Worse, they don’t report (or sometimes even acknowledge) the issues unless they are region-wide outages. To this date, I believe their blue/green deployment strategy which was recently introduced, is still buggy, I often have to use their rolling deployment strategy instead. Deployment logs were provided to Fly but I think they’re too busy with other things…

Still, I’m sticking with them for now due to the ease of use after the initial hurdle, and their globally distributed infrastructure without asking for my kidney.

To augment Fly’s web servers, I also use Cloudflare’s CDN as well as R2 to serve asset files and audio files.

Funny tangent, initially I used Bunny for asset files and CDN, as I misread Cloudflare’s terms and thought I couldn’t serve audio files from Cloudflare. Bunny worked okay but their dashboard for some reason was painfully slow - not a good look for a CDN company. Like the search engine switch, it didn’t take me too long to switch over to Cloudflare.

Serverless Postgres

There are a few options to run Postgres:

- Run on a standard server for maximum portability, but it requires more server maintenance overhead

- Run on AWS RDS/Aurora or a similar managed service, easy but can be costly

- Run on a serverless option such as Aurora Serverless or Neon

For my use case, I think option 2 or 3 are better fitting. As I mentioned earlier, I started the experiment with Neon.

Neon worked well initially, until I started deploying Fly instances in multiple regions. Due to Neon being only available in one region (I chose US West), and I live in Australia, the round trips between Fly’s Australian instance and Neon’s US instance were a show stopper - especially when complex DB transactions were involved. Actions sometimes took seconds to complete, yikes.

Despite Fly not offering a managed Postgres service, I ended up trying it anyway due to its distributed nature. After incorporating Fly Postgres in the app, all DB operations immediately became more responsive. Paired with LiveView, it feels like running the application locally.

The current Persumi infra looks like:

- 1 x Fly instance in US West, always on

- 1 x Fly instance in Australia, auto-shutdown when there’s no traffic

- 1 x Fly instance in Netherlands, auto-shutdown when there’s no traffic

- 1 x Fly Postgres writer instance in US West, always on

- 1 x Fly Postgres read replica instance in Australia, always on

- 1 x Fly Postgres read replica instance in Netherlands, always on

With this setup, I think I’m quite happy with the cost and scalability balance - it costs ~$20/m to run, with the potential of both vertical and horizontal scaling with ease.

The Missteps

The search engine and CDN swaps mentioned earlier certainly took away some of my time, but they were nothing compared to a major misstep I encountered.

And that was: the choice of how machine learning is done.

Let me explain.

Machine Learning, and Inference

Even before I started the first line of code, I already painted a picture in my head on the machine learning needed: a TTS (text-to-speech) model that I could run inference locally on the instance.

The reason being I believed it was the more flexible approach to gradually improve the inference and therefore the end result by training my own AI models over time.

Given I didn’t want to rent expensive GPU instances, I opted for fast TTS models that could do near real-time inference on CPUs. I used Coqui TTS.

The resulting out-of-box audio wasn’t great, but I kept pressing on.

The show stopper came when it was time to deploy everything onto Fly. Due to Fly’s architecture (they deploy small-ish Docker images, < 2GB each, onto their global network), I struggled to keep the Docker image file small enough to be able to deploy. With Coqui TTS, I would need Python and all the dependencies that resulted in a Docker image around 4-5GB in size.

With my tunnel vision, I then chose to offload the entire Python and Coqui TTS dependency tree onto Fly’s persistent volumes. I knew it wasn’t a great option, as that meant my infrastructure (other than the database) was no longer immutable.

Sometimes it’s necessary to take a step back, re-evaluate, and then press on in a different direction. Which thankfully I did.

The new direction is quite simple really: instead of performing inference locally, use an external service instead.

After doing a quick comparison between the offerings from AWS, Azure and GCP, I ended up using Google’s TTS. Honestly I think I would’ve been happy with any of the options, they all seem to have decent neural based TTS.

In hindsight, these giant corporations have much more resources and expertise to train better models than I ever could on my own.

The end result:

- The TTS sounds significantly better than before

- It’s just as cheap to run (Google offers a certain amount of free TTS API calls per month)

- It no longer needs complex Python calls and FFmpeg calls to make local TTS work

- The Fly infrastructure is simple and immutable again

In hindsight, I never should’ve even entertained the idea of running ML locally on CPUs, no matter how simple and efficient a model might be.

That said, with TTS, it wasn’t as simple as just calling the APIs and getting the perfect resulting audio back. Some pre and post processing were needed, but that’s a topic for another time.

More Machine Learning

The cherry on top - now that Google’s APIs were integrated into the app, I ended up also using Google’s PaLM 2 to do text summarisation (it was initially done locally too) as well as for a ChatGPT-like AI prompt service, to power Persumi’s AI writing assistance feature.

The Closing

If you read this far, thank you! I hope you enjoyed reading (or listening) to this post. Please look around and kick tyres, I would love your feedback on how to improve Persumi.

Sign up for an account if you haven’t already, and leave a comment if you have any questions. Until next time!

]]>