MySql入门:高可用与架构设计

MySQL高可用与架构设计

在现代互联网应用中,数据库的高可用性和可扩展性至关重要。单点故障可能导致整个系统瘫痪,性能瓶颈可能影响用户体验。今天,我们将深入探讨MySQL的高可用架构设计,从主从复制到分布式集群,帮助你构建稳定可靠的数据库系统。

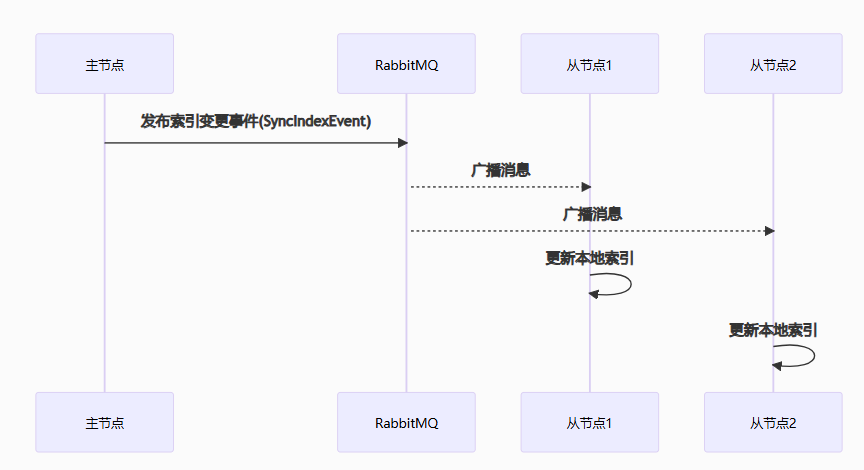

1. 主从复制架构

复制原理与三种复制模式

复制基本原理:

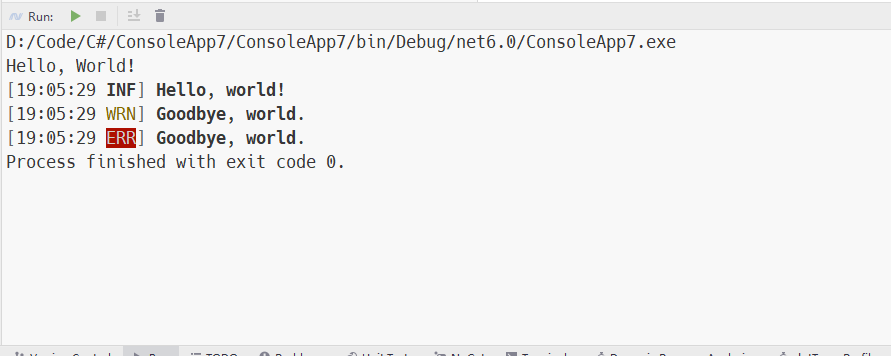

-- 复制过程涉及的关键线程-- 主库:Binlog Dump Thread-- 从库:I/O Thread, SQL Thread-- 查看主库状态SHOW MASTER STATUS;/*+------------------+----------+--------------+------------------+-------------------+| File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set |+------------------+----------+--------------+------------------+-------------------+| mysql-bin.000003 | 194 | | | |+------------------+----------+--------------+------------------+-------------------+*/-- 查看从库状态SHOW SLAVE STATUS\G/* Slave_IO_State: Waiting for master to send event Master_Host: 192.168.1.100 Master_User: repl Master_Port: 3306 Connect_Retry: 60 Master_Log_File: mysql-bin.000003 Read_Master_Log_Pos: 194 Relay_Log_File: relay-bin.000002 Relay_Log_Pos: 320 Relay_Master_Log_File: mysql-bin.000003 Slave_IO_Running: Yes Slave_SQL_Running: Yes Replicate_Do_DB: Replicate_Ignore_DB: Replicate_Do_Table: Replicate_Ignore_Table: Replicate_Wild_Do_Table: Replicate_Wild_Ignore_Table: Last_Errno: 0 Last_Error: Skip_Counter: 0 Exec_Master_Log_Pos: 194 Relay_Log_Space: 526 Until_Condition: None Until_Log_File: Until_Log_Pos: 0 Master_SSL_Allowed: No Master_SSL_CA_File: Master_SSL_CA_Path: Master_SSL_Cert: Master_SSL_Cipher: Master_SSL_Key: Seconds_Behind_Master: 0Master_SSL_Verify_Server_Cert: No Last_IO_Errno: 0 Last_IO_Error: Last_SQL_Errno: 0 Last_SQL_Error: Replicate_Ignore_Server_Ids: Master_Server_Id: 1 Master_UUID: 6b0f1c1a-5d5e-11eb-ae93-000c29a3a3a3 Master_Info_File: mysql.slave_master_info SQL_Delay: 0 SQL_Remaining_Delay: NULL Slave_SQL_Running_State: Slave has read all relay log; waiting for more updates Master_Retry_Count: 86400 Master_Bind: Last_IO_Error_Timestamp: Last_SQL_Error_Timestamp: Master_SSL_Crl: Master_SSL_Crlpath: Retrieved_Gtid_Set: Executed_Gtid_Set: Auto_Position: 0 Replicate_Rewrite_DB: Channel_Name: Master_TLS_Version: */三种复制模式对比:

-- 1. 基于语句的复制(Statement-Based Replication)-- 配置SET GLOBAL binlog_format = 'STATEMENT';-- 优点:二进制日志较小,网络传输量少-- 缺点:非确定性函数可能导致数据不一致-- 2. 基于行的复制(Row-Based Replication)SET GLOBAL binlog_format = 'ROW';-- 优点:数据一致性更好-- 缺点:二进制日志较大,网络传输量大-- 3. 混合模式复制(Mixed)SET GLOBAL binlog_format = 'MIXED';-- 优点:结合两者优势,自动选择最优方式-- 缺点:配置相对复杂-- 生产环境推荐使用ROW或MIXED模式基于二进制日志的复制机制

二进制日志配置:

-- 查看二进制日志配置SHOW VARIABLES LIKE 'log_bin%';SHOW VARIABLES LIKE 'binlog_format%';SHOW VARIABLES LIKE 'sync_binlog%';SHOW VARIABLES LIKE 'expire_logs_days%';-- 二进制日志配置示例(my.cnf)/*[mysqld]# 启用二进制日志log_bin = /var/lib/mysql/mysql-bin# 日志格式binlog_format = ROW# 每次事务提交都同步到磁盘sync_binlog = 1# 日志保留7天expire_logs_days = 7# 每个日志文件大小max_binlog_size = 100M# 自动清理日志binlog_expire_logs_seconds = 604800*/复制过滤规则:

-- 主库过滤规则-- 在my.cnf中配置/*# 忽略系统库的复制binlog_ignore_db = mysqlbinlog_ignore_db = information_schemabinlog_ignore_db = performance_schemabinlog_ignore_db = sys*/-- 从库过滤规则CHANGE MASTER TO REPLICATE_DO_DB = (app_db), REPLICATE_IGNORE_DB = (test,temp_db), REPLICATE_DO_TABLE = (app_db.important_table), REPLICATE_IGNORE_TABLE = (app_db.log_table);-- 通配符过滤CHANGE MASTER TO REPLICATE_WILD_DO_TABLE = ('app_db.shard_%'), REPLICATE_WILD_IGNORE_TABLE = ('app_db.temp_%');半同步复制配置实战

半同步复制原理:

-- 安装半同步插件(主从库都需要)INSTALL PLUGIN rpl_semi_sync_master SONAME 'semisync_master.so';INSTALL PLUGIN rpl_semi_sync_slave SONAME 'semisync_slave.so';-- 查看插件状态SELECT PLUGIN_NAME, PLUGIN_STATUS FROM INFORMATION_SCHEMA.PLUGINS WHERE PLUGIN_NAME LIKE '%semi%';-- 配置主库半同步SET GLOBAL rpl_semi_sync_master_enabled = 1;SET GLOBAL rpl_semi_sync_master_timeout = 1000; -- 1秒超时-- 配置从库半同步SET GLOBAL rpl_semi_sync_slave_enabled = 1;-- 查看半同步状态SHOW STATUS LIKE 'Rpl_semi_sync%';/*Rpl_semi_sync_master_status | ONRpl_semi_sync_master_clients | 2 -- 连接的半同步从库数量Rpl_semi_sync_master_yes_tx | 1000 -- 成功通过半同步的事务数Rpl_semi_sync_master_no_tx | 5 -- 超时后转为异步的事务数*/半同步复制配置优化:

-- 持久化配置(在my.cnf中)/*[mysqld]# 主库配置plugin_load = "rpl_semi_sync_master=semisync_master.so;rpl_semi_sync_slave=semisync_slave.so"rpl_semi_sync_master_enabled = 1rpl_semi_sync_slave_enabled = 1rpl_semi_sync_master_timeout = 1000rpl_semi_sync_master_wait_point = AFTER_SYNC -- MySQL 5.7+ 推荐*/-- 监控半同步复制SELECT VARIABLE_NAME, VARIABLE_VALUEFROM performance_schema.global_statusWHERE VARIABLE_NAME LIKE 'RPL_SEMI_SYNC%';-- 半同步复制降级监控-- 当从库响应超时或故障时,主库会自动降级为异步复制-- 需要监控降级事件并及时处理多源复制与链式复制

多源复制配置:

-- MySQL 5.7+ 支持多源复制-- 从多个主库复制数据到单个从库-- 配置多源复制通道-- 主库1配置CHANGE MASTER TO MASTER_HOST = 'master1_host', MASTER_USER = 'repl', MASTER_PASSWORD = 'password', MASTER_PORT = 3306, MASTER_AUTO_POSITION = 1FOR CHANNEL 'master1';-- 主库2配置 CHANGE MASTER TO MASTER_HOST = 'master2_host', MASTER_USER = 'repl', MASTER_PASSWORD = 'password', MASTER_PORT = 3306, MASTER_AUTO_POSITION = 1FOR CHANNEL 'master2';-- 启动多源复制START SLAVE FOR CHANNEL 'master1';START SLAVE FOR CHANNEL 'master2';-- 查看多源复制状态SHOW SLAVE STATUS FOR CHANNEL 'master1'\GSHOW SLAVE STATUS FOR CHANNEL 'master2'\G-- 按通道过滤操作STOP SLAVE SQL_THREAD FOR CHANNEL 'master1';START SLAVE SQL_THREAD FOR CHANNEL 'master1';链式复制架构:

-- 三级复制链:Master -> Relay Slave -> Leaf Slave-- 配置中继从库/*Master配置:log_bin = onlog_slave_updates = off -- 默认,中继库不需要记录从库更新Relay Slave配置:log_bin = onlog_slave_updates = on -- 关键:记录从主库接收的更新Leaf Slave配置:log_bin = off -- 或者 on,根据需求log_slave_updates = off*/-- 中继从库的特殊配置/*[mysqld]# 中继从库配置server_id = 2log_bin = mysql-binlog_slave_updates = 1relay_log = relay-binread_only = 1# 过滤规则(可选)replicate_do_db = app_dbreplicate_ignore_db = mysql*/复制故障排查与修复

常见复制错误处理:

-- 1. 主键冲突错误-- 错误信息:Duplicate entry 'X' for key 'PRIMARY'-- 解决方案:STOP SLAVE;SET GLOBAL sql_slave_skip_counter = 1;START SLAVE;-- 或者手动处理冲突数据STOP SLAVE;-- 查看冲突数据SELECT * FROM table_name WHERE primary_key = 'X';-- 删除冲突数据或更新主键DELETE FROM table_name WHERE primary_key = 'X';START SLAVE;-- 2. 数据不存在错误-- 错误信息:Can't find record in 'table_name'-- 解决方案:STOP SLAVE;-- 在从库插入缺失的数据INSERT IGNORE INTO table_name VALUES (...);START SLAVE;-- 3. 网络中断导致的复制延迟-- 监控复制延迟SHOW SLAVE STATUS\G-- 查看Seconds_Behind_Master-- 自动重连配置CHANGE MASTER TO MASTER_CONNECT_RETRY = 60, MASTER_RETRY_COUNT = 86400;GTID复制故障处理:

-- 启用GTID复制-- 在my.cnf中配置/*[mysqld]gtid_mode = ONenforce_gtid_consistency = ON*/-- GTID复制错误处理-- 查看错误的GTIDSHOW SLAVE STATUS\G-- Last_SQL_Error: Coordinator stopped because there were error(s) in the worker(s)...-- Retrieved_Gtid_Set: 6b0f1c1a-5d5e-11eb-ae93-000c29a3a3a3:1-100-- Executed_Gtid_Set: 6b0f1c1a-5d5e-11eb-ae93-000c29a3a3a3:1-95-- 跳过特定GTID事务STOP SLAVE;SET GTID_NEXT = '6b0f1c1a-5d5e-11eb-ae93-000c29a3a3a3:96';BEGIN; COMMIT;SET GTID_NEXT = 'AUTOMATIC';START SLAVE;-- 重置GTID复制-- 注意:这会清除所有复制信息,需要重新配置STOP SLAVE;RESET SLAVE ALL;CHANGE MASTER TO ...;START SLAVE;2. 高可用集群方案

MySQL Router读写分离

MySQL Router部署配置:

# MySQL Router配置文件 (mysqlrouter.conf)[DEFAULT]logging_folder = /var/log/mysqlrouterruntime_folder = /var/run/mysqlrouterconfig_folder = /etc/mysqlrouter[routing:read_write]bind_address = 0.0.0.0bind_port = 6446destinations = metadata-cache://mycluster/?role=PRIMARYrouting_strategy = first-available[routing:read_only]bind_address = 0.0.0.0bind_port = 6447destinations = metadata-cache://mycluster/?role=SECONDARYrouting_strategy = round-robin# 启动MySQL Router# mysqlrouter --config=/etc/mysqlrouter/mysqlrouter.conf &应用程序连接配置:

# Python应用程序连接示例import mysql.connector# 写操作连接(主库)write_config = { 'host': 'router_host', 'port': 6446, # 读写端口 'user': 'app_user', 'password': 'password', 'database': 'app_db'}# 读操作连接(从库)read_config = { 'host': 'router_host', 'port': 6447, # 只读端口 'user': 'app_user', 'password': 'password', 'database': 'app_db'}# 写操作def update_user_profile(user_id, data): conn = mysql.connector.connect(**write_config) # 执行更新操作 conn.close()# 读操作 def get_user_profile(user_id): conn = mysql.connector.connect(**read_config) # 执行查询操作 conn.close()MHA自动故障转移

MHA架构组成:

# MHA组件# 1. MHA Manager - 管理节点# 2. MHA Node - 数据节点代理# MHA Manager配置 (app1.cnf)[server default]manager_log=/var/log/masterha/app1.logmanager_workdir=/var/log/masterha/app1master_binlog_dir=/var/lib/mysqluser=mha_userpassword=mha_passwordping_interval=3remote_workdir=/tmprepl_user=repl_userrepl_password=repl_passwordssh_user=root[server1]hostname=master_hostport=3306[server2] hostname=slave1_hostport=3306candidate_master=1[server3]hostname=slave2_hostport=3306no_master=1# 启动MHA监控masterha_manager --conf=/etc/masterha/app1.cnfMHA故障转移过程:

# 1. 检测主库故障# 2. 选择新主库(优先candidate_master=1的从库)# 3. 应用差异的二进制日志# 4. 提升新主库# 5. 其他从库指向新主库# 6. 虚拟IP切换(可选)# 手动触发故障转移masterha_master_switch --conf=/etc/masterha/app1.cnf --master_state=dead# 检查MHA状态masterha_check_status --conf=/etc/masterha/app1.cnf# MHA监控脚本示例#!/bin/bash# mha_monitor.shCONFIG_FILE="/etc/masterha/app1.cnf"LOG_FILE="/var/log/masterha/monitor.log"while true; do status=$(masterha_check_status --conf=$CONFIG_FILE 2>&1) if [[ $status != *"alive"* ]]; then echo "$(date): MHA manager is not running, restarting..." >> $LOG_FILE nohup masterha_manager --conf=$CONFIG_FILE >> $LOG_FILE 2>&1 & fi sleep 30doneOrchestrator管理工具

Orchestrator部署配置:

// orchestrator.conf.json{ "Debug": false, "EnableSyslog": false, "MySQLTopologyUser": "orchestrator", "MySQLTopologyPassword": "orchestrator_password", "MySQLTopologyCredentialsConfigFile": "", "MySQLTopologySSLPrivateKeyFile": "", "MySQLTopologySSLCertFile": "", "MySQLTopologySSLCAFile": "", "MySQLTopologySSLSkipVerify": true, "MySQLTopologyUseMutualTLS": false, "MySQLOrchestratorHost": "127.0.0.1", "MySQLOrchestratorPort": 3306, "MySQLOrchestratorDatabase": "orchestrator", "MySQLOrchestratorUser": "orchestrator", "MySQLOrchestratorPassword": "orchestrator_password", "RaftEnabled": true, "RaftDataDir": "/var/lib/orchestrator", "RaftBind": "192.168.1.100", "DefaultRaftPort": 10008, "AutoPseudoGTID": false, "DetectClusterAliasQuery": "SELECT SUBSTRING_INDEX(@@hostname, '.', 1)", "DetectInstanceAliasQuery": "SELECT @@hostname", "RecoveryPeriodBlockSeconds": 3600, "RecoveryIgnoreHostnameFilters": [], "PromotionIgnoreHostnameFilters": [], "ApplyMySQLPromotionAfterMasterFailover": true, "PreFailoverProcesses": [ "echo 'Will recover from {failureType} on {failureCluster}' >> /tmp/recovery.log" ], "PostFailoverProcesses": [ "echo 'Recovered from {failureType} on {failureCluster}. Failed: {failedHost}:{failedPort}; Successor: {successorHost}:{successorPort}' >> /tmp/recovery.log" ]}Orchestrator API使用:

# 通过REST API管理集群# 发现并注册实例curl "http://orchestrator:3000/api/discover/192.168.1.101/3306"# 查看集群拓扑curl "http://orchestrator:3000/api/cluster/myapp"# 手动故障转移curl "http://orchestrator:3000/api/force-master-failover/myapp"# 查看恢复信息curl "http://orchestrator:3000/api/audit-recovery"# 维护模式curl "http://orchestrator:3000/api/maintenance/myapp/begin"curl "http://orchestrator:3000/api/maintenance/myapp/end"基于Keepalived的VIP方案

Keepalived配置:

# keepalived.confglobal_defs { router_id MYSQL_HA}vrrp_script chk_mysql { script "/usr/bin/mysqlchk" interval 2 weight 2 fall 2 rise 2}vrrp_instance VI_1 { state BACKUP interface eth0 virtual_router_id 51 priority 100 advert_int 1 authentication { auth_type PASS auth_pass 1111 } virtual_ipaddress { 192.168.1.200 } track_script { chk_mysql } notify_master "/etc/keepalived/notify.sh master" notify_backup "/etc/keepalived/notify.sh backup" notify_fault "/etc/keepalived/notify.sh fault"}MySQL健康检查脚本:

#!/bin/bash# mysqlchk - MySQL健康检查脚本MYSQL_HOST="localhost"MYSQL_PORT="3306"MYSQL_USER="health_check"MYSQL_PASS="health_check_password"MYSQL_CMD="/usr/bin/mysql"# 检查MySQL是否可连接$MYSQL_CMD -h $MYSQL_HOST -P $MYSQL_PORT -u $MYSQL_USER -p$MYSQL_PASS -e "SELECT 1;" > /dev/null 2>&1if [ $? -eq 0 ]; then # 检查复制状态(如果是从库) SLAVE_STATUS=$($MYSQL_CMD -h $MYSQL_HOST -P $MYSQL_PORT -u $MYSQL_USER -p$MYSQL_PASS -e "SHOW SLAVE STATUS\G" 2>/dev/null) if [ -n "$SLAVE_STATUS" ]; then # 是从库,检查复制状态 IO_RUNNING=$(echo "$SLAVE_STATUS" | grep "Slave_IO_Running:" | awk '{print $2}') SQL_RUNNING=$(echo "$SLAVE_STATUS" | grep "Slave_SQL_Running:" | awk '{print $2}') SECONDS_BEHIND=$(echo "$SLAVE_STATUS" | grep "Seconds_Behind_Master:" | awk '{print $2}') if [ "$IO_RUNNING" = "Yes" ] && [ "$SQL_RUNNING" = "Yes" ] && [ "$SECONDS_BEHIND" -lt 60 ]; then exit 0 # 健康 else exit 1 # 不健康 fi else # 是主库,直接健康 exit 0 fielse exit 1 # MySQL不可连接fi状态切换通知脚本:

#!/bin/bash# notify.sh - 状态切换通知TYPE=$1VIP="192.168.1.200"LOG_FILE="/var/log/keepalived.log"log() { echo "$(date): $1" >> $LOG_FILE}case $TYPE in master) log "切换为MASTER状态,绑定VIP: $VIP" # 这里可以添加提升为主库的逻辑 # 比如设置read_only=OFF,通知应用等 mysql -e "SET GLOBAL read_only=OFF;" ;; backup) log "切换为BACKUP状态,释放VIP" # 设置只读模式 mysql -e "SET GLOBAL read_only=ON;" ;; fault) log "进入FAULT状态" ;; *) log "未知状态: $TYPE" ;;esac高可用架构选型指南

架构选型矩阵:

| 方案 | 适用场景 | 优点 | 缺点 | 复杂度 |

|---|---|---|---|---|

| 主从+VIP | 中小型应用,预算有限 | 简单可靠,成本低 | 手动切换,监控复杂 | 低 |

| MHA | 中型应用,需要自动故障转移 | 自动故障转移,成熟稳定 | 需要额外管理节点 | 中 |

| Orchestrator | 复杂拓扑,需要灵活管理 | 拓扑感知,API丰富 | 配置复杂,学习成本高 | 高 |

| MySQL InnoDB Cluster | MySQL 8.0,原生高可用 | 官方方案,集成度高 | 版本要求高,资源消耗大 | 中 |

| 云数据库 | 快速部署,免运维 | 全托管,自动备份 | 成本较高,厂商锁定 | 低 |

选型考虑因素:

-- 业务需求评估-- 1. RTO(恢复时间目标)SELECT CASE WHEN rto_requirement <= 30 THEN '需要自动故障转移' WHEN rto_requirement <= 300 THEN '半自动故障转移' ELSE '手动故障转移可接受' END as ha_levelFROM business_requirements;-- 2. RPO(数据恢复点目标)SELECT CASE WHEN rpo_requirement = 0 THEN '需要同步复制' WHEN rpo_requirement <= 1 THEN '需要半同步复制' WHEN rpo_requirement <= 60 THEN '异步复制可接受' ELSE '数据丢失可接受' END as data_protection_levelFROM business_requirements;-- 3. 读写分离需求SELECT CASE WHEN read_ratio > 0.8 THEN '需要强大的读写分离' WHEN read_ratio > 0.5 THEN '需要基础读写分离' ELSE '读写分离非必需' END as read_write_separationFROM workload_analysis;3. 数据库架构设计

读写分离架构设计

应用层读写分离:

// Java应用层读写分离示例@Componentpublic class DataSourceRouter { @Value("${datasource.master.url}") private String masterUrl; @Value("${datasource.slave.url}") private String slaveUrl; private ThreadLocal<Boolean> readOnly = new ThreadLocal<>(); public void setReadOnly(boolean readOnly) { this.readOnly.set(readOnly); } public DataSource getDataSource() { if (Boolean.TRUE.equals(readOnly.get())) { return createDataSource(slaveUrl); } else { return createDataSource(masterUrl); } } // AOP切面自动设置读写分离 @Aspect @Component public class ReadWriteSeparationAspect { @Around("@annotation(org.springframework.transaction.annotation.Transactional)") public Object handleTransaction(ProceedingJoinPoint joinPoint) throws Throwable { Transactional transactional = ((MethodSignature) joinPoint.getSignature()) .getMethod().getAnnotation(Transactional.class); if (transactional.readOnly()) { DataSourceContextHolder.setReadOnly(true); } try { return joinPoint.proceed(); } finally { DataSourceContextHolder.clear(); } } }}中间件读写分离:

# ShardingSphere配置示例# config-sharding.yamldataSources: master_ds: url: jdbc:mysql://master:3306/db?serverTimezone=UTC&useSSL=false username: root password: password connectionTimeoutMilliseconds: 30000 idleTimeoutMilliseconds: 60000 maxLifetimeMilliseconds: 1800000 maxPoolSize: 50 slave_ds_0: url: jdbc:mysql://slave0:3306/db?serverTimezone=UTC&useSSL=false username: root password: password connectionTimeoutMilliseconds: 30000 idleTimeoutMilliseconds: 60000 maxLifetimeMilliseconds: 1800000 maxPoolSize: 50 slave_ds_1: url: jdbc:mysql://slave1:3306/db?serverTimezone=UTC&useSSL=false username: root password: password connectionTimeoutMilliseconds: 30000 idleTimeoutMilliseconds: 60000 maxLifetimeMilliseconds: 1800000 maxPoolSize: 50rules:- !LOAD_BALANCE loadBalancers: round_robin: type: ROUND_ROBIN dataSources: read_ds: dataSourceNames: - slave_ds_0 - slave_ds_1 loadBalancerName: round_robin - !SINGLE defaultDataSource: master_ds loadBalancers: round_robin: type: ROUND_ROBIN分库分表策略与实现

水平分表策略:

-- 用户表按ID范围分表-- 创建分表CREATE TABLE users_0000 LIKE users_template;CREATE TABLE users_0001 LIKE users_template;CREATE TABLE users_0002 LIKE users_template;-- ... 创建更多分表-- 分表路由函数DELIMITER //CREATE FUNCTION get_user_table_name(user_id BIGINT)RETURNS VARCHAR(64)DETERMINISTICBEGIN DECLARE table_suffix VARCHAR(4); SET table_suffix = LPAD(MOD(user_id, 16), 4, '0'); RETURN CONCAT('users_', table_suffix);END //DELIMITER ;-- 分表查询示例SET @user_id = 123456;SET @table_name = get_user_table_name(@user_id);SET @sql = CONCAT('SELECT * FROM ', @table_name, ' WHERE user_id = ?');PREPARE stmt FROM @sql;EXECUTE stmt USING @user_id;DEALLOCATE PREPARE stmt;垂直分库设计:

-- 业务垂直拆分-- 用户库CREATE DATABASE user_center;USE user_center;CREATE TABLE users ( user_id BIGINT PRIMARY KEY, username VARCHAR(50), email VARCHAR(100), password_hash VARCHAR(255), created_at TIMESTAMP);CREATE TABLE user_profiles ( user_id BIGINT PRIMARY KEY, real_name VARCHAR(100), avatar_url VARCHAR(500), bio TEXT);-- 订单库CREATE DATABASE order_center;USE order_center;CREATE TABLE orders ( order_id BIGINT PRIMARY KEY, user_id BIGINT, -- 跨库关联 total_amount DECIMAL(12,2), status VARCHAR(20), created_at TIMESTAMP);CREATE TABLE order_items ( item_id BIGINT PRIMARY KEY, order_id BIGINT, product_id BIGINT, quantity INT, price DECIMAL(10,2));-- 商品库CREATE DATABASE product_center;USE product_center;CREATE TABLE products ( product_id BIGINT PRIMARY KEY, product_name VARCHAR(200), category_id INT, price DECIMAL(10,2), stock_quantity INT);数据拆分:垂直拆分与水平拆分

垂直拆分实施:

-- 原始大表CREATE TABLE user_comprehensive ( user_id BIGINT PRIMARY KEY, -- 基础信息 username VARCHAR(50), email VARCHAR(100), password_hash VARCHAR(255), -- 个人信息 real_name VARCHAR(100), id_card VARCHAR(20), phone VARCHAR(20), -- 扩展信息 education VARCHAR(50), occupation VARCHAR(50), income_level INT, -- 行为信息 last_login_time TIMESTAMP, login_count INT, -- 其他字段... created_at TIMESTAMP, updated_at TIMESTAMP);-- 垂直拆分后-- 用户基础表(高频访问)CREATE TABLE users_basic ( user_id BIGINT PRIMARY KEY, username VARCHAR(50), email VARCHAR(100), password_hash VARCHAR(255), last_login_time TIMESTAMP, login_count INT, created_at TIMESTAMP);-- 用户详情表(低频访问)CREATE TABLE users_detail ( user_id BIGINT PRIMARY KEY, real_name VARCHAR(100), id_card VARCHAR(20), phone VARCHAR(20), education VARCHAR(50), occupation VARCHAR(50), income_level INT, updated_at TIMESTAMP);-- 创建索引优化查询ALTER TABLE users_basic ADD INDEX idx_username (username);ALTER TABLE users_basic ADD INDEX idx_email (email);ALTER TABLE users_detail ADD INDEX idx_phone (phone);水平拆分策略:

-- 时间范围分表(适用于时间序列数据)-- 按月分表CREATE TABLE logs_2023_01 LIKE logs_template;CREATE TABLE logs_2023_02 LIKE logs_template;CREATE TABLE logs_2023_03 LIKE logs_template;-- 时间分表管理存储过程DELIMITER //CREATE PROCEDURE create_next_month_table()BEGIN DECLARE next_month VARCHAR(7); DECLARE table_name VARCHAR(64); DECLARE create_sql TEXT; SET next_month = DATE_FORMAT(DATE_ADD(NOW(), INTERVAL 1 MONTH), '%Y_%m'); SET table_name = CONCAT('logs_', next_month); SET create_sql = CONCAT('CREATE TABLE IF NOT EXISTS ', table_name, ' LIKE logs_template'); PREPARE stmt FROM create_sql; EXECUTE stmt; DEALLOCATE PREPARE stmt; -- 记录创建日志 INSERT INTO table_creation_log (table_name, created_at) VALUES (table_name, NOW());END //DELIMITER ;-- 地理分表(适用于地域性数据)CREATE TABLE users_north LIKE users_template; -- 北方用户CREATE TABLE users_south LIKE users_template; -- 南方用户CREATE TABLE users_east LIKE users_template; -- 东方用户 CREATE TABLE users_west LIKE users_template; -- 西方用户-- 基于业务特征分表CREATE TABLE users_vip LIKE users_template; -- VIP用户CREATE TABLE users_normal LIKE users_template; -- 普通用户CREATE TABLE users_trial LIKE users_template; -- 试用用户分布式ID生成方案

数据库序列方案:

-- 基于数据库的ID生成器CREATE TABLE sequence_generator ( sequence_name VARCHAR(50) PRIMARY KEY, current_value BIGINT NOT NULL DEFAULT 0, step INT NOT NULL DEFAULT 1, updated_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP ON UPDATE CURRENT_TIMESTAMP);-- 获取下一个ID的存储过程DELIMITER //CREATE FUNCTION next_id(seq_name VARCHAR(50))RETURNS BIGINTBEGIN DECLARE current_val BIGINT; DECLARE retry_count INT DEFAULT 0; DECLARE max_retries INT DEFAULT 3; retry_loop: WHILE retry_count < max_retries DO -- 获取当前值 SELECT current_value INTO current_val FROM sequence_generator WHERE sequence_name = seq_name; IF current_val IS NULL THEN -- 初始化序列 INSERT INTO sequence_generator (sequence_name, current_value) VALUES (seq_name, 1) ON DUPLICATE KEY UPDATE current_value = 1; SET current_val = 1; END IF; -- 尝试更新 UPDATE sequence_generator SET current_value = current_value + step, updated_at = CURRENT_TIMESTAMP WHERE sequence_name = seq_name AND current_value = current_val; IF ROW_COUNT() = 1 THEN RETURN current_val + 1; END IF; SET retry_count = retry_count + 1; DO SLEEP(0.01); -- 短暂等待后重试 END WHILE; -- 重试失败,抛出异常 SIGNAL SQLSTATE '45000' SET MESSAGE_TEXT = 'Failed to generate sequence ID';END //DELIMITER ;Snowflake算法实现:

-- Snowflake ID生成器表CREATE TABLE snowflake_worker ( worker_id INT PRIMARY KEY, datacenter_id INT NOT NULL, worker_name VARCHAR(100), last_timestamp BIGINT, sequence BIGINT DEFAULT 0, created_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP);-- Snowflake ID生成函数DELIMITER //CREATE FUNCTION snowflake_next_id(worker_id INT)RETURNS BIGINTBEGIN DECLARE epoch BIGINT DEFAULT 1609459200000; -- 2021-01-01 DECLARE current_ms BIGINT; DECLARE last_ms BIGINT; DECLARE sequence_val BIGINT; DECLARE datacenter_id_val INT; -- 获取worker信息 SELECT last_timestamp, sequence, datacenter_id INTO last_ms, sequence_val, datacenter_id_val FROM snowflake_worker WHERE worker_id = worker_id FOR UPDATE; -- 加锁防止并发 -- 计算当前时间戳 SET current_ms = (UNIX_TIMESTAMP(NOW(3)) * 1000); IF current_ms < last_ms THEN SIGNAL SQLSTATE '45000' SET MESSAGE_TEXT = 'Clock moved backwards'; END IF; IF current_ms = last_ms THEN SET sequence_val = (sequence_val + 1) & 4095; -- 12位序列号,最大4095 IF sequence_val = 0 THEN -- 序列号耗尽,等待下一毫秒 SET current_ms = wait_next_ms(last_ms); END IF; ELSE SET sequence_val = 0; END IF; -- 更新worker状态 UPDATE snowflake_worker SET last_timestamp = current_ms, sequence = sequence_val WHERE worker_id = worker_id; -- 生成ID: 时间戳(41位) + 数据中心ID(5位) + 工作节点ID(5位) + 序列号(12位) RETURN ((current_ms - epoch) << 22) | (datacenter_id_val << 17) | (worker_id << 12) | sequence_val;END //CREATE FUNCTION wait_next_ms(last_ms BIGINT)RETURNS BIGINTBEGIN DECLARE current_ms BIGINT; SET current_ms = (UNIX_TIMESTAMP(NOW(3)) * 1000); WHILE current_ms <= last_ms DO SET current_ms = (UNIX_TIMESTAMP(NOW(3)) * 1000); END WHILE; RETURN current_ms;END //DELIMITER ;数据迁移与同步方案

在线数据迁移:

-- 双写迁移方案-- 1. 准备阶段:创建新表,建立双写机制CREATE TABLE users_new LIKE users_old;-- 2. 数据同步阶段:存量数据迁移INSERT INTO users_new SELECT * FROM users_old WHERE id > ? AND id <= ?; -- 分批迁移-- 3. 增量数据双写-- 应用程序同时写入users_old和users_new-- 4. 数据验证SELECT COUNT(*) as old_count, (SELECT COUNT(*) FROM users_new) as new_count, COUNT(*) - (SELECT COUNT(*) FROM users_new) as diffFROM users_old;-- 5. 切换阶段:停止写入旧表,全面使用新表-- 6. 清理阶段:删除旧表-- 使用pt-online-schema-change工具-- pt-online-schema-change --alter="ADD COLUMN new_column INT" D=database,t=table --execute数据同步监控:

-- 创建数据同步监控表CREATE TABLE data_sync_monitor ( id BIGINT AUTO_INCREMENT PRIMARY KEY, sync_job VARCHAR(100) NOT NULL, source_count BIGINT, target_count BIGINT, diff_count BIGINT, sync_status ENUM('running', 'completed', 'failed'), started_at TIMESTAMP DEFAULT CURRENT_TIMESTAMP, completed_at TIMESTAMP NULL, error_message TEXT);-- 数据一致性检查存储过程DELIMITER //CREATE PROCEDURE check_data_consistency( IN source_table VARCHAR(64), IN target_table VARCHAR(64), IN primary_key VARCHAR(64))BEGIN DECLARE source_total BIGINT; DECLARE target_total BIGINT; DECLARE diff_count BIGINT; -- 检查记录总数 SET @source_sql = CONCAT('SELECT COUNT(*) INTO @source_count FROM ', source_table); PREPARE stmt1 FROM @source_sql; EXECUTE stmt1; DEALLOCATE PREPARE stmt1; SET @target_sql = CONCAT('SELECT COUNT(*) INTO @target_count FROM ', target_table); PREPARE stmt2 FROM @target_sql; EXECUTE stmt2; DEALLOCATE PREPARE stmt2; SET source_total = @source_count; SET target_total = @target_count; SET diff_count = ABS(source_total - target_total); -- 记录检查结果 INSERT INTO data_sync_monitor (sync_job, source_count, target_count, diff_count, sync_status) VALUES (CONCAT(source_table, '_to_', target_table), source_total, target_total, diff_count, CASE WHEN diff_count = 0 THEN 'completed' ELSE 'failed' END); -- 如果有差异,记录具体差异数据 IF diff_count > 0 THEN -- 这里可以添加更详细的差异分析 INSERT INTO data_diff_log (sync_job, diff_type, diff_details) VALUES (CONCAT(source_table, '_to_', target_table), 'count_mismatch', CONCAT('Source: ', source_total, ', Target: ', target_total)); END IF; END //DELIMITER ;总结

通过本篇的深入学习,我们掌握了MySQL高可用架构设计的核心知识:

- 主从复制:理解了复制原理、配置方法和故障处理

- 高可用方案:掌握了MHA、Orchestrator、Keepalived等工具的使用

- 架构设计:学会了读写分离、分库分表、分布式ID生成等高级技术

- 数据迁移:了解了在线数据迁移和同步的最佳实践

关键架构原则:

- 冗余设计:确保没有单点故障

- 自动故障转移:减少人工干预,提高可用性

- 监控告警:及时发现问题并处理

- 容量规划:提前规划系统扩展能力

- 数据安全:保证数据的一致性和完整性

架构演进路径:

- 单机架构 → 主从复制

- 主从复制 → 读写分离

- 读写分离 → 分库分表

- 分库分表 → 分布式数据库

动手练习:

- 搭建MySQL主从复制环境,并测试故障转移

- 配置MHA或Orchestrator实现自动故障转移

- 设计并实施读写分离架构

- 实践分库分表方案,解决单表数据量过大的问题

- 实现分布式ID生成方案

欢迎在评论区分享你的高可用架构实践经验和遇到的问题!