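Reading the Claude Code Source: The Memory Mechanism, Continued

Picking up where the previous post left off, this one fills in the details of how Auto Memory saves and recalls memories, and introduces auto-dream.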

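How Auto Memory saves

There are two paths:

- The main agent writes into the designated memory directory with the general-purpose Write/Edit tools.

- If the main agent wrote nothing during the turn, a background forked subagent runs one catch-up extraction after the turn ends.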

/**

* Whether the extract-memories background agent will run this session.

*

* The main agent's prompt always has full save instructions regardless of

* this gate — when the main agent writes memories, the background agent

* skips that range (hasMemoryWritesSince in extractMemories.ts); when it

* doesn't, the background agent catches anything missed.

*/

/**

* The extraction agent runs as a perfect fork of the main conversation — same

* system prompt, same message prefix. The main agent's system prompt always

* has full save instructions; when the main agent writes memories itself,

* extractMemories.ts skips that turn (hasMemoryWritesSince). This prompt

* fires only when the main agent didn't write, so the save-criteria here

* overlap the system prompt's harmlessly.

*/

The main agent writes memory

You have a persistent, file-based memory system at `{memoryDir}`. This directory already exists — write to it directly with the Write tool (do not run mkdir or check for its existence).

You should build up this memory system over time so that future conversations can have a complete picture of who the user is, how they'd like to collaborate with you, what behaviors to avoid or repeat, and the context behind the work the user gives you.

If the user explicitly asks you to remember something, save it immediately as whichever type fits best. If they ask you to forget something, find and remove the relevant entry.

{TYPES_SECTION_INDIVIDUAL}

{WHAT_NOT_TO_SAVE_SECTION}

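The background agent writes memory

The background agent can only write inside the memory directory (reads are unrestricted), and its number of turns is capped.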

You are now acting as the memory extraction subagent. Analyze the most recent ~{newMessageCount} messages above and use them to update your persistent memory systems.

Available tools: Read, Grep, Glob, read-only Bash, and Edit/Write for paths inside the memory directory only.

You have a limited turn budget. Edit requires a prior Read of the same file, so the efficient strategy is: turn 1 — issue all Read calls in parallel for every file you might update; turn 2 — issue all Write/Edit calls in parallel. Do not interleave reads and writes across multiple turns.

You MUST only use content from the last ~{newMessageCount} messages to update your persistent memories. Do not waste any turns attempting to investigate or verify that content further — no grepping source files, no reading code to confirm a pattern exists, no git commands.

## Existing memory files

{existingMemories}

Check this list before writing — update an existing file rather than creating a duplicate.

/**

* Creates a canUseTool function that allows Read/Grep/Glob (unrestricted),

* read-only Bash commands, and Edit/Write only for paths within the

* auto-memory directory. Shared by extractMemories and autoDream.

*/

// Well-behaved extractions complete in 2-4 turns (read → write).

// A hard cap prevents verification rabbit-holes from burning turns.

maxTurns: 5,
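To make the permission rule concrete, here is a minimal sketch of a path-scoped permission check. It is not the actual `canUseTool` implementation: the `Decision` type, the `createMemoryScopedCanUseTool` name, and the POSIX-only path normalization are all assumptions for illustration, and the read-only Bash branch is left out.

```typescript
// Hypothetical decision shape; the real return type is not shown in the post.
type Decision = { behavior: "allow" } | { behavior: "deny"; reason: string };

// Collapse "." and ".." segments of an absolute POSIX path.
function normalizePosix(p: string): string {
  const out: string[] = [];
  for (const seg of p.split("/")) {
    if (seg === "" || seg === ".") continue;
    if (seg === "..") out.pop();
    else out.push(seg);
  }
  return "/" + out.join("/");
}

// True only when filePath resolves to something strictly inside dir.
function isInsideDir(filePath: string, dir: string): boolean {
  const root = normalizePosix(dir);
  const target = normalizePosix(filePath);
  return target.startsWith(root === "/" ? "/" : root + "/");
}

const UNRESTRICTED_READ_TOOLS = new Set(["Read", "Grep", "Glob"]);

function createMemoryScopedCanUseTool(memoryDir: string) {
  return (toolName: string, input: { file_path?: string }): Decision => {
    if (UNRESTRICTED_READ_TOOLS.has(toolName)) return { behavior: "allow" };
    if (toolName === "Edit" || toolName === "Write") {
      const p = input.file_path;
      if (p !== undefined && p.startsWith("/") && isInsideDir(p, memoryDir)) {
        return { behavior: "allow" };
      }
      return { behavior: "deny", reason: "writes are limited to the memory directory" };
    }
    // Read-only Bash is allowed in the source; this sketch simply denies the rest.
    return { behavior: "deny", reason: "tool not available to the memory subagent" };
  };
}
```

Normalizing before the prefix check matters: without it, a path such as `<memoryDir>/../other.md` would pass a naive `startsWith` test.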

It is triggered by a hook after the current query's tool loop ends.

/**

* Extracts durable memories from the current session transcript

* and writes them to the auto-memory directory (~/.claude/projects/<path>/memory/).

*

* It runs once at the end of each complete query loop (when the model produces

* a final response with no tool calls) via handleStopHooks in stopHooks.ts.

*

* Uses the forked agent pattern (runForkedAgent) — a perfect fork of the main

* conversation that shares the parent's prompt cache.

*/

By default, an extraction is attempted on every turn.

// Only run extraction every N eligible turns (tengu_bramble_lintel, default 1).

// Trailing extractions (from stashed contexts) skip this check since they

// process already-committed work that should not be throttled.

/** When a call arrives during an in-progress run, we stash the context here

* and run one trailing extraction after the current one finishes. */

// If a call arrived while we were running, run a trailing extraction

// with the latest stashed context.
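The stash-and-trail behavior described in these comments can be sketched as a small coalescing runner. This is an illustration under assumptions, not the code in extractMemories.ts: `createCoalescingRunner` and its signature are hypothetical, and the sketch keeps draining while new contexts keep arriving, which may differ from the single trailing run the comment describes.

```typescript
// Runs `run` at most once at a time. Calls that arrive mid-run only stash
// their context; the newest stash is processed after the current run ends.
function createCoalescingRunner<C>(run: (context: C) => Promise<void>) {
  let inProgress = false;
  let stashed: { context: C } | undefined;

  return async function request(context: C): Promise<void> {
    if (inProgress) {
      stashed = { context }; // overwrite: only the latest context matters
      return;
    }
    inProgress = true;
    try {
      await run(context);
      // Trailing run(s) for whatever was stashed while we were busy.
      while (stashed !== undefined) {
        const next = stashed.context;
        stashed = undefined;
        await run(next);
      }
    } finally {
      inProgress = false;
    }
  };
}
```

Because a later stash overwrites an earlier one, three rapid calls produce two runs: the first context and the last one.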

If the main agent has already written memory itself during this range, the background extraction is skipped:

/**

* Returns true if any assistant message after the cursor UUID contains a

* Write/Edit tool_use block targeting an auto-memory path.

*

* The main agent's prompt has full save instructions — when it writes

* memories, the forked extraction is redundant.

*/
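A rough sketch of the check this comment describes, assuming simplified message shapes. The `Message` and block types, the `file_path` input field, and the prefix-based `isMemoryPath` helper are assumptions for illustration; only the overall behavior (scan assistant messages after a cursor for Write/Edit calls into the memory directory) comes from the comment.

```typescript
// Hypothetical, simplified transcript shapes.
type ToolUseBlock = { type: "tool_use"; name: string; input: { file_path?: string } };
type TextBlock = { type: "text"; text: string };
type Message = {
  uuid: string;
  role: "user" | "assistant";
  content: Array<ToolUseBlock | TextBlock>;
};

const MEMORY_WRITE_TOOLS = new Set(["Write", "Edit"]);

// Assumption: a memory path is anything under the memory directory prefix.
function isMemoryPath(filePath: string, memoryDir: string): boolean {
  const dir = memoryDir.endsWith("/") ? memoryDir : memoryDir + "/";
  return filePath.startsWith(dir);
}

function hasMemoryWritesSinceSketch(
  messages: Message[],
  cursorUuid: string | undefined,
  memoryDir: string,
): boolean {
  // Start right after the cursor; with no cursor (or an unknown one), scan everything.
  const cursorIndex =
    cursorUuid === undefined ? -1 : messages.findIndex(m => m.uuid === cursorUuid);
  for (const message of messages.slice(cursorIndex + 1)) {
    if (message.role !== "assistant") continue;
    for (const block of message.content) {
      if (
        block.type === "tool_use" &&
        MEMORY_WRITE_TOOLS.has(block.name) &&
        block.input.file_path !== undefined &&
        isMemoryPath(block.input.file_path, memoryDir)
      ) {
        return true;
      }
    }
  }
  return false;
}
```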

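What to save, and what not to save

Four types of memory are saved:

- user: who the user is, what they are good at, and how they prefer things explained

- feedback: the user's corrections or confirmations of the way you work

- project: project background that cannot be derived from the codebase, such as deadlines, freezes, or incident causes

- reference: entry points to external systems, such as a Linear project, a Grafana dashboard, or a Slack channel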

/**

* `## Types of memory` section for INDIVIDUAL-ONLY mode (single directory).

* No <scope> tags. Examples use plain `[saves X memory: …]`. Prose that

* only makes sense with a private/team split is reworded.

*/

export const TYPES_SECTION_INDIVIDUAL: readonly string[] = [

'## Types of memory',

'',

'There are several discrete types of memory that you can store in your memory system:',

'',

'<types>',

'<type>',

' <name>user</name>',

" <description>Contain information about the user's role, goals, responsibilities, and knowledge. Great user memories help you tailor your future behavior to the user's preferences and perspective. Your goal in reading and writing these memories is to build up an understanding of who the user is and how you can be most helpful to them specifically. For example, you should collaborate with a senior software engineer differently than a student who is coding for the very first time. Keep in mind, that the aim here is to be helpful to the user. Avoid writing memories about the user that could be viewed as a negative judgement or that are not relevant to the work you're trying to accomplish together.</description>",

" <when_to_save>When you learn any details about the user's role, preferences, responsibilities, or knowledge</when_to_save>",

" <how_to_use>When your work should be informed by the user's profile or perspective. For example, if the user is asking you to explain a part of the code, you should answer that question in a way that is tailored to the specific details that they will find most valuable or that helps them build their mental model in relation to domain knowledge they already have.</how_to_use>",

' <examples>',

" user: I'm a data scientist investigating what logging we have in place",

' assistant: [saves user memory: user is a data scientist, currently focused on observability/logging]',

'',

" user: I've been writing Go for ten years but this is my first time touching the React side of this repo",

" assistant: [saves user memory: deep Go expertise, new to React and this project's frontend — frame frontend explanations in terms of backend analogues]",

' </examples>',

'</type>',

'<type>',

' <name>feedback</name>',

' <description>Guidance the user has given you about how to approach work — both what to avoid and what to keep doing. These are a very important type of memory to read and write as they allow you to remain coherent and responsive to the way you should approach work in the project. Record from failure AND success: if you only save corrections, you will avoid past mistakes but drift away from approaches the user has already validated, and may grow overly cautious.</description>',

' <when_to_save>Any time the user corrects your approach ("no not that", "don\'t", "stop doing X") OR confirms a non-obvious approach worked ("yes exactly", "perfect, keep doing that", accepting an unusual choice without pushback). Corrections are easy to notice; confirmations are quieter — watch for them. In both cases, save what is applicable to future conversations, especially if surprising or not obvious from the code. Include *why* so you can judge edge cases later.</when_to_save>',

' <how_to_use>Let these memories guide your behavior so that the user does not need to offer the same guidance twice.</how_to_use>',

' <body_structure>Lead with the rule itself, then a **Why:** line (the reason the user gave — often a past incident or strong preference) and a **How to apply:** line (when/where this guidance kicks in). Knowing *why* lets you judge edge cases instead of blindly following the rule.</body_structure>',

' <examples>',

" user: don't mock the database in these tests — we got burned last quarter when mocked tests passed but the prod migration failed",

' assistant: [saves feedback memory: integration tests must hit a real database, not mocks. Reason: prior incident where mock/prod divergence masked a broken migration]',

'',

' user: stop summarizing what you just did at the end of every response, I can read the diff',

' assistant: [saves feedback memory: this user wants terse responses with no trailing summaries]',

'',

" user: yeah the single bundled PR was the right call here, splitting this one would've just been churn",

' assistant: [saves feedback memory: for refactors in this area, user prefers one bundled PR over many small ones. Confirmed after I chose this approach — a validated judgment call, not a correction]',

' </examples>',

'</type>',

'<type>',

' <name>project</name>',

' <description>Information that you learn about ongoing work, goals, initiatives, bugs, or incidents within the project that is not otherwise derivable from the code or git history. Project memories help you understand the broader context and motivation behind the work the user is doing within this working directory.</description>',

' <when_to_save>When you learn who is doing what, why, or by when. These states change relatively quickly so try to keep your understanding of this up to date. Always convert relative dates in user messages to absolute dates when saving (e.g., "Thursday" → "2026-03-05"), so the memory remains interpretable after time passes.</when_to_save>',

" <how_to_use>Use these memories to more fully understand the details and nuance behind the user's request and make better informed suggestions.</how_to_use>",

' <body_structure>Lead with the fact or decision, then a **Why:** line (the motivation — often a constraint, deadline, or stakeholder ask) and a **How to apply:** line (how this should shape your suggestions). Project memories decay fast, so the why helps future-you judge whether the memory is still load-bearing.</body_structure>',

' <examples>',

" user: we're freezing all non-critical merges after Thursday — mobile team is cutting a release branch",

' assistant: [saves project memory: merge freeze begins 2026-03-05 for mobile release cut. Flag any non-critical PR work scheduled after that date]',

'',

" user: the reason we're ripping out the old auth middleware is that legal flagged it for storing session tokens in a way that doesn't meet the new compliance requirements",

' assistant: [saves project memory: auth middleware rewrite is driven by legal/compliance requirements around session token storage, not tech-debt cleanup — scope decisions should favor compliance over ergonomics]',

' </examples>',

'</type>',

'<type>',

' <name>reference</name>',

' <description>Stores pointers to where information can be found in external systems. These memories allow you to remember where to look to find up-to-date information outside of the project directory.</description>',

' <when_to_save>When you learn about resources in external systems and their purpose. For example, that bugs are tracked in a specific project in Linear or that feedback can be found in a specific Slack channel.</when_to_save>',

' <how_to_use>When the user references an external system or information that may be in an external system.</how_to_use>',

' <examples>',

' user: check the Linear project "INGEST" if you want context on these tickets, that\'s where we track all pipeline bugs',

' assistant: [saves reference memory: pipeline bugs are tracked in Linear project "INGEST"]',

'',

" user: the Grafana board at grafana.internal/d/api-latency is what oncall watches — if you're touching request handling, that's the thing that'll page someone",

' assistant: [saves reference memory: grafana.internal/d/api-latency is the oncall latency dashboard — check it when editing request-path code]',

' </examples>',

'</type>',

'</types>',

'',

]

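Do not save anything that can be derived from the current project state or that goes stale quickly:

- architecture and directory structure

- git history

- debugging recipes

- temporary state from the current conversation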

export const WHAT_NOT_TO_SAVE_SECTION: readonly string[] = [

'- Code patterns, conventions, architecture, file paths, or project structure — these can be derived by reading the current project state.',

'- Git history, recent changes, or who-changed-what — `git log` / `git blame` are authoritative.',

'- Debugging solutions or fix recipes — the fix is in the code; the commit message has the context.',

'- Anything already documented in CLAUDE.md files.',

'- Ephemeral task details: in-progress work, temporary state, current conversation context.',

]

The prompt makes a point of stressing this:

These exclusions apply even when the user explicitly asks you to save.

How memories are written to disk

MEMORY.md is the index.

## How to save memories

Saving a memory is a two-step process:

**Step 1** — write the memory to its own file (e.g., `user_role.md`, `feedback_testing.md`) using this frontmatter format:

```markdown
---
name:
description:
type:
---
```

**Step 2** — add a pointer to that file in `MEMORY.md`. `MEMORY.md` is an index, not a memory — each entry should be one line, under ~150 characters: `- [Title](file.md) — one-line hook`. It has no frontmatter. Never write memory content directly into `MEMORY.md`.

- `MEMORY.md` is always loaded into your conversation context — lines after 200 will be truncated, so keep the index concise

- Keep the name, description, and type fields in memory files up-to-date with the content

- Organize memory semantically by topic, not chronologically

- Update or remove memories that turn out to be wrong or outdated

- Do not write duplicate memories. First check if there is an existing memory you can update before writing a new one.
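The two-step save and the 200-line cutoff can be sketched as three small pure functions. Everything here is illustrative: `MemoryEntry`, `renderMemoryFile`, `renderIndexLine`, and `truncateIndex` are hypothetical names, and the way the hook is shortened is an assumption (the prompt only asks for lines under ~150 characters).

```typescript
type MemoryType = "user" | "feedback" | "project" | "reference";

interface MemoryEntry {
  fileName: string; // e.g. "feedback_testing.md"
  name: string;
  description: string;
  type: MemoryType;
  body: string;
}

// Step 1: the memory file itself, frontmatter first.
function renderMemoryFile(entry: MemoryEntry): string {
  return [
    "---",
    `name: ${entry.name}`,
    `description: ${entry.description}`,
    `type: ${entry.type}`,
    "---",
    "",
    entry.body,
    "",
  ].join("\n");
}

// The dash used in the prompt's index format, written as an escape.
const HOOK_SEPARATOR = " \u2014 ";

// Step 2: a one-line pointer for MEMORY.md, shortened to fit maxChars.
function renderIndexLine(entry: MemoryEntry, maxChars = 150): string {
  const prefix = `- [${entry.name}](${entry.fileName})${HOOK_SEPARATOR}`;
  const room = maxChars - prefix.length;
  const hook =
    entry.description.length <= room
      ? entry.description
      : entry.description.slice(0, Math.max(0, room - 1)) + "…";
  return prefix + hook;
}

// What a loader that truncates after 200 lines would keep.
function truncateIndex(indexText: string, maxLines = 200): string {
  return indexText.split("\n").slice(0, maxLines).join("\n");
}
```

Keeping the index to one short line per memory is what makes the 200-line cutoff tolerable: the detail lives in the topic files, so truncation only ever drops pointers.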

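How Auto Memory recalls

The path is chosen by feature gate:

- An older path: inject the contents of MEMORY.md directly into the context.

  - It goes into the prepend, so the message list looks like [prepend(userContext), Q1, A1, Q2, A2, Q3].

  - The prepend is constructed on every user request. To keep the prefix stable, it is read straight from a cache, and it is recomputed only when that cache is cleared or when something like compaction happens.

  - The main agent then reads individual memory files directly, using the paths listed in MEMORY.md.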

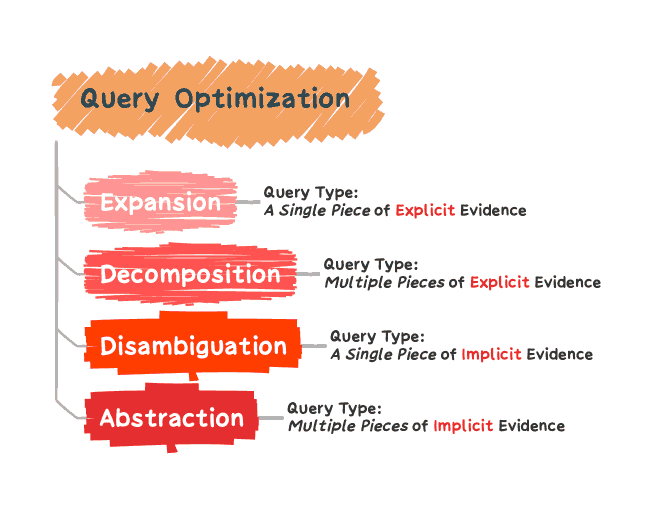

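- A newer path: prefetch relevant memories per query.

  - On each query, a side query (using a Sonnet model) prefetches relevant memories. Based on each memory file's frontmatter, it recalls at most 5 files. If anything is recalled, it is inserted into the message list after the current round's tool results return (as an attachment, which becomes a meta user message).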
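As a rough illustration of the newer path, the sketch below builds a manifest from frontmatter descriptions and sanitizes whatever filenames the side query returns. The names (`MemoryHeader`, `parseDescription`, `buildManifest`, `sanitizeSelection`) and the manifest line format are assumptions; the post only states that selection uses frontmatter and returns at most 5 files.

```typescript
interface MemoryHeader {
  filename: string;
  description: string;
}

// Pull the `description:` field out of a leading frontmatter block, if any.
function parseDescription(fileText: string): string | undefined {
  const match = /^---\n([\s\S]*?)\n---/.exec(fileText);
  if (!match) return undefined;
  for (const line of (match[1] ?? "").split("\n")) {
    const field = /^description:\s*(.*)$/.exec(line);
    if (field) return (field[1] ?? "").trim();
  }
  return undefined;
}

// One line per memory file; this format is an assumption.
function buildManifest(headers: MemoryHeader[]): string {
  return headers.map(h => `- ${h.filename}: ${h.description}`).join("\n");
}

// Keep only known filenames, drop duplicates, and cap the result.
function sanitizeSelection(
  selected: string[],
  headers: MemoryHeader[],
  limit = 5,
): string[] {
  const known = new Set(headers.map(h => h.filename));
  const out: string[] = [];
  for (const name of selected) {
    if (out.length >= limit) break;
    if (known.has(name) && !out.includes(name)) out.push(name);
  }
  return out;
}
```

Validating the model's answer against the known filenames guards against hallucinated names, and the cap holds even if the selector ignores the "up to 5" instruction.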

Main agent prompt

export const WHEN_TO_ACCESS_SECTION: readonly string[] = [

'## When to access memories',

'- When memories seem relevant, or the user references prior-conversation work.',

'- You MUST access memory when the user explicitly asks you to check, recall, or remember.',

'- If the user says to *ignore* or *not use* memory: proceed as if MEMORY.md were empty.',

]

Memory is positioned as a "historical snapshot", not as ground truth.

export const TRUSTING_RECALL_SECTION: readonly string[] = [

'## Before recommending from memory',

'A memory that names a specific function, file, or flag is a claim that it existed *when the memory was written*.',

'- If the memory names a file path: check the file exists.',

'- If the memory names a function or flag: grep for it.',

]

Searching past context

'## Searching past context',

'1. Search topic files in your memory directory:',

memSearch,

'2. Session transcript logs (last resort — large files, slow):',

transcriptSearch,

Triggering the side-query prefetch

At most one prefetch is started per user turn, and it does not block the main response.

The comment in query.ts:

// Fired once per user turn — the prompt is invariant across loop iterations,
// so per-iteration firing would ask sideQuery the same question N times.
using pendingMemoryPrefetch = startRelevantMemoryPrefetch(
  state.messages,
  state.toolUseContext,
)

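Triggering has preconditions:

- Skip when the prompt has too few words (a single word with no whitespace).

- Skip when the current session has already surfaced too much memory.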

const input = getUserMessageText(lastUserMessage)
// Single-word prompts lack enough context for meaningful term extraction
if (!input || !/\s/.test(input.trim())) {
  return undefined
}

const surfaced = collectSurfacedMemories(messages)
if (surfaced.totalBytes >= RELEVANT_MEMORIES_CONFIG.MAX_SESSION_BYTES) {
  return undefined
}

Prompt

You are selecting memories that will be useful to Claude Code as it processes a user's query. You will be given the user's query and a list of available memory files with their filenames and descriptions.

Return a list of filenames for the memories that will clearly be useful to Claude Code as it processes the user's query (up to 5). Only include memories that you are certain will be helpful based on their name and description.

- If you are unsure if a memory will be useful in processing the user's query, then do not include it in your list. Be selective and discerning.

- If there are no memories in the list that would clearly be useful, feel free to return an empty list.

- If a list of recently-used tools is provided, do not select memories that are usage reference or API documentation for those tools (Claude Code is already exercising them). DO still select memories containing warnings, gotchas, or known issues about those tools — active use is exactly when those matter.

Query: ${query}

Available memories:

${manifest}${toolsSection}

Auto-dream

// Background memory consolidation. Fires the /dream prompt as a forked

// subagent when time-gate passes AND enough sessions have accumulated.

//

// Gate order (cheapest first):

// 1. Time: hours since lastConsolidatedAt >= minHours (one stat)

// 2. Sessions: transcript count with mtime > lastConsolidatedAt >= minSessions

// 3. Lock: no other process mid-consolidation

It is also a forked agent. It triggers when:

- at least 24 hours have passed since the last consolidation

- at least 5 new sessions have accumulated in the meantime

const DEFAULTS: AutoDreamConfig = {
  minHours: 24,
  minSessions: 5,
}

It also excludes the current session:

// Exclude the current session (its mtime is always recent).
const currentSession = getSessionId()
sessionIds = sessionIds.filter(id => id !== currentSession)
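Putting the gate order together, a minimal sketch might look like the following. The `shouldConsolidate` function, the `SessionInfo` shape, and the `lockHeld` callback are hypothetical; only the ordering (time, then sessions, then lock), the default thresholds, and the exclusion of the current session come from the snippets above.

```typescript
interface AutoDreamConfigSketch {
  minHours: number;
  minSessions: number;
}

interface SessionInfo {
  id: string;
  mtimeMs: number; // last-modified time of the session transcript
}

const MS_PER_HOUR = 3_600_000;

function shouldConsolidate(args: {
  nowMs: number;
  lastConsolidatedAtMs: number;
  sessions: SessionInfo[];
  currentSessionId: string;
  lockHeld: () => boolean; // assumed to be the most expensive check
  config: AutoDreamConfigSketch;
}): boolean {
  const { nowMs, lastConsolidatedAtMs, sessions, currentSessionId, lockHeld, config } = args;

  // Gate 1: enough time since the last consolidation.
  const hoursSince = (nowMs - lastConsolidatedAtMs) / MS_PER_HOUR;
  if (hoursSince < config.minHours) return false;

  // Gate 2: enough sessions touched since then, not counting the current one.
  const fresh = sessions.filter(
    s => s.mtimeMs > lastConsolidatedAtMs && s.id !== currentSessionId,
  );
  if (fresh.length < config.minSessions) return false;

  // Gate 3: nobody else is mid-consolidation.
  return !lockHeld();
}
```

Ordering the gates from cheapest to most expensive means most calls return after a single timestamp comparison, without scanning transcripts or touching the lock.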

Prompt

export function buildConsolidationPrompt(

memoryRoot: string,

transcriptDir: string,

extra: string,

): string {

return `# Dream: Memory Consolidation

You are performing a dream — a reflective pass over your memory files. Synthesize what you've learned recently into durable, well-organized memories so that future sessions can orient quickly.

Memory directory: \`${memoryRoot}\`

${DIR_EXISTS_GUIDANCE}

Session transcripts: \`${transcriptDir}\` (large JSONL files — grep narrowly, don't read whole files)

---

## Phase 1 — Orient

- \`ls\` the memory directory to see what already exists

- Read \`${ENTRYPOINT_NAME}\` to understand the current index

- Skim existing topic files so you improve them rather than creating duplicates

- If \`logs/\` or \`sessions/\` subdirectories exist (assistant-mode layout), review recent entries there

## Phase 2 — Gather recent signal

Look for new information worth persisting. Sources in rough priority order:

1. **Daily logs** (\`logs/YYYY/MM/YYYY-MM-DD.md\`) if present — these are the append-only stream

2. **Existing memories that drifted** — facts that contradict something you see in the codebase now

3. **Transcript search** — if you need specific context (e.g., "what was the error message from yesterday's build failure?"), grep the JSONL transcripts for narrow terms:

\`grep -rn "<narrow term>" ${transcriptDir}/ --include="*.jsonl" | tail -50\`

Don't exhaustively read transcripts. Look only for things you already suspect matter.

## Phase 3 — Consolidate

For each thing worth remembering, write or update a memory file at the top level of the memory directory. Use the memory file format and type conventions from your system prompt's auto-memory section — it's the source of truth for what to save, how to structure it, and what NOT to save.

Focus on:

- Merging new signal into existing topic files rather than creating near-duplicates

- Converting relative dates ("yesterday", "last week") to absolute dates so they remain interpretable after time passes

- Deleting contradicted facts — if today's investigation disproves an old memory, fix it at the source

## Phase 4 — Prune and index

Update \`${ENTRYPOINT_NAME}\` so it stays under ${MAX_ENTRYPOINT_LINES} lines AND under ~25KB. It's an **index**, not a dump — each entry should be one line under ~150 characters: \`- [Title](file.md) — one-line hook\`. Never write memory content directly into it.

- Remove pointers to memories that are now stale, wrong, or superseded

- Demote verbose entries: if an index line is over ~200 chars, it's carrying content that belongs in the topic file — shorten the line, move the detail

- Add pointers to newly important memories

- Resolve contradictions — if two files disagree, fix the wrong one

---

Return a brief summary of what you consolidated, updated, or pruned. If nothing changed (memories are already tight), say so.${extra ? `\n\n## Additional context\n\n${extra}` : ''}`

}