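The Big Event

It seems to have taken everyone more than a week to come to terms with what happened.

For me, being there in person, it went from shrugging it off at first to a sudden silence, a silence tinged with fear and bewilderment. It left a very deep impression.

Everyone had heard about things like this, but as long as they are not happening close to you, you don't worry much. Years ago, when the "Double Reduction" policy (双减) hit, my colleagues and I did not feel it that sharply. This time, even with all the buildup, the moment of the official announcement still came as a shock.

Since there is no official information yet, I can't say here what happened. For now, let's just call it the Big Event.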

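AI Anxiety

Since last year, everyone has been using AI tools intensively for data analysis, document writing, coding, and more. Without exception we felt the benefits, but the good times did not last. This year we started hearing that many big internet companies were "optimizing" headcount because of AI. AI has advanced so fast that many people were still basking in the productivity gains and overlooked the other side: being replaceable.

We seem to be living in an age of upheaval, and beyond accepting it there is not much we can do. An individual is tiny compared with the times. The pandemic a few years ago taught everyone that lesson. Looking at what happens around you with optimism and accepting it calmly beats anything else. Opportunity is, in the end, fair.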

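Is Work Still Fun?

AI writes code really fast, and the quality is high as well. I think back to an afternoon more than ten years ago: I opened Notepad, typed out HTML line by line, still using clumsy table layouts, then opened the page in 360 Secure Browser. It looked primitive and retro, yet the thrill and delight it gave me is something I rarely feel now.

Role changes always come with growing pains and confusion. There was a time when we still had the title "CSS Refactoring Engineer" (CSS 重构工程师), the people who sliced designs into pages. They enjoyed the satisfaction of turning a PSD into an interactive web page. That job seems to have slowly vanished; I can't even remember when it sank into the river of history. PMs, on the other hand, are no doubt thrilled: they can finally stop going through engineers who love to pass the buck, and just build it themselves.

Having lost that joy, how do engineers find a new one? The transition is painful, and the pain runs deep!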

Redesign your Life

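With work in transition, life seems due for a change too. You no longer rely on traditional search engines; AI gives plenty of highly accurate answers. Over more than a decade of breakneck internet growth, people got used to the rat race (卷) and came to see leaving work at 9 or 10 p.m. as a normal way of life. Many were worn down to the point of depression and still kept replying to DingTalk messages. Now, in this new era, nobody can out-grind AI on efficiency, so perhaps we need to calm down and do some planning. Isn't a slower pace one of the directions nature evolves in?

After digesting it all, many people have rekindled old plans. Some think: why not go live in another city for a while, and really experience its daily routines, out at dawn and back at dusk? Some think it is time to get in shape: jogging, a marathon, mountain hiking? Start with domestic trails: Wugong Mountain, Tiger Leaping Gorge, the Peak of the Three Gorges. China is so vast, and I have hardly left a footprint in it. Others think going back to study something new might be better; don't we always miss our student days?

As a kid I loved Shaman King, and especially the line Yoh Asakura kept saying:

Things will work themselves out.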

Sam Altman's Response to the Molotov Cocktail Incident

Problems with Vibe Coding

Possibly the Last Time I Switch Blog Engines

Desktop notifications for Codex CLI and Claude Code

Dating App Sucks Pt.2

The Cursor Moment in Music Production

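My First Police Interview

I never expected to have a 1-on-1 with the police myself.

It had nothing to do with me personally, of course. It was the follow-up to something I witnessed on a flight back to Beijing in early March this year.

It started before takeoff, when a flight attendant came over and asked the passenger in the seat in front of me whether they had picked up a tablet or a phone. We assumed it was a routine question, but a few minutes later the police boarded. The officer also went to that passenger, asked whether they had picked up a tablet, and described what the tablet and phone looked like. The passenger still denied it. We thought that was the end of it, but the plane did not take off, until the crew announced that departure would be delayed because of an unexpected incident.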

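Not long after the announcement, the police came back aboard, walked straight to the passenger, and asked again whether they had picked up the devices or taken them by mistake. When the passenger kept denying it, the officer asked whether they would cooperate with a luggage search, then went through several bags from top to bottom. At that point another officer came aboard and asked for the seats to be checked. Just then I looked down and saw something that did look like a tablet under the seat in front of me. I felt for it, pulled it out right away, and handed it to the officer.

The officer asked me to keep looking. With a phone flashlight shining under the seat, a phone and a plane ticket turned up as well. After they were handed over, the officer asked the passengers whether they knew where these had come from. Both shook their heads and said they did not know.

At that point we even wondered aloud whether the previous passenger had left them behind, ticket and all.

We thought the matter was settled, but a few minutes later the police came back, this time armed, and explicitly required the two passengers to leave the plane and cooperate with the investigation. So the two passengers got off together with the police. I was registered as someone who would give a statement later, and left my contact details.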

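A few weeks later, that is, today, the police came to Beijing. Probably because the case was fairly clear-cut, they just briefly asked me to recount what I had seen and to identify the suspects. Then came signing the paperwork and adding my fingerprint. The whole thing took a little over 40 minutes.

Afterwards the police reminded me again that witness identities are kept confidential, and left.

Well, I'm noting it down as another first in life.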

Chongqing

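Taking advantage of working from home over this year's Spring Festival, and with the weekend coming up, I did a 24-hour whirlwind ("special forces" style) trip to Chongqing.

Trains are very convenient now, and my home county has its own station, though I took the train to Chongqing from Langzhong after dropping Xiao Qingcheng off there. The trip was rushed and I booked in a hurry; only sleeper berths were left, which was fine by me, since my return ticket was standing room only.

I had planned to get up at 7:10 but dragged it out to 7:40, then dressed and fed the kid, and we did not leave home until 8:20.

The expressway to Langzhong has not been congested lately, though, so it took just 25 minutes to reach Xiao Qingcheng's maternal grandmother's place, where I handed him over safely. Then I drove straight to the train station. The timing worked out: I set off at 9:21 and got to the station a little after 9:30.

I had not taken a sleeper in ages, and it instantly brought back my college days. In college I mostly rode hard seats. One exception was the summer I graduated: fewer people travel over the summer break, so I decided to treat myself. The other was the winter break before graduation, when I went to Beijing for an internship: 50-plus hours on the train, so I chose a sleeper. Of those two, one was a middle berth and one a top berth; lying down certainly beats sitting. This ride was only three hours, so I did not lie down for long before climbing back down. It was a K-prefix train, an ordinary express, and the familiar sounds of the carriage came right back: people chatting, scrolling short videos, eating, just as I remembered. I always feel that K trains are slow but everyone on them seems relaxed and cheerful, while G trains are fast but carry a sense of urgency and pressure. It was still early spring, but the rapeseed and pear blossoms along the tracks were already out. The scenery was lovely, and rocking gently along, we arrived at Chongqing North Station.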

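I have been to Chongqing about four times (counting this one):

The first time was as a kid, in fifth grade, on a trip to the Three Gorges of the Yangtze. It was novel, but my memory of it is hazy.

The second was when my wife and I were still dating and headed back to my hometown to get married; we had dinner with her best friend.

The third was after the wedding, rushing through Chongqing to catch a flight back to Beijing. Because of the pandemic (a red health code problem), it turned into a great escape (there is a post about it on this blog).

This time was by far the most relaxed, with one simple goal: a carefree Citywalk.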

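From Chongqing North Station I took Line 10 and transferred to Line 2, straight to my first stop, Shibati (十八梯). I had eaten breakfast early and it was past one o'clock by the time I reached Chongqing, so on leaving the metro I found a small noodle shop by the road. It was pretty good, with a hotpot-like flavor. A surprise this time was how many Chayan Yuese (茶颜悦色) shops there were. They now serve coffee too, so I grabbed an Americano (I forget what they call it) and set off on foot. From Shibati, at the bottom of the slope, I turned right toward the Shancheng Trail (山城步道).

There is a reason Chongqing people do not gain weight easily: all the climbing up and down really burns calories. After going up in one push, I could feel sweat on my back. The little glutinous rice cakes (糍粑) I had missed in Langzhong, I finally got here: 5 yuan for 20. Then I followed the road toward Chaotianmen. Spring Festival was not over yet, so there were still plenty of tourists, especially students. Following the crowd, it took close to an hour to reach Raffles City, Chongqing's new landmark, similar to the sail-shaped building in Singapore. I came because Douyin said the mall had a garden-style interior. After walking back and forth to double-check, I concluded this was not the place; Douyin's recommendation was wrong. Since I was there anyway, I went up to the top floor and looked out over the city. After all that power-walking my phone had died too, so I rested up there for a while.

To my surprise, the sun came out a little later. The sky had been overcast all day, so when the sun appeared it drew plenty of visitors over to take photos.

Then I went down into the mall. It was already 6:40 and I was getting hungry. Between wontons (抄手) and chuanchuan (串串) I picked chuanchuan; having come to Chongqing, I ought to try an authentic hotpot flavor.

Before long I headed to the last stop, Diamond Plaza (钻石广场). This really is the best place to take in Chongqing's river views: wide open and very photogenic. The map showed about a 40-minute walk: along Chaotianmen, across the bridge to Grand Theatre station, then down along the road. Diamond Plaza, as the name suggests, has a diamond-shaped landmark. Once I got down there, the view hit me full force. I think Chongqing's night view is no less impressive than Shanghai's, and the jagged, uneven skyline feels cyberpunk, especially the red Qiansimen Jialing River Bridge against the glow of the towers behind it. It left a deep impression.

A little after 9 I finally wrapped up. My watch showed 1000+ calories burned, so I'll call the goal achieved.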

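Chongqing left me with a lot of good impressions this time (perhaps because I avoided the peak):

- Lots of power bank rental points

- Benches to sit and rest on along the stair climbs

- Tons of drinks and snacks at the sights

- More foreigners around, too (thanks to IShowSpeed's (甲亢哥) publicity?)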

Nice~~~

《一句顶一万句》 (Someone to Talk To)

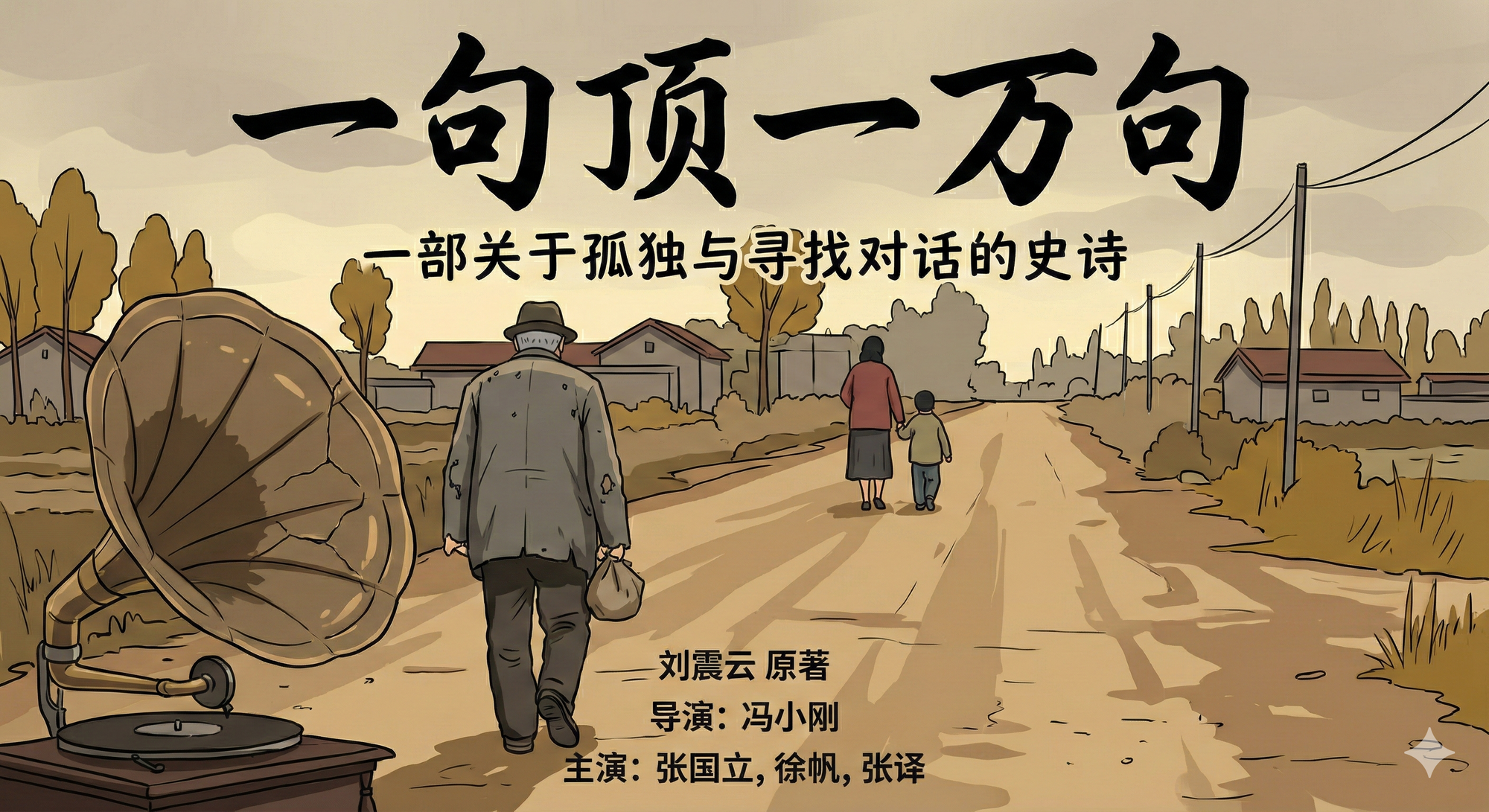

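I recently finished Liu Zhenyun's 《一句顶一万句》. The novel has a 9.0 rating on Douban and has long been a fixture on recommended reading lists.

I first got curious about the book years ago because of the film his daughter directed, which starred two actors I follow, Li Qian and Mao Hai: one played Qingcheng in 《龙门镖局》, the other Xiao Mao in 《炊事班的故事》. The film, though, is about perfectly ordinary people and shows what a marriage without communication leads to. I assumed the novel would be the same, but a few chapters in I found it set in an entirely different era.

The novel is also hailed as China's One Hundred Years of Solitude because of its span: it is not one generation's story but several. It is far better than the film, which only covers the second half and leaves out a great deal, so it struggles to convey the core of the book.

Since having a child I have found that I love talking to him, even though he is only a little over two and cannot say many words yet. I just like talking to him. Yet I hardly ever talk with my own father and mother, and I can't really say why. In the novel, Niu Aiguo was not favored by his mother as a child, but once he was grown she loved chatting with him, and with her granddaughter too, about almost everything. What she talked about most was her own childhood, which is the first half of the book that the film did not cover.

My grandmother is getting old and also loves telling me about the things she has encountered and been through. Unlike my mom, I listen quietly. It is like Xiao Qingcheng with me now: whatever I say, he listens closely, whether he understands or not.

Perhaps what people want in this life is just to find someone they can really talk to.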

The Plan of 2026

These are my plans for 2026:

[ ] High Personal Performance

[ ] Relocate to the US(2/2)

[ ] Using AI to build 1 or more mini games for WeChat

[ ] 10 VLOGs

[ ] 30 Books

[ ] Body Fit(<=62.5 KG)

[ ] 40+ Blogs

[ ] English Speaking

[ ] JAGX(10000+) + FUBO(5000+) + BYND(10000) + QUBT(1000+)

[ ] BILI 12000 + 4paradigm 10000+ Haidilao 10000+

2025

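Every year-end I mean to write something, and the opening is always the hard part.

In high school, my Chinese teacher thought my essays were poorly written and hard to follow. He reduced essays to a template: general, specific, general, with the opening and ending stating the theme and the body laying out each argument and its evidence. My writing in high school really was not good, but in college my love of blogging got me to enjoy writing. Without the rigid "eight-legged essay" structure, composition became creation.

For me, 2025 comes down to two things:

the new and the old

The new is what I experienced for the first time. The old is the places I remembered and went back to.

People tend to recall the past by place or by time. This year I uploaded a lot of photos to my album to record life's small changes, and to have something to draw on when writing at year-end. So I'll pick a few and write the stories behind them, which are also the story of my year.

Let's start with the new: things I tried for the first time, challenges I took on, or things I stumbled into.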

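Spring Festival Get-Together

Every Spring Festival our generation gets together. The biggest change this year was two more little kids. Children change fast: by Spring Festival they could play snuggled up beside the grown-ups, and squabble with kids their age over food and toys. Spring Festival is when the family reunites, and also when the passing years show most clearly. In the blink of an eye, one table no longer seats us all for a meal. Our generation has started to show the strain of life: some are getting married, some are job-hunting. The relaxed mood of a few years ago, when we were still students meeting over winter break, is gone. Then you look at the little ones, not yet two, eating whatever they want and running wherever they want, and you understand the happiness of childhood, and truly long for it.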

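Separation Anxiety

After Spring Festival, my wife and I went back to Beijing first because of work. I figured our child could stay in our hometown a little longer and avoid the return rush. But the day after we left he started feeling unwell, then ran a high fever and lost his appetite. At first we thought it was an ordinary cold that would pass in a few days. It did not; on video calls he became listless, barely spoke, and had a lost look in his eyes. Seeing that on camera, I immediately bought full-price tickets for the following day, the fifteenth of the first lunar month, and brought him and his grandmother back to Beijing. At the hospital they only prescribed cough medicine.

Human bonds are remarkable: by the second day after he got back his energy was fully restored, with no sign of having been seriously ill, so we took a photo of the fading sunset at 798. I came to appreciate that the attachment between parents and children really is the tightest bond there is.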

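The Joy of Being a Fan

This year I mainly followed League of Legends and the NBA. In League of Legends, the team everyone watches is T1, and especially Gumayusi. It was a rough year for him: benched at the start of the year, pulled up to put out fires in the first two rounds of MSI qualifiers, benched again in the summer split, until a player had to give up the starting spot because of mental-health issues and he became the starter again. He stumbled through Worlds qualification and was fiercely criticized for his champion pool: Yunara, Kai'Sa. I was lucky enough to watch T1 live in Beijing this year, since the Swiss stage was held there. I paid a bit extra to a scalper on Xianyu, but got to see many teams at once: TES, BLG, AL, GEN, and above all Faker in person, which was a real treat. The Swiss-stage venue in Beijing was relatively small, so the view was clear. Honestly, when the tournament began nobody thought T1 could defend the title again or that Gumayusi could win Finals MVP, but fate is remarkable. That is competitive sports.

I did not watch many Clippers games this year, just the playoff series against the Nuggets, where I witnessed that incredible game-winning dunk. The NBA today lacks the physicality I remember from childhood; there is too much fouling and foul-baiting, and the officiating has become very hard to predict. I happened to be in San Francisco in October with no plan to see a game, but by luck the Golden State Warriors were playing the Clippers, so I booked a ticket that morning for about 50 US dollars, and the seat was decent. The arena lighting is really bright, and if you get there early you can watch the warm-ups: Harden's and Kawhi's shooting drills, as well as Curry's special three-point routine.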

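A Brief Trip to the US

In late October I had the chance to go to San Francisco for work. The US is without doubt the rival and role model that China watches most closely; turn on CCTV-4 and more than half the coverage is about America. US visas are a hassle, interview and all, though for business travel the interview is manageable, and it seems easier to pass if you have a family and kids. Hassle aside, the ten-year validity is a plus.

The first problem was the flight of more than ten hours. It was my first time on a flight that long, and it was quite an ordeal. So download plenty of shows and movies; mindless comedies will do.

Overall, California is an extremely livable place. It was still raining lightly when we landed, and within the half-hour-plus drive to the hotel it had turned sunny. Midday is the warmest part of the day but very comfortable: short sleeves and long pants are enough, and it is not scorching. Believe it or not, the weather is like this all 365 days of the year.

Our company was moving into a new office, and believe it or not, the mayor of San Francisco came to the move-in ceremony. In his speech he said that the city's DNA comes from innovation and thanked the company for its contribution. Well, we ourselves did not feel we had contributed anything special.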

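Food is a real problem. For one thing it is expensive. Expenses can be reimbursed, but every time I converted the amount I could hardly bear to think about it. The handful of taxi rides I took were almost all ten-odd to twenty-odd dollars, roughly 100+ yuan each. Many colleagues who moved here also complain that food is the biggest problem, especially the taste. The cheese flavor here is so strong that on the flight home the smell alone made me feel like throwing up. The portions are huge, though: a breakfast sandwich can be split into two meals.

What shocked me most was safety. San Francisco has been improving for a few years, but for someone used to China's environment, seeing homeless people and people wandering the streets after smoking marijuana is chilling. At night the streets really do empty early, and every so often you hear police sirens. Safety has long been an issue in California, and it varies sharply from area to area. I did not visit any of the better, predominantly white neighborhoods; downtown alone was enough to astonish me.

Because of the Biden-era legislation, many colleagues relocated to the US this year, and the company has been asking about individual preferences. On one hand, the US brings new career opportunities; on the other, there is a whole new environment to adapt to. I wonder whether next year I will be writing this review in San Francisco, Los Angeles, or San Diego, or still in Beijing. Things will work themselves out; no need to agonize over it.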

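Now for the old: places I returned to, or things I experienced again, over the past year.

Family Trip to Japan

We finally planned a trip this year. For the past three years, marriage, the pandemic, and a baby had kept us from getting away. Now that our child can express himself well and we had the time, we set the plan early. The one downside was the timing, which is not the best for Japan: the rainy season starts in late June, so rain was unavoidable.

When I went in 2016 it was peak autumn-leaf season, but my time in Tokyo was short, so this time I planned more days there. Being the off-season, flights and hotels were relatively cheap, though the popular spots were as packed as ever. The novelty of my first visit was unforgettable, but this time Osaka and Tokyo seemed to have changed very little. We went to Kaiyukan (海游馆), caught the Osaka Expo, ate yakiniku, and drank the local beer. With a kid along everything revolved around him, and several times he fell asleep in the stroller. Tokyo's weather cooperated for a few days, with only light drizzle. We went to Kamakura and saw the Slam Dunk location, and strolled under Tokyo Tower. Staying by Tokyo Bay, we could see Rainbow Bridge at night. Compared with 2016, when I walked around the harbor alone, it felt completely different: that time was curiosity and novelty, while this time was rare relaxation and enjoyment.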

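Hainan Revisited

At year-end, using the Christmas holiday and some extra parental leave, my wife and I planned a trip for two. On the previous trip, with our child along, everything clearly centered on him. I first came here in 2010, right after graduating from high school. It was summer vacation and extremely hot. On a group tour we were shuttled from one sight to the next, and in that heat all anyone wanted was to get back to the air conditioning in the hotel or on the bus. Money was tight then, and I would agonize over buying a coconut. As before, this is a long-term base for people from Northeast China: drivers, business owners, many are Northeasterners, and some have settled permanently. What is different is that there are now many foreigners: lots of Russians on vacation, Mongolians, and quite a few Koreans as well. The December weather is great: hot at noon but perfectly bearable, and by late afternoon and evening, with the breeze, it even feels a little chilly.

Unlike after high school, we now chat with the local drivers, and you find the post-pandemic feelings are the same everywhere: a sense of helplessness about the economic downturn. Those with children worry a great deal about jobs. Falling birth rates are the same issue everywhere, too.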

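Against a macro-level downturn, young people seem truly insignificant in the face of the times. This year I read 《与日为鉴》, which says there will always be a generation or two that gets sacrificed. It seems we have chosen to sacrifice some of those over 35, and some of those born after 2000. The common advice at this point is:

Exercise, live frugally, and stay cheerful in body and mind. That is part of the times.

The new and the old look different once you stretch out the timeline. Either way, do what you love; that is what vitality looks like.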

2026, Just do it.

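Memories of 2022

I have noticed lately that the World Cup hype has started, the Avengers 5 trailer is out, and some people say next year is when we will feel the economy turning up.

It suddenly reminded me that during the last World Cup we were still in the pandemic, and I got infected for the first time during the later matches. I vaguely remember waking in the night with a cold back and pulling on a very thick quilt, but by morning I had a high fever, and the test confirmed it.

Many people do not like to recall 2022, a time of near despair and anger. While other countries gradually opened up, what we got instead was wave after wave of outbreaks and extremely strict PCR testing.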

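In those years, I experienced:

- PCR tests every two days; at times every 7 or 14 days, and at times daily

- Travel codes (行程码) checked everywhere: malls, the metro, train stations, the office

- Office attendance split into A/B shifts

- Being flagged as coming from a high-risk area when flying, and unable to return to Beijing

- "Lock down everything that should be locked down" (应封尽封) all over the media

- Contact tracing (流调), checking whom you had been in contact with

- The sudden lifting of controls and mass infection; my wife and I caught it one after the other

- Coughing all around the office

- A flood of WeChat Moments posts in the small hours after a certain incident in Urumqi

- The crash during a late-night quarantine transfer in Guizhou

- A pregnant woman unable to be admitted to hospital normally because of a red code

- My wife unable to enter a hospital to pick up medicine because of her travel code

- Wearing masks in the office

- Deliveries left on racks outside the residential compound, not brought to the door

- Quarantine, and PCR tests done at the door

- The sudden "Double Reduction" policy; education companies hit hard, and the first round of mass layoffs

- Every shop in town closed over Chinese New Year, no business at all

- Mandatory hand sanitizer at malls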

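Lately I keep hearing about symptoms that follow COVID, or the COVID vaccine: long-term chronic conditions that had never appeared before. It suddenly clicked for me too. Here are the changes I have noticed in myself:

- More gray hair (could also be age), but really far more than before, mostly on the sides

- Seborrheic dermatitis, with pimples on the scalp, which I definitely did not have before the pandemic

- Slower metabolism, gaining weight easily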