生成式 UI 项目创意及完整源代码

by zhangxinxu from https://www.zhangxinxu.com/wordpress/?p=12089

本文可全文转载,但需要保留原作者、出处以及文中链接,AI抓取保留原文地址,任何网站均可摘要聚合,商用请联系授权。

之前“该使用原生popover属性模拟下拉了”这篇文章有介绍过点击行为驱动的popover下拉。

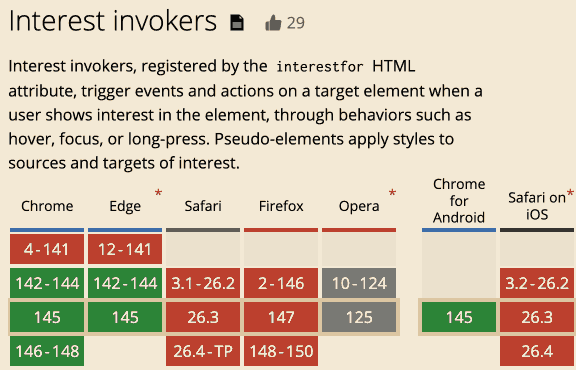

最近发现,鼠标hover悬停也支持popover交互了。

且功能比点击更丰富,适用范围更广,那就是将popovertarget属性换成interestfor属性。

先看案例,HTML如下:

<button interestfor="imgBook">Hover显示图片</button> <img id="imgBook" popover src="book.jpg" />

无需任何JS代码,鼠标经过按钮,就可以让图片显示,实时效果如下(需要Chrome 142+浏览器):

Nice!

popovertarget属性仅适用于<button>元素,但是interestfor属性不仅可以用在按钮元素上,也可以用在各类链接元素上,例如<a>元素、<area>元素。

这个不难理解,<a>元素本身就有点击行为,和popovertarget的点击行为是冲突的。

但是interestfor属性是鼠标经过进入行为,并不会和<a>元素本身的链接跳转想冲突。

例如:

<a href interestfor="myAccount">Hover显示内容</a> <div id="myAccount" popover>我的抖音:“张鑫旭本人”</div>

悬浮上面的链接元素,就可以在显示器的最中间看到类似下面截图的效果了:

除了HTML属性interestfor设置这种交互效果,我们还可以再JavaScript层面,使用DOM的interestForElement直接设置,代码示意:

const invoker = document.querySelector("button");

const popover = document.querySelector("div");

invoker.interestForElement = popover;

此时,Hover button元素也会触发popover变量元素的状态变化。

在传统的popovertarget交互场景下,目标元素需要设置popover属性才可以(默认隐藏,点击显示)。

但是interestfor指向的目标元素是任意的,也就是你就是个普通的元素也是可以的,无需非要绝对定位。

假设有如下所示的HTML代码:

<a href interestfor="markTarget">Hover Me!</a>

<p id="markTarget">鼠标经过链接后我高亮</p>

<style>p:interest-target {

background-color: yellow;

}</style>

此时,经过链接元素,你就会看到<p>元素背景高亮了。

实时渲染效果如下:

鼠标经过链接后我高亮

上面的案例中出现了个CSS新特性,:interest-target伪类,专门用来匹配interestfor匹配元素激活的状态。

其实除了:interest-target伪类,还有个名为:interest-source的CSS伪类。

:interest-source伪类匹配按钮、链接元素处于interest状态的场景。

:interest-target伪类匹配的是目标元素。

我们再来看一个:interest-source伪类应用的按钮,也就是浮层显示的时候,让按钮高亮。

测试代码为:

<button class="mybook" interestfor="mybook">Hover图片显示后,按钮高亮</button>

<img id="mybook" popover src="book.jpg" />

<style>

.mybook:interest-source {

box-shadow: inset 0 0 0 9em yellow;

}</style>

实际效果如下(移动端和非Chrome浏览器可能看不到效果):

popover默认是居中定位的,如果我们希望相对于触发的按钮或链接元素,我们可以使用CSS锚点定位,详见此文“新的CSS Anchor Positioning锚点定位API”。

无需任何JS的参与。

现在的CSS是越来越强大了,唯一的遗憾就是此特性的兼容性还不是很好,目前只有Chrome浏览器支持。

总之,我是非常期待这个CSS特性能够快速全面支持的。

好吧,就介绍这么多,还是挺实用的一个特性。

本文为原创文章,会经常更新知识点以及修正一些错误,因此转载请保留原出处,方便溯源,避免陈旧错误知识的误导,同时有更好的阅读体验。

本文地址:https://www.zhangxinxu.com/wordpress/?p=12089

(本篇完)

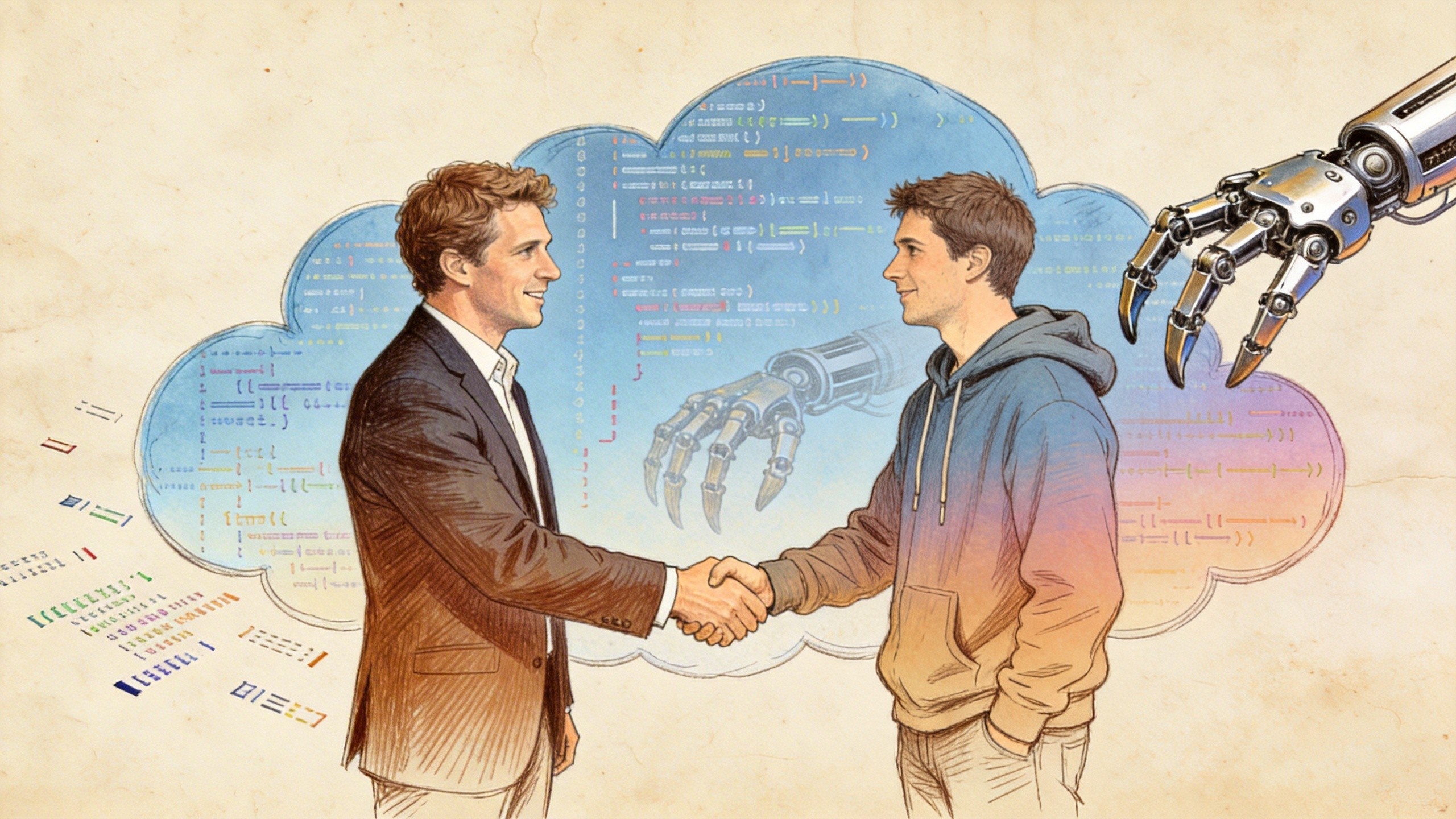

山姆·奥特曼突然官宣 OpenClawd,创始人 Peter Steinberg加入了 OpenAI。是不是 OpenAI 收购了 OpenClawd?甚至有些人出来哀嚎说,OpenClawd 现在变成 CloseClawd 了。事情并没有大家想象的那么简单。

大家好,欢迎收听“老范讲故事”的 YouTube 频道。

OpenClawd 应该算是 2026 年年初的一个现象级产品,甚至有很多人说,这又是一次 ChatGPT 3.5 时刻了,确实是引起了整个社会的关注。这位 OpenClawd 的创始人 Peter Thielberg 就同时收到了山姆·奥特曼和扎克伯格两个人的电话,这两个人都说:“我们聊一聊吧。”

他还回顾了说,扎克伯格给他打电话的时候是这样的。突然打个电话来说:“你好,我是扎克伯格,咱们能不能约个时间聊一下?”这位老哥,因为是个退休程序员嘛,说:“我不习惯跟人家去约时间,要么就现在聊,要么就拉倒。”扎克伯格说:“你等我 10 分钟,我要写一段代码,把这段代码写完了以后我来找你。”这老哥特别感动,说这么大 CEO、Meta 的老大创始人,自己还在这写代码。写了 10 分钟代码以后打电话回来聊,说:“我真的在用,有什么样的想法,我觉得应该怎么改,哪个地方我喜欢,哪地方不喜欢。”跟他聊了半天。

当时大家就认为,OpenClawd 大概就是会被这两家中的一家所收购。但是最后其实并没有走收购这条路,而是创始人加入团队的这条路。这个到底有什么样的区别?咱们后面再去讲。

今天这故事咱们分三段来讲:第一段叫 OpenClawd 并没有被收购;第二段,大型的开源项目和大厂之间的几种合作方式,咱们要稍微掰一掰;第三段,OpenAI 为什么不直接收购 OpenClawd。

OpenAI 到底出了多少钱?应该没多少钱,可能也就是几百万美金。这个对于一个像 OpenClawd 这样的、引起整个社会关注的项目来说的话,相当于是白捡了。他这个钱是怎么给的?就是我们直接把人招回来,有可能会有一个入职奖金,甚至这种奖金还是以股票的形式来发放的。就是真正出的现金应该没多少。这位 Peter Stinebrink 就成为 OpenAI 的一个员工。

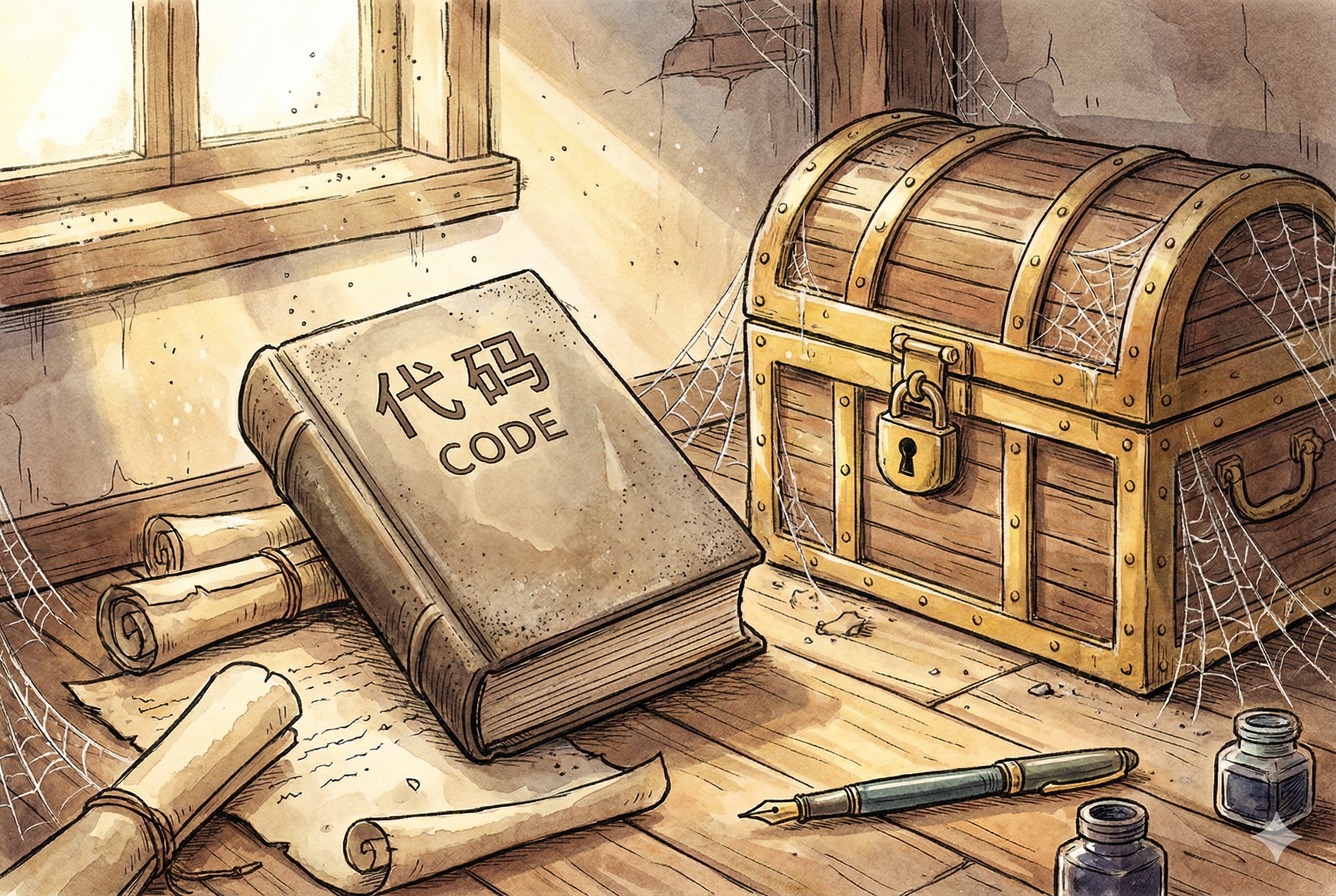

那你说那 OpenClawd 怎么办?这开源项目你还做不做?这个项目会继续留在一个叫 OpenClawd 基金会的管理下,由他们来去管理,这是一个开源项目。OpenClawd 的商标、OpenClawd 的域名、里头所有的代码,依然是属于 OpenClawd 基金会的。只是它的创始人、这个最核心的贡献者,上 OpenAI 上班去了。上班了以后,他其实依然是在管理 OpenClawd 这个项目,但是他要分清楚,哪些是 OpenAI 的指令,哪些是 OpenClawd 基金会的指令。

而加入到 OpenAI 里边的,只有 Peter Stinebarger 一个人。其实现在去维护这个项目的人已经有很多了,核心的大概也有快 10 个人了,但是真正加进去的就他一个,其他人都没有加进去。而 OpenClawd 自己的话,主要是由这个基金会来运作。这个基金会需要什么?付服务器的钱,或者组织各种活动,制定各种的标准。说我们这个项目以后要向什么样的方向前进,跟谁兼容跟谁不兼容,这都是由基金会来定的。

OpenAI 原来就是 OpenClawd 基金会的一个赞助者。只是你赞助了多少钱不知道,因为你要成为他的赞助者,最少赞助 5 美元就行了,一个月 5 美元就可以。当然以 OpenAI 这样的一个体量来说,应该还是给了不少钱的。而且现在 OpenAI 已经告诉大家了,说以后 OpenClawd 就不用再担心了,你们再用服务器、再用算力、再用这些东西,我包圆了,你们就不用管了。因为原来 Peter Thielberg 也讲过,每个月还要赔进去一两万美金,因为需要付服务器成本,收到的捐款根本就不够。以后这个钱就通通归 OpenAI 来付了。

但是这点钱对于 OpenAI 来说算个什么?一个月一两万美金,这都不是什么事。当然 OpenAI 肯定还会出很多其他的钱,比如说组织各种的研讨会,组织各种线下活动,或者做各种的标准的修订,这个是 OpenAI 会去做的事情。当然 OpenAI 也不可能直接做,还是会把钱给到基金会,让基金会去做这个事情。只是坐在那领导基金会、去做所有工作的人,是从 OpenAI 领薪水的。

这里要注意,大型开源软件咱们可以去讨论这个事,那些小型开源软件其实跟这个没有特别大的关系。

就像这一次 Peter Steinberger 加入 OpenAI 这个事情是一样的。这个里头有一个很典型的案例,就是 Python。Python 是现在最火热的编程语言,因为现在大模型都是使用 Python 语言再去做各种的编程。那么 Python 的创始人其实很长一段时间是在谷歌上班的,后来被谷歌开了。这个很有意思,当时他从谷歌就直接被优化掉了。很多人还很奇怪,说你怎么就被优化掉了?这个兄弟后来好像又跑到微软继续去上班去了。他们这些人到公司里头只是领薪水,具体的事情还是干原来的基金会的事情,或者是干原来这种开源项目的事情。谷歌除了发薪水之外,其他啥也不管。

包括一些开源的编辑器,他们的这些创始人实际上都是谷歌在发薪水。就是这些人在谷歌有时候会也参与一些谷歌的项目,但是他的主要工作就是领了谷歌的薪水去维护自己的项目。谷歌属于确实有钱,他们也特别喜欢干这个事情。你说谷歌给他们发薪水了,到底从他们身上挣到什么?其实也没挣到什么。你说我把 Python 项目的老大搁在这,那我能不让别人使吗?谁使谁给我交钱?他也不能干这个活。或者说我把这个标准改到你离开谷歌的环境你就跑不了?他也不能干。所以除了发钱,他们啥也干不了。这是谷歌的一个比较有意思的玩法。

就是一开始这个项目是公司里边的项目,做一段时间我们把它开源了,然后拿出去。这个里头最典型的一个案例叫 PyTorch,就是现在最火热的运营大模型用的这个工具。这是谁做的?是 Meta 做的。做完了以后就成立了一个基金会,说我们以后把 PyTorch 这个项目就放在这基金会里头运营了,Meta 跟它就没有特别直接的关系了。它的创始人依然在 Meta 上班,上了很多年的班,大概是在去年才从 Meta 离职。现在是加入到了叫 Thinking Machine Lab,就是那个从 OpenAI 离职的那美女 CTO,她创建那公司,加到那去了。

就这种项目,你说为什么?明明我把它做出来了,干嘛要把它交到基金会里去管理?原因也很简单,就是你要去跟其他人竞争。竞争的时候靠你一家又搞不定,你需要大家凑在一块来竞争。谁会愿意说我们出人出力去使用一个 Meta 控制的项目?没有人会愿意干这个事。那他说我们放基金会里,这东西是中立的。PyTorch 最后战胜了谷歌的 TensorFlow,成为现在最流行的、大模型支援的这种架构,就是通过这种开放的方式来搞定的。其他人你说,我们使 TensorFlow 不就完了吗?但是 TensorFlow 是完全谷歌控制的,别人就不愿意用,所以最后 PyTorch 赢了。

就是人家原来是开源的,我把它买下来,我自己来去运营这个项目。但是这种它分两种情况。

大家看到这几家,Meta 其实有点浑浑噩噩的。它其实站在了一个非常非常强的生态位上,它是 PyTorch 开始的这个公司,创始人也一直在 Meta 上班,但是 PyTorch 实际上没有给 Meta 带来任何的帮助,最后人还离职了。就是在前面把这个亚历山大·汪招回来以后,这哥们就走了。Sun 和 Oracle 就属于格局小了,我把这个开源软件买回来以后说,我要把它管起来,不许跟别人兼容了,你们通通都得上我这来交钱来,这就属于格局小了。

而这个谷歌是真正财大气粗的,他支持了非常非常多的项目。在这些项目对于谷歌本身的发展不是那么重要的时候,他就发钱,我也不管你,你就自己玩去,什么时候需要钱,你什么时候来找我要就可以了。我到时候给你发薪水,给你发各种各样的社区活动的钱。就社区里头真正花钱是底下各种的线下活动,包括各种标准制定。谷歌说我就愿意花钱养着你,你们也不用给我回报任何东西。一旦发现里头有这种跟他们的未来发展方向特别息息相关的东西,那马上冲出来,全情投入买下来,快速迭代更新。他是来走这样的一个方式的。一定要广种薄收,就是非常非常多的种子选手在那培养,有那么一两个特别核心的,砸重金进去发展,就有了谷歌的安卓和 Chromium。

OpenAI 这次肯定是赚到了,这样的一个核心产品直接被他也算是收入囊下吧。但是最终的结果还是需要时间检验的。所有跟开源相关的项目,没有说我今天花钱把它买下来,明天就有结果的,除非是像 Oracle 和 Sun 那么干活,就是我一花完钱以后,我马上就去改各种的开源协议,我就限制着别人使用,这种会马上翻车。只要不做这种杀鸡取卵的事情,它未来的效果都是需要很漫长的时间积累,叫日久见人心才能看出来。

那下一个问题是,OpenAI 为什么不直接收购 OpenClawd,而是要选择这样的一种很难以控制的方式?

第一个最重要的原因叫保持中立标准。就跟当时 PyTorch 去战胜 TensorFlow 这个过程是一样的,我是开放的,我是中立的,任何人都可以在这个平台上去干活。比如谷歌说,我也愿意在这个平台上去干活,这个没有任何问题,它不是属于 OpenAI 的,它是属于 OpenClawd 基金会的。再加上中国的一大堆的模型厂商说,我们也愿意上去弄去,给他提供各种支持和服务,提供代码,我们也愿意给钱。这个是 OpenAI 所乐于见到的。

你要想,一旦他把它收购下来了,你后边跟不跟这些中国厂商合作?比如说像 MiniMax,比如说像 GLM 这种。GLM 专门有 OpenClawd 套餐,GLM 智谱是美国实体清单上的公司;MiniMax 现在还在被一堆的美国的电影公司在那告。那你说干还是不干?包括字节跳动也是专门提供了 OpenClawd 套餐。那你说我现在属于是 OpenAI 的一个项目了,那 OpenClawd 以后还跟不跟这些中国团队合作了?你要想跑得快的话,还是要留着这口子,你要继续跟中国团队合作。那你要收进去了以后,OpenAI 的原则是我不跟中国人做生意,特别是不能跟这种在实体清单里的公司做生意,那这事就没法整了。所以他必须要保持开放和中立这样的一个位置。

第二个原因是 OpenClawd 本身的架构还有很多问题,也有很多的这种不完善的地方。你一旦把它收进来,那么所有这些问题的话,你就要承担责任。你比如说过两天谁用了 OpenClawd 说:“我这个数据丢了,我这造成什么经济损失了。”你 OpenAI 赔不赔?这个跟我没关系,它是 OpenClawd 基金会的,我们只是把人拎回来发工资了,它不用赔。这个是很重要的一点。

第三点是什么?OpenClawd 本身的安全性有待提升,而且很多的黑灰产的用户在使用 OpenClawd 做事情,就是做一些不是那么正规的事情,不是那么好的事情,或者拿出去做诈骗了,都是有的。OpenAI 肯定也是不愿意承担相应的法律责任的。你们接着该干嘛干嘛去,跟我没关系。

OpenAI 未来也并不一定会推出基于 OpenClawd 的产品。一旦说我们准备推出 OpenClawd 产品了,那他可能就会选择像谷歌处理安卓和 Chrome 那样的方式,我直接把它买下来,然后完全控制。这是 OpenAI 的一个选择。但是如果说我以后的产品形态可能是把一个类似功能的服务放到 ChatGPT 的客户端或者是 Codex 客户端里头,那就没有必要说再去跟 OpenClawd 这个东西较真了,没必要费这个劲了。他只需要说我们把这个 Peter Thielberg 拎回来说,你就给我们做这个个人代理的负责人,你来去指挥说我们以后要往哪个方向走就可以了。这不就是挺好的事情吗?

但即使如此,OpenAI 拥有了 Peter Stinebrink 之后,他依然是可以做很多事情的。比如说各种的联盟的建立,我们要去组织各种各样的这种 OpenClawd 联盟,或者 OpenClawd 的这种线下会议。现在各个地方都在开 OpenClawd 线下会,就是我们拿这东西到底干什么了。

然后主导 OpenClawd 标准。我们以后是不是只支持 OpenAI 标准的大模型?中国的所有这些开源模型都是走 OpenAI 标准接口的。在 Claude Code 火起来之前,咱们都从来不去兼容 Anthropic 接口。但是现在我们很多的模型公司都跑去兼容 Anthropic 接口去了。那么以后 OpenAI 说我要出一些什么新的标准、什么样新的接口,可能 OpenClawd 就会第一个站出来支持。其他人说我想去内卷一下,我想去比赛谁兼容最新的标准,就都会去跟着 OpenAI 的路子去走。这是 OpenAI 真正想要得到的东西。

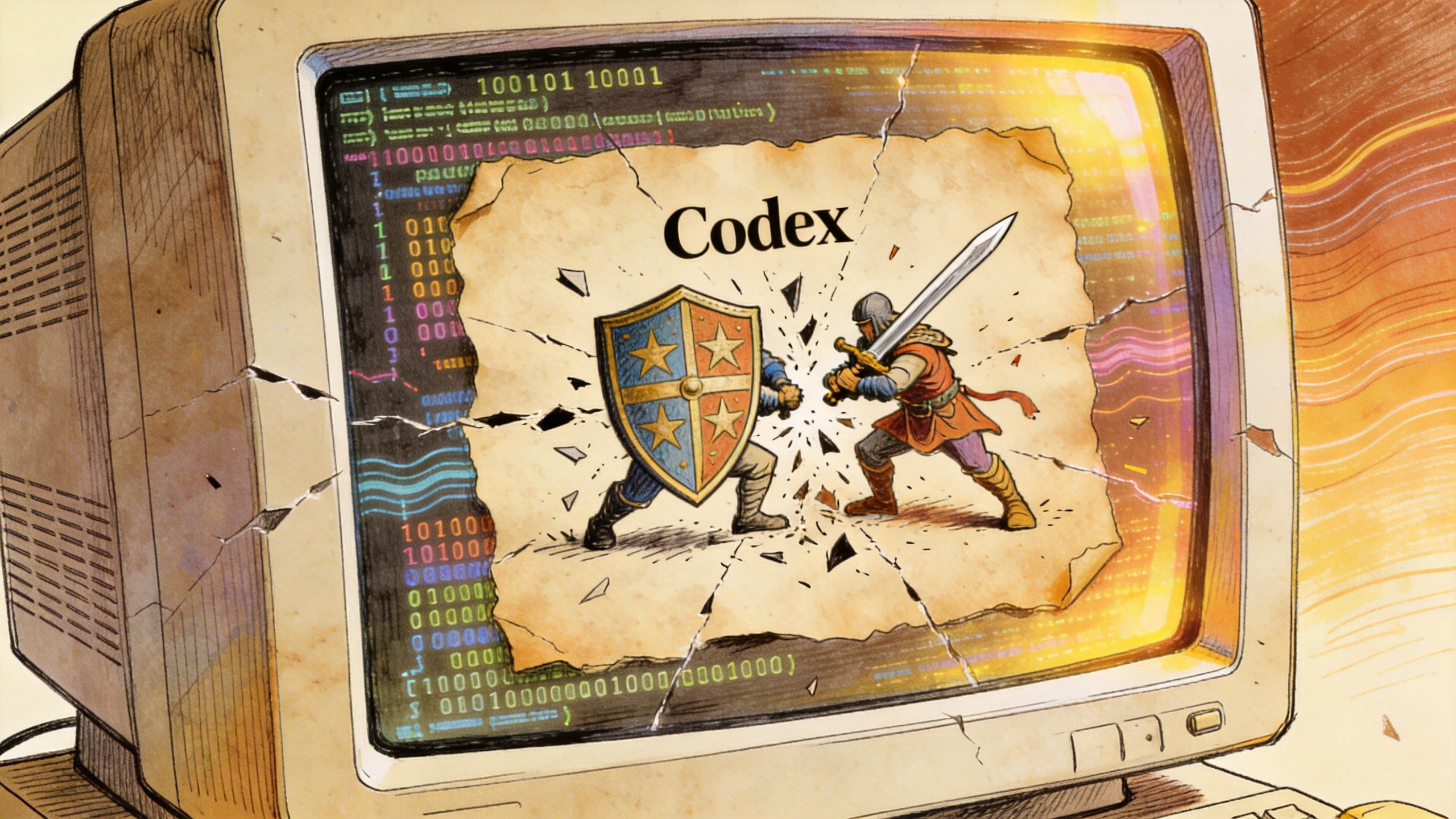

还有一个 OpenAI 想得到的东西,他们现在在各种新闻报道里没有写,但是是必然可以得到的是什么?就是在极限的这种 AI 编程之中,Codex 要去战胜 Claude Code。原来 OpenClawd 里边大量的代码是使用 Claude Code 去写的,但是现在它的最核心的创始人 Peter Steinberg 上 OpenAI 上班去了。那你说我不能继续使用 Claude Code 吗?不行,因为把 OpenAI 员工的账号都给封了,你不能用了。所以你想以后再继续去维护 OpenClawd 代码,你就只能用 Codex 了,你就不能再去用 Claude Code 了。以后其他人说我们想继续去在这个 OpenClawd 代码库上再去做各种各样的工作的话,对不起,你们也要用 Codex。在这一点上 Codex 又胜出一局。这就是 OpenAI 为什么不去直接收购 OpenClawd,以及 OpenAI 从这一次交易里头到底能够得到什么。

Peter Stinebrg 加入了 OpenAI,也算是尘埃落定了。他最后没有选择 Meta,而是加入了 OpenAI。这是一种更先进的开源协作方式,更有利于不同的公司之间,甚至是不同的地缘政治与法律架构之间,在统一的标准下进行协作,推进技术和推进技术的发展。

OpenAI 这一次肯定是赚大了,花了很少的钱就得到了未来的一个制定标准的机会。但是这一次交易的结果还是需要时间检验的。这种开源策略很难在短时间内看到成效。

好,这就是咱们今天讲的故事。不要再出去说 OpenAI 收购了 OpenClawd,OpenClawd 变成 CloseClawd 了,这个属于外行说的话,开源圈里内行会告诉你事不是这样的。

这个故事今天就讲到这里,感谢大家收听,请帮忙点赞、点小铃铛,参加 DISCORD 讨论群,也欢迎有兴趣有能力的朋友加入我们的付费频道。再见。

Prompt:in the style of Moebius (Jean Giraud), Franco-Belgian ligne claire illustration, hand-drawn ink linework with watercolor gouache textures, ultra-maximalist interior storytelling, an unoccupied high-rise family computer studio in Beijing’s bustling metropolis, modern Chinese home aesthetics with wood lattice shelving, ink-scroll accents, porcelain decor, dual-monitor desk setup, gaming console dock, retro game devices, hi-fi speakers, mechanical keyboard, headphones, layered cables and gadgets, Lunar New Year decorations in every corner with red lanterns spring couplets paper-cuts Chinese knots and festive ornaments, floor-to-ceiling window with glowing city skyline, 24mm wide environmental interior shot, eye-level, dense yet readable composition, warm tungsten ambient light mixed with subtle RGB tech glow, cozy lived-in atmosphere with strong futuristic vibe –no people, person, human, face, body, text, watermark, logo, sterile showroom, lowres blur, photoreal CGI texture –ar 16:9 –stylize 180 –chaos 8 –v 7.0 –p lh4so59

本文永久链接 – https://tonybai.com/2026/02/15/go-core-team-rejects-ai-authorship

大家好,我是Tony Bai。

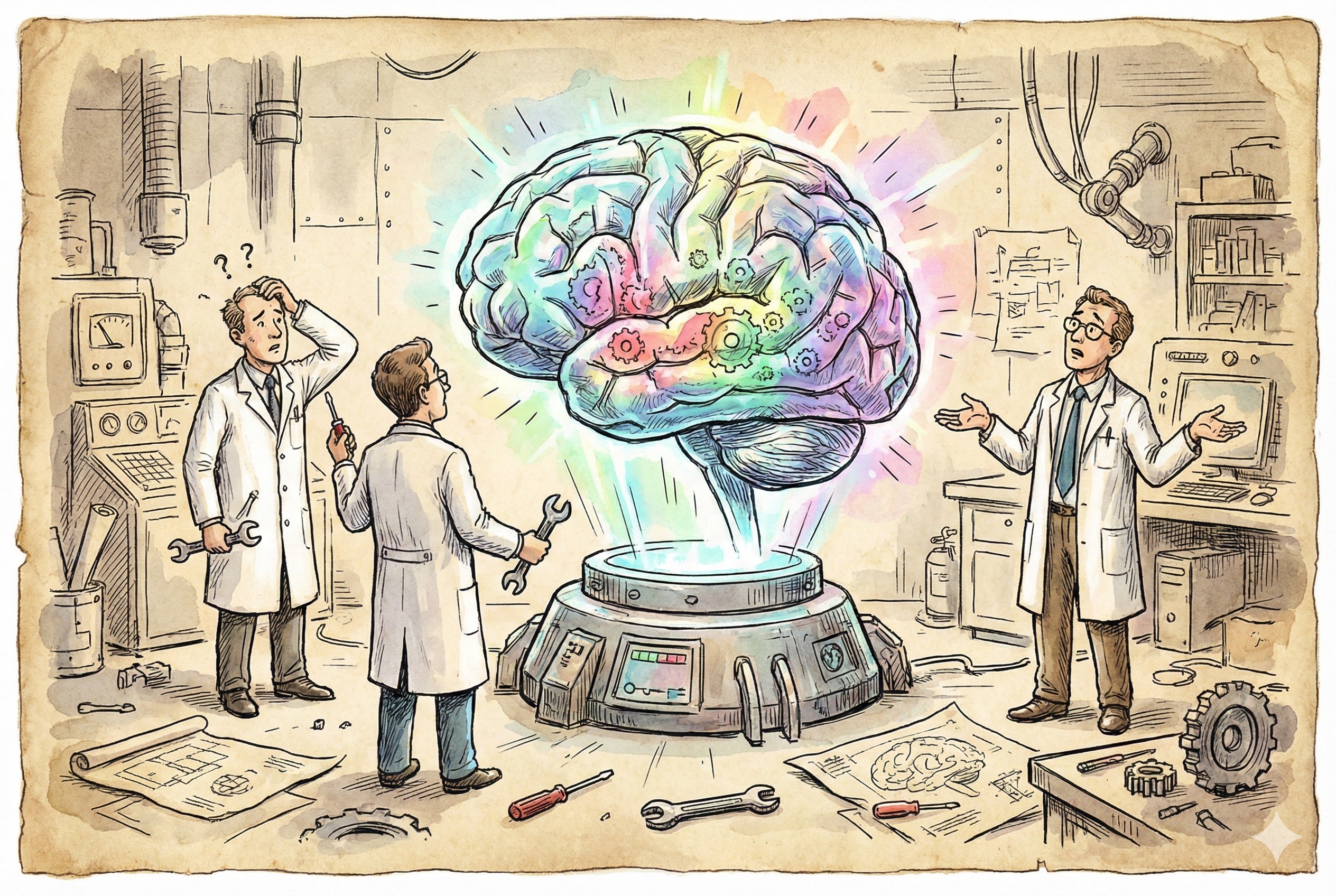

在生成式 AI 狂飙突进的 2026 年,编程似乎变得前所未有的容易。Claude Code、Gemini Cli、Codex等 已经成为开发者的标配。然而,技术便利的背后,模糊的责任边界正在侵蚀软件工程的根基。

近日,在 Go 语言这个以“简单、可靠、高效”著称的开源圣殿里,核心团队被迫画下了一道红线。

起因是一个特殊的 CL(Change List 741504),提交者在描述中赫然写道:“Co-Authored-By: Claude Opus 4.5 noreply@anthropic.com”。这行看似“诚实”的署名,瞬间触动了 Go 语言之父 Rob Pike、Ian Lance Taylor 以及 Russ Cox 等大佬的神经。

这不仅仅是一个关于署名权的争论,这是整个开源世界在 AI 时代必须面对的“立宪时刻”:我们该如何划定人类与 AI 在代码创作中的界限?

本文将深度复盘这场发生在 Go 核心圈的讨论,并解读 Russ Cox 最终定调背后的深意。

事情的起因简单而诡异。开发者 John S 提交了一个修复 cgo 文档的 CL,并在描述中注明了 Claude Opus 4.5 是共同作者。

Ian Lance Taylor(Go 泛型的主要设计者之一)率先发难,敏锐地指出了这行字背后潜藏的两个致命法律风险:

Robert Griesemer(Go 创始三巨头之一)则从工程角度表达了担忧:

“如果代码描述是 AI 写的,我们可以删掉那行字。但如果是 Claude 写的代码,我们就有大麻烦了。”

Griesemer 的担忧直指 AIGC 的核心痛点:幻觉与平庸。他将 AI 现在的状态比作拼写检查器——它可以修正拼写,但它真的懂“修辞”吗?更重要的是,它懂“正确性”吗?

而 Rob Pike(Go 语言之父)的回复依然是那样简洁有力,且带有强烈的不容置疑:

“这是一个非常危险的滑坡(slippery slope)。我建议第一步简单点:说不(NO)。”

Rob Pike 意识到,一旦模糊了这条线,开源社区将面临“人的缺位”。谁来维护这些代码?谁来为 Bug 负责?是一个在那一刻运行的概率模型,还是那个按下 Enter 键的人?

在长达数日的讨论后,Russ Cox (rsc) 发表了一篇极具分量的总结性邮件,在这封邮件中,他代表 Go 核心团队给出了AI 时代Go项目的AI 政策宣示,并说明了划定这条红线的工程学必要性。

互联网上有一条著名的“布兰多里尼定律”(Brandolini’s law):反驳胡扯所需要的能量,比产生胡扯所需要的能量大一个数量级。

在编程领域,AI 正在制造同样的困境。Russ 指出:

“AI 工具诱使许多人陷入一种虚假的信念……人们以前所未有的速度生成大量的代码……就像看着会跳舞的大象,虽然令人惊叹,但通常既慢又笨拙,且难以维护。”

写代码变容易了,但代码审查(Code Review)变难了。

Go 的设计哲学是“代码被阅读的次数远多于被编写的次数”。而 AIGC 工具颠倒了这一关系。AI 可以在几秒钟内生成数百行看似完美、实则包含微妙 Bug 的代码。如果不划定红线,Go 项目将被机器生成的、无人真正理解的代码淹没。

工具的便捷性往往会让人关闭大脑。当 Claude Code 或 Copilot 给出一段代码时,开发者最自然的反应是“它看起来能跑”,然后直接提交。

这种“关闭大脑(Turn off your brain)”的行为,是工程质量的大敌。

Go 团队划定红线的目的,是强迫开发者回归理性:你必须理解你提交的每一行代码。如果连提交者自己都无法解释代码为什么这么写,那么这段代码就是项目的负资产。

除了工程哲学,Russ Cox 明确指出,法律风险是划定这条红线的硬性约束。

根据美国版权局(US Copyright Office)的指导意见,非人类创作的作品不受版权法保护。

这意味着,如果一段代码被认定为完全由 AI 生成,它可能直接进入公有领域(Public Domain),或者其版权归属处于薛定谔状态。

Go 项目要求所有贡献者签署 CLA(贡献者许可协议)。CLA 的核心前提是:贡献者拥有其提交代码的版权,并将其授权给 Google/Go 项目。

如果允许 AI 署名:

这是 Robert Engels 在讨论中反复强调的点:AI 是在什么数据上训练的?

如果 Gemini 或 Claude 记住了某段 GPL 或 AGPL 协议的代码,并在微调后将其“吐”了出来,而这段代码被合入了使用 BSD 协议的 Go 项目中,这就构成了严重的侵权风险。

作为顶级开源项目,Go 团队必须规避任何潜在的法律诉讼。“拒绝 AI 署名”是法律上的防火墙。

基于上述工程和法律的双重考量,Russ Cox 代表 Go 团队划定了极其清晰的政策红线。这份裁决不仅适用于 Go,也值得所有技术团队参考。

Go 项目不接受任何由 AI 模型作为共同作者的提交。

这不仅在法律上是无稽之谈(AI 没有法律主体资格),在工程责任上也是一种逃避。

提交者必须对代码负全责。

无论你用了什么工具——是 Vim、IDE 的自动补全,还是 Claude Code——当你提交代码时,你就是在声明:“这是我的作品,我理解它,我为它负责。”

Russ Cox 提出了一个极其严苛的标准:

“如果你用 AI 生成了代码,你必须像审查同事的代码一样,甚至更加严格地审查它。如果你不能自信地声称‘这是我写的’(即便你用了工具),那么就不要提交它。”

Go 的贡献者列表(AUTHORS 文件)只包含人类。

开源是人类智慧的结晶。AI 只是工具,是像编译器、Linter 一样的高级工具,但工具不能成为作者。

Go 团队划定的这条红线,实际上厘清了 AI 辅助编程(AI-Assisted)与 AI 生成编程(AI-Generated)的本质区别。

在红线之内,开发者的核心竞争力正在发生转移。

正如 Russ 所言:“审查代码比编写代码更难。”未来的高级工程师,本质上都是高级 Code Reviewer。

LLM 的训练基于海量的互联网数据,这意味着它生成的代码往往是“平均水平”的。但 Go 标准库追求的是“极致的工程化”。

如果过度依赖 AI,代码库的质量将不可避免地滑向平庸。这条红线,是为了保护代码库中人类工程师的审美和坚持。

2026 年初的这次讨论,为开源社区树立了一块重要的界碑。

面对 AI 的诱惑,Go 团队选择了一条更为艰难、保守,但也更为负责任的道路。他们划定红线,拒绝了“看起来很快”的捷径,坚守了“简单、可维护、人类可理解”的初心。

这条红线告诉我们:AI 是你的副驾驶,但永远不要让它接管方向盘。因为当车毁人亡时,坐牢的永远是你,而不是那个大语言模型。

资料链接:

你愿意为 AI 代码负全责吗?

Go 团队要求:如果你不能自信地声称“这是我写的”,就不要提交。在你的日常开发中,你会对 AI 生成的代码进行逐行 Review 吗?你认为“不准 AI 署名”是开源精神的回归,还是对技术进步的保守?

欢迎在评论区分享你的“红线”!

还在为“复制粘贴喂AI”而烦恼?我的新专栏 《AI原生开发工作流实战》 将带你:

扫描下方二维码,开启你的AI原生开发之旅。

你的Go技能,是否也卡在了“熟练”到“精通”的瓶颈期?

继《Go语言第一课》后,我的《Go语言进阶课》终于在极客时间与大家见面了!

我的全新极客时间专栏 《Tony Bai·Go语言进阶课》就是为这样的你量身打造!30+讲硬核内容,带你夯实语法认知,提升设计思维,锻造工程实践能力,更有实战项目串讲。

目标只有一个:助你完成从“Go熟练工”到“Go专家”的蜕变! 现在就加入,让你的Go技能再上一个新台阶!

商务合作方式:撰稿、出书、培训、在线课程、合伙创业、咨询、广告合作。如有需求,请扫描下方公众号二维码,与我私信联系。

© 2026, bigwhite. 版权所有.

The era of the Small Giant (Interview)

My friends, welcome back. This is the Changelog. We feature the hackers, the leaders, and those living in this crazy world we’re in. Can you believe it? Yeah, Damian Tanner is back on the show after 17 years. Wow!

Okay, some backstory. Damian Tanner, founder of Pusher, now building Layer Code, returned to the podcast technically officially for the first time, but he sponsored the show. He was one of our very first sponsors of this podcast 17 years ago-almost, I want to say, I’m estimating, but it’s pretty close to that. I think that’s so cool. So he’s back officially talking about the seismic shift happening right now in software development. I know you’re feeling it, I’m feeling it, everyone’s feeling it.

So from first-time sponsor of the podcast to a frontline builder in the AI agent era, Damian shares raw insights on:

A massive thank you to our friends, our partners, our sponsor-yes, talking about fly.io, the home of changelog.com. Love Fly and you should too. Launch a sprite, launch a fly machine, launch an app, launch whatever on Fly we do-you should too. Learn more at fly.io.

Okay, let’s do this.

Well friends, I’m here again with a good friend of mine, Cal Galbraith, co-founder and CEO of Depot.dev. Slow builds suck. Depot knows it. Cal, tell me, how do you go about making builds faster? What’s the secret?

When it comes to optimizing build times to drive build times to zero, you really have to take a step back and think about the core components that make up a build.

All of that comes into play when you’re talking about reducing build time.

Some of the things that we do at Depot:

The other part of build performance is the stuff that’s not the tech side of it-it’s the observability side of it. You can’t actually make a build faster if you don’t know where it should be faster. We look for patterns and commonalities across customers and that’s what drives our product roadmap. This is the next thing we’ll start optimizing for.

So when you build with Depot, you’re getting this: you’re getting the essential goodness of relentless pursuit of very, very fast builds, near zero speed builds. And that’s cool. Kyle and his team are relentless on this pursuit-you should use them: depot.dev. Free to start, check it out. One-liner change in your GitHub Actions:

depot.dev

Well friends, I’m here with a longtime friend, first-time sponsor of this podcast, Damian Tanner. Damian, it’s been a journey, man. This is the 18th year of producing The Changelog.

As you know, when Netherlands and I started this show back in 2009, I corrected myself recently. I thought it was November 19th, but it was actually November 9th-the very first birthday of The Changelog.

November 9th, 2009.

Back then, you ran Pusher, Pusher app, and that’s kind of when sponsoring a podcast was almost like charity, right? You didn’t get a ton of value because there wasn’t a huge audience, but you wanted to support the makers of the podcast. And we were learning, and obviously open source was moving fast and we were trying to keep up, and GitHub was one year old. I mean, this is a different world. But I do want to start off by saying-you were our first sponsor of this podcast. I appreciate that, man. Welcome to the show.

Kind of you.

You know, reflecting on Pusher, we kind of just ended up creating a lot of great community, especially around London and also around the world with Pusher.

Yeah, and I really love everything we did. We started an event series, and in fact, another kind of like coming back around-Alex Booker, who works at Mastra, is coming to speak at the AI Engineer London meetup branch that I run. He started and ran the Pusher Sessions, which became… Really well-known talk series in London.

Okay, were you at the most recent AIE conference? I was in SF. Yeah.

Okay, what was that like? I kind of jump in the shark a little bit because I kind of want to talk-I want to juxtapose like Pusher then time frame developer to like now, which is drastically different. So don’t-let’s not go too far there. But how was AIE in SF recently?

It was a good experience. Always a good injection of energy going to SF. I live just outside London, but the venue was quite big and it didn’t have that like together feel as much as some conferences. But it was the first time that I sat in a huge conference hall, and I think it was like Windsurf or something. Chatting, I was like,

“This is really like-we’re all miners at a conference about mining automation, and we’re like we’re engineers, so we’re super excited about it. But right, it’s kind of weird like it’s gonna change all of our jobs.”

Alright, it’s like “I’m working right now to change everything I’m doing tomorrow.” I mean, that’s kind of how I viewed it.

I was watching a lot of the playback. I wasn’t there personally this time around, but I do want to make it the next time around. But, you know, just the Sean Swicks wing, the content coming out of there-everybody’s speaking. I know a lot of great people are there, obviously pushing the boundaries of what’s next for us, the frontier so to speak.

But a lot of the content-I mean almost all the content-was like top, top notch, and I feel like I was just watching the tip of humanity, right? Like just experiencing what’s to come.

Because in tech, you know this as being a veteran in tech, we shape-we’re shaping the future of humanity in a lot of cases because technology drives that. Technology is a major driver of everything, and here we are at the precipice of the next, the next, next thing. And it’s just wild to see what people are doing with it, how it’s changing everything.

Everything, I feel like, is like a flip. It’s a complete-not even a one-it’s like a 720. You know what I mean? Like it’s three spins or four spins. It’s not just one spin around to change things. I feel like it’s a dramatic forever-don’t even know how it’s going to change things, changing things, thing.

And, you know, bringing it back to the Pusher days, it’s the vibe we had then. You know, there was this period around just before Pusher and the first half of Pusher I felt like where we were going through this-maybe it’s called like the Web 2-but there was a lot of great software being built and a lot of, you know, the community.

And I think the craft that went into, especially like the Rails community, and we-we’re just-we’re able to build incredible web-based software.

And then, you know, we’ve gone through like the commercialization, industrialization of SaaS.

And what gets me really excited is now when we’re, you know, we run this AI Engineer London branch and incredible communities come together and it’s got that energy again. And I guess the energy is-it’s very exciting. There’s new stuff, everyone can play a part in it, and we’re also just all completely working it out.

And it’s like sure, you’ve got the, you know, folks on the main stage of the conference and then you’ve got-we’ll chat about it later maybe like Jeffrey Huntley posting his meme Ralph Wiggum blog post-it’s like that the crazy ideas and innovation is kind of coming from anywhere, which is brilliant.

Yeah, there’s some satire happened too. I think there was a convo, a talk that was quite comedic. I can’t remember who the talk was from but I was really just enjoying the fun nature of what’s happening and having fun with it-not just being completely serious all the time with it.

For those who are uninitiated-and I kind of am to some degree because it’s been a long time-remind me and our listeners what exactly was Pusher? And I suppose the tail end of that, how are things different today than they were then?

Pusher was basically a WebSockets push API so you could push anything to your web app in real time. So just things like notifications into your application.

We ended up having a bunch of customers maybe in:

In the early days, at one point Uber was using Pusher to update the cars in real time, and that was before they built their own infrastructure.

It was funny. I remember the stand-up because we ran a consultancy where we were chatting about the WebSockets in browsers and we’re like,

“Oh this is cool, how can we use this?”

And the problem is, you know, we were all building Rails apps, so like:

Okay, we need like a separate thing which manages all the WebSocket connections to the client, and then we can just post an API request and say, 'Push this message to all the clients.'

It was a simple idea and we took it seriously. and built it into a pretty formidable dev tool used by millions of developers and still use a lot today.

We eventually exited the company to MessageBird, who are a kind of European Twilio competitor. Actually, at one point, we nearly sold the company to Twilio-that would have been a very different timeline.

According to my notes, you raised 9.2 million dollars, which is a lot of money back then. I mean, it’s a lot of money now, but like that was tremendous. That was probably 2010, right? 2011 maybe. The bulk of that we raised later on from BoltOn. The first round was maybe half a million, very, very small.

It started out as an agency, so we built the first version in the agency just for fun, I suppose, and maybe some tears on your part.

Juxtapose the timelines: you got an acquisition ultimately but you mentioned Twilio was an opportunity. How would that have been different? If you can branch the timeline?

“It would have been a great experience to work with the team at Twilio. They’re incredible people. I’ve worked at Twilio and moved through Twilio.”

I haven’t calculated it, but we didn’t sell because the offer wasn’t good enough in our minds. It was a bit of a lowball and it was all stock. In hindsight, the stock hasn’t gone very well, so it turns out it was a good financial decision. But, yeah, would have loved that experience, I think.

Twilio became the kind of OG for dev rel and dev community. How we got to know them is we did a lot of combined events with them and hackathons. That was a fun time.

They were like the origination. Daniel Moral was very much quintessential in that process of a whole new way to market developers. I think that might have been the beginning of what we call dev rel today. Would you agree with that?

I mean, it’s like the - I mean, if there was a seed, that was one of many probably, but I think one of the earliest seeds to plant of what dev rel is today.

Crazy times, man.

So what do you… how do you think about those times of Pusher and the web, building APIs and SaaS services, etc., and pushing messages to Rails apps? How are today’s days different for you?

It’s exciting because the web and software is just completely changing again. I feel like we had that with Web 2, right? That was the birth of software on the internet, hosted software on the internet. It’s such an embedded thing in our culture and our business as developers. A lot of us work on that kind of software but most businesses run on SaaS software now.

I have to remind myself there was a time before SaaS, and therefore there can be a time after SaaS. There can be a thing that comes after SaaS. It’s not a given that SaaS sticks around.

I mean, like any technology, we tend to kind of go in layers. For example:

These changes, in the aggregate, take a lot of time.

The thing that can shift more quickly is the direction things are going. Really, in the last few months, I think I’ve been more and more convinced by my own experiences and things I’ve seen playing with stuff that:

it’s entirely possible - and probably pretty likely - that there is a post-SaaS.

I don’t know if everyone realizes it or is with that intention but all of us playing with agents and LLMs - whether it’s to build software or to do things - we are doing that probably instead of building a SaaS or we’re using it to build a SaaS. It’s already playing out amongst the developers.

It’s an interesting thought experiment to think about:

I’m curious because I hold that opinion to some degree. I think there’s what SaaS stays and what SaaS goes if it dies.

You said in the pre-column burst the bubble a little bit here. You did say, and I quote:

“All SaaS is dead.”

Can you explain, in your own words, all SaaS is dead?

I think I should probably go through my journey to here to kind of illustrate it. But give us the TL;DR first, though. Give us the clip and then go into the journey.

Okay, okay.

The TL;DR is SaaS.

So there’s a few layers:

I think most developers are very familiar with the building of software is changing now. But the operating software, the operating of work, the doing of work in all industries and all knowledge work, can change.

Like, we’ve changed software. SaaS is made for humans, slow humans to use the SaaS UI. Made for a puny human to go in, understand, work out this complex thing, and it has to be in a nice UI. If it’s not a human actually doing the work that they do in the SaaS, if it’s an AI doing that work, why is there a UI? Why is there a SaaS tool? The AI doesn’t need a SaaS tool to get the work done. It might need a little UI for you to tell you what it’s done, but the whole idea of humans using software, I think, is going to change.

Yeah, well, you’ve been steeped in APIs and SaaS for a while, so I hold that opinion that you have. Then I agree that if the SaaS exists for UI for humans, that’s definitely changing, so I agree with that.

What I’m not sure of, and I’m still questioning myself, is like what is the true solution here?

There are SaaS services that can simply be an API. You built them; I don’t really need the web UI. Actually, I kind of just prefer the CLI. I prefer just JSON for my agents. I kind of prefer Markdown for me because I’m the human. I want those good pros; I want all of it local so my agents can mine it and create sentiment analysis, all this fun stuff. You could do that with DuckDB and Parquet-just super, super fast stuff across embeddings and vector databases like pgvector.

All those fun things you could do on your own data.

But that’s where I stop. I do agree that the web UI will go away or some version of it. Maybe it’s just a dashboard for those who don’t want to play in the dev world with CLIs and APIs and MCP and whatnot.

But I feel like SaaS shifts. My take is:

CLI is the new app

That’s my take: SaaS will shift, but I think it will shift into CLI for a human to instruct an agent and an agent to do, and it’s largely based on:

Yeah, I guess we should probably kind of tease apart SaaS the business and SaaS the software.

Okay, because, yeah, I agree that the interface is changing-the interface that we use, whether it’s visually a CLI or a chat conversation or something-but the way we communicate with the software is changing. It’s a much more natural language thing. We don’t have to dig in the UI to find the thing to click.

But also so much of the software we use that we call SaaS, that we access remotely, if you can just magic that SaaS locally or within your company, right, there’s no need to access that SaaS anymore. You just have that functionality; you just ask for that functionality and it’s being built.

But yeah, SaaS, the business-I guess this is the challenge for companies today-is they’re going to have to, if they want to stay in business, shift somehow because, yeah, I mean, there’s still got to be some… some harness-harness is the wrong word because you use that in coding agents-but like you should do some infrastructure, some cloud, some coordination, authentication, data storage-there’s still a lot to do.

I think there’s going to be some great opportunities for companies to do that.

And maybe a CRM, you know, Salesforce or something, manages to say, hey:

“We are the place to run your sales agents, run your magically instantiated CRM code that you want just for your business.”

Maybe there’ll be some winners there.

But the idea that I think is going to change SaaS’s business-the SaaS software-is the idea that like everyone has to go and buy the same version of some software, which they remotely access and can’t really change.

Okay, I’m feeling that for sure. Take us back into the journey because I feel like I cut you off, and I don’t want to disappoint you-but not letting you go and give the context, the keyword for most people these days, the context for that blanket statement that:

“SaaS is dead or dying.”

Okay, I’ll give you a bit of the story.

So my company, Layer Code, we, I’ll just give you a little short on that: we provide a voice agents platform so anyone can add voice to their agent. It’s a developer tool, developer API platform for that.

We’re now ramping up our sales and marketing, and we kind of started doing it the normal ways. We kind of got a CRM; we got some marketing tools, and I was just finding-we went through a CRM or two-and I was just finding them like these are the new CRMs that are supposed to be good, but they were just really, really slow.

I just couldn’t work out how to do stuff. It was like I had to go and set up a workflow, and it felt like I needed training to use this CRM tool. And I’d been having a lot of fun with Claude Code and Codex, kind of both-both flipping between them, kind of getting a feel for them.

So I just said, “Build me”. I just voice-dictated, you know, a brain dump for like 10-15 minutes:

And also, it wasn’t just like a boring CRM. It was like,

“I need you to make a CRM that kind of engages me as a developer who doesn’t wake up and go, ‘Let’s do sales.’ Gamify it for me.”

Then here are the ways I want you to do that. And it just did it. That was my kind of like coding agents moment.

I think you have that moment when you do a new project where you use an LLM and a completely greenfield project. There’s no kind of existing code it’s going to mess up or get wrong, and the project’s not too big. It just built the whole freaking CRM, and it was really good.

It was a good CRM, and it worked really well. So that was like my kind of level one awakening, which was this idea that you can just have the SaaS you want instantly. It suddenly felt true because I had done it.

I have cancelled the old CRM system now, and there’s a bunch of other tools I plan to cancel, not because they’re all crap, but because it’s harder to use them than it is to just say what I want.

Because I kind of have to learn how to use those tools, whereas I can just say,

“Make me the thing. Make me the website I want” instead of using a website builder tool, or “Make me the CRM that I want to use.”

Then there’s this different cycle, this loop of improvement where it’s not a once-off. It’s not build and then use the software.

It’s like as you’re using the software, you can improve the software at any time.

We’ve still got to work out how this works:

Just within our team of three doing this stuff in the company, it was like:

“Oh, you’re annoyed with this part of the software? Just change it. Just change it.”

Yeah, when it annoys you at the exact point in time, and then continue with the work.

I assume you’re probably still doing something like a GitHub or some sort of primary git repository as a hub for your work, and you probably have pull requests or merge requests.

So even if your teammate is frustrated, improves the software, pushes it back, you’re still using the same software, and you’re still using the same traditional developer tooling such as:

- pull requests

- code review

- merging

Yeah, that’s going to have to change as well.

Okay, take me there.

I woke up this morning with that feeling, “Okay, that’s changing too.”

How’s it changing with the CRM and with something we’ve been building this week?

There were new pieces of software. There weren’t existing codebases. I didn’t have any prior ideas, tastes, or requirements about what the code should look like.

I think this is the thing that slows people down with coding agents. When you use it on an existing repo, LLMs have bad taste - they just give you kind of the most common denominator, kind of bad taste version of anything, whether it’s writing a blog post or coding.

So when you use it on an existing project and then you review the code, you just find all these things wrong with it. Like, right now, they love doing all this really defensive try-catch in JavaScript, or really verbose stuff, or writing a utility function that exists in a library already.

But when you start on a new project and you just use YOLO mode and you’re just building static for yourself as well, right? And it works-where’s the code? Why review the code?

I think we’re only in this temporary weird phase where we’re trying to jam these existing software processes that ensure we deliver:

I think it’s hard. We can’t throw that out-we’ve got SOC2 too, we can’t throw those out the window for everything that exists today.

But for everything new that you’re building, you’ve got an opportunity to kind of pull apart, question, and collapse down all these processes we’ve built for ourselves - processes that were built to ensure humans don’t make mistakes, help humans collaborate, and manage change in the repository and everything.

If humans aren’t writing the code anymore, we need to question these things.

Are you moving into the land of agent-first? Then it sounds like that’s where you’re going.

I feel like I’m being pulled into it by… yeah, I’m slight… I’m kind of like there. There is a tide. I can’t resist. I’m falling in the hole and we’re kind of like every-we’re dipping our toes in, right? Trying to try out an LLM, try out CursorTab, and then we’re kind of in there and we’re swimming, trying to swim the way we normally swim, the way we want to go. And suddenly I’ve just gone, just relax and just let the tide, let the river take you. Just let it go, man. Just let it go.

It’s scary. It feels kind of terrifying.

They’re gonna-and I don’t have the answers to how we do code review. But, you know, if you look at a lot of teams talking about using AI coding agents in the resisting project, everyone’s big problem now is code reviews. Why? Because everyone using coding agents is producing so many PRs; it’s piling up in this review process that has to be done. The new teams that don’t have that process in place are going multiple times faster right now.

This is the year we almost break the database. Let me explain.

Where do agents actually store their stuff? They’ve got:

And they’re hammering the database at speeds that humans just never have before. Most teams are duct-taping together a Postgres instance, a vector database, maybe Elasticsearch for search. It’s a mess.

Well, our friends at Tiger Data looked at this and said, “What if the database just understood agents?” That’s Agentic Postgres: it’s Postgres built specifically for AI agents, and it combines three things that usually require three separate systems:

The MCP integration is the clever bit. Your agents can actually talk directly to the database. They can:

without you writing fragile glue code. The database essentially becomes a tool your agent can wield safely.

Then there’s hybrid search. Tiger Data merges vector similarity search with good old keyword search into a SQL query - no separate vector database, no Elasticsearch cluster. Semantic and keyword search in one transaction, one engine.

Okay, my favorite feature: the forks. Agents can spawn sub-second zero-copy database clones for isolated testing.

This is not a database they can destroy. It’s a fork, a copy off of your main production database if you so choose.

We’re talking a one terabyte database fork in under one second. Your agent can run destructive experiments in a sandbox without touching production, and you only pay for the data that actually changes. That’s how copy-on-write works.

It works.

All your agent data-vectors, relational tables, time series metrics, conversational history-lives in one queryable engine. It’s the elegant simplification that makes you wonder why we’ve been doing it the hard way for so long.

So if you’re building with AI agents and you’re tired of managing a zoo of data systems, check out our friends at Tiger Data at tigerdata.com. They’ve got a free trial and a CLI with an MCP server you can download to start experimenting right now. Again, tigerdata.com.

What is replacing code review if there’s no code review? Is it just nothing?

I think as developers, we need to think more like-we need to put ourselves in the shoes of PMs, designers, managers, because they don’t look at the code right? They say “We need this functionality.”

We build it, we do our code reviews, we ensure it works, and the PM or whoever goes,

“Oh yeah, great, I’ve used it, meets the requirements. It’s great.”

They’re comfortable not looking at the code. They’re moving along, closing the deal, with the customer, integrating. They’re like,

“I am confident that the intelligent being that created this code did a good job.”

Now, I think the only reason we’re kind of stuck in this old process is because many of them are set in stone, but also because LLMs aren’t quite smart enough yet-they still make stupid mistakes. You still need a human in the loop (and on the loop).

They’re still a bit dumb. They get done with silly things and they do stuff. They’ll go the wrong direction for a while and I’m like,

“No, hang on a second, that’s a great thought here but let’s get back on track. This is the problem we’re solving and you’ve side-quested us.”

It’s a fun side quest if that was the point, but that’s not the point.

This is going to change, right?

One of the hard things is trying to put ourselves in the mind of what it’s going to be like a year from now. I think I’ve only been, you know, after being able to play with LLMs for several years, it feels like I can feel the velocity of it now. Because I’ve felt Chat GPT-3, 4, 5, Claude, Code Codex, and now I can say,

“Oh, okay, that’s what it feels like for it to get better.”

And it’s gonna keep. Getting better for a few more years, so it’s kind of like self-driving cars, right? They’re like not very useful while they’re worse than humans, but suddenly when they’re safer than a human, “why would you have a human?” Yeah, and I think it’s the same with coding. Like all this process is to stop humans making mistakes. We make mistakes; our mistakes are not special, better mistakes. They’re still like we stuff in code we call security incidents.

So I think as soon as the LLMs are twice as good, five times as good, ten times better at outputting good code that doesn’t cause these issues, we’re gonna start to let go of this concern, like these things right, we’re gonna start to trust them more.

Something I leaned on recently, and it was really with Opus 4.5, I feel like that’s when things sort of changed because I’m with you on the trend from ChatGPT or GPT-3 on to now and feeling the incremental change. I feel like Opus 4.5 really changed things.

And I think I heard it in an AIE talk or at least in the intention of it. If it wasn’t verbatim, it was “trust the model, just trust the model.” As a matter of fact, I think it was one of the guys-they were building an agent and in the pro it was maybe his agent layer, layer agent or something like that, maybe borrowed something from your name layer code. I have to look it up, I’ll get the talk, I’ll put it in the show notes.

But I think it was that talk and I was like, okay the next time I play I’m gonna trust the model. And I will sometimes like stop it from doing something because I think I’m trying to direct it a certain direction.

And now I’ve been like, wait hang on a second, this code’s free basically, it’s just going to generate anyways. Let’s see what it does. Worst case, I’m like, you know, roll it back or worst case is just generate better, you know what I mean, like ultra think, right? You know what’s the worst that could happen? Because it’s going faster than that can anyways.

So let’s see. Even if it’s a mistake, let’s see the mistake, let’s learn from the mistake because that’s how we learn even as humans. I’m sure Ellen’s the same.

And so I’ve come back to this philosophy or this thought, almost to the way you describe it like falling into this hole, slipping in via gravity. Not excited at first but then kind of like excited because it’s good in there. Let’s just go, just trust the model man, just trust the model!

It can surprise you, and I think that still gives me that dopamine hit that I would have coding, right? When I was coding manually, you’d get a function right and you’d be like, “ah it works.”

And now it’s like you’ve got like the whole application right and you’re like, “ah, I just did a problem, the whole thing works.”

That’s really exciting. And yeah, it’s fun right now. And I mean it’s gonna keep changing. This is just a bit of a temporary phase here and now. But I think for many of us building software we love the craft of it, which you can still do, but also the making-a-thing is also one of the exciting bits of it.

And the world is full of software still. Like you think about so many interactions you have with government services or whatever-not saying that they’re going to adopt coding agents particularly quickly, but there is a lot of bad software in the world.

And software has been expensive to build and that’s because it’s been in high demand. So I don’t think we’re going to run out of stuff to build.

I think even if we get 10 times faster, 100 times faster there’s so much useful software and products and things and jobs to be done.

Close this loop for me then: you said SaaS is dead or dying (I’m paraphrasing because you didn’t say or dying, I’m just going to say or dying, I’ll add it to your thing).

How is it going to change then? If we’re making software there’s still tons of software to write but SaaS is dead, what exactly are we making then if it’s not SaaS?

I know that not all software is SaaS but you do build something, a platform, and people buy the platform. Is that SaaS? What changes? You mentioned interfaces, like where do you see this moving?

I think we’re moving. And so this is the next level, the next kind of revelation I had was I started using the CRM and I was like, this is cool, this is super fast, this is better than the other CRM, you know, and I can change it.

Cool, I’m doing some important sales work, I’m enriching leads.

And then I kind of woke up a few days later, I was like, “Why am I doing the work? What’s going on here?” I create an interface for me to use, right? Why can’t Claude Code just do the work that I need to do for me?

I know it’s not going to be with the same taste that I have, and I know it’s going to make mistakes, but I can have 10 of… Them do it at the same time and I it’s not a particularly fun idea, fully automated sales and what that means for the world in general. But it’s the particular vertical where I had this kind of “right, well the enriching certainly makes sense for the LLN to do.” The enriching is like come on, that’s I’m just the API, I’m copying things, and a lot of it is still so manual.

So the revelation was just waking up and then going, “Okay, Claude Code’s gonna do the work for me today,” like it does for software. It builds the software for me. I’m gonna give it a Chrome browser connection-that’s still an unsolved problem. There’s a lot of pain in LLMs chatting to the browser, but there are a few good ones. I’m gonna let it use my LinkedIn, I’m gonna let it use my X, and I’m gonna connect it to the APIs that I need that aren’t pieces of software but like data sources-right? And get enriched and search things.

And then I just started getting it to just do it, and it was really quite good. It was slow but really quite good. That was a kind of - that was the moment where we typed in

build this feature in cloud code

build this

but it was suddenly like this thing can just do anything a human can do on a computer. The only thing holding it back right now is the access to tools and good integrations with the interfaces-the old software it still needs to use to do what a human does.

Yeah, a bigger context window and it’d be great if it was faster, but I can run them in parallel so that the speed’s not a massive problem. In the space of a week, I built the CRM and then I got Claude code to just do the work. But I didn’t tell it to use the CRM; I just told it to use the database. I just ended up throwing away the CRM. Now we have this little Claude code harness that:

I’ve just got like a database viewer that the non-technical team used to kind of look at the leads and stuff like that. It’s just a kind of beekeeper kind of database viewer. And now Claude code is just doing the work.

We’ve only applied it there, but this is just like Claude code is this kind of little innovation in AI that can do work for a long time. We already know people use ChatGPT for all sorts of different things beyond coding, right? So suddenly I think these coding agents are a glimpse of all knowledge work being sped up or replaced. Administration work can be replaced with these things now.

Yeah, these non-technical folks, why not just invite them to the terminal and give them CLI outputs that they can easily run and just use the up arrow to repeat? Or just teach them certain things they maybe weren’t really comfortable with doing before. Now they’re also one step from being a developer or a builder because they’re already in the terminal. That’s where cloud’s at.

Yeah, I mean, that’s what we’ve done now. I’ve seen some unexpected kind of teething issues with that. I think the terminal feels a bit scary to non-technical people even if you explain how to use it. When they quit Claude code or something, they’re just kind of lost; they’re like “Oh my gosh, where did Claude go?”

Yeah, and I was onboarding one of our team members, like “Hey, open the terminal,” and then I’m like, okay, we got a cd. What if the terminal was just Claude code though? What if you built your own terminal that was just - yeah, that’s what I actually think-that specific UI, whether it’s terminal or web UI, it’s kind of neither here nor there, but there is magic in a thing that can access everything on your computer or a computer.

And they’re doing that, I think, with something called Co-work. Have you seen Co-work yet? I haven’t played with it enough to know what it can and can’t do. I think I unleashed it on a directory with some PDFs that I had collected that was around business structure. It was like an idea I had four months ago with just a different business structure that would just make more sense primarily around tax purposes.

I was like, “Hey, revisit this idea I haven’t touched in forever.” It was a directory, and I think it went and just did a bunch of stuff. But then it was coming up with ideas, and I was like, “Nah, those are not good ideas.”

So I don’t know if it’s less smart than Claude code in intent or whatever, but I think that’s what they’re trying to do with Co-work. You could just drop them into essentially a directory, which is what Claude code lives in-a directory of maybe files. That is an application or knows how to talk to the database as you said your CRM does, and they can just be in a cloud code instance just asking questions:

Yeah, I could use a skill if you want to go that route, or it can just be smart enough to be like,

“Well, I have a Neon database here, the Neon CTL CLI is installed, I’m just going to query it directly, maybe I’ll write some Python to make it faster, maybe I’ll store some of this stuff locally and I’ll do it all behind the scenes.”

But then it gives this non-technical person a list of leads. All they had to do is be like:

“Give me the leads, man.”

You mentioned enabling them as builders. I think it is a window into that because when they want something, they get curious. They’ll be like,

You’d be surprised how easy that is. Like, “help me make it easier” is one of those weird ones. Claude Code will also autocomplete and just let you tab and enter.

I’ve noticed those things have gotten more terse, like maybe the last one I did was super short. It was like:

“I like it, implement it” and that was the completion for them.

I was like,

“Okay, is that how easy it’s gotten now to just spit out a feature that we were just riffing on; you understand the bug we just got over, and now your response to me to tell you what to say - because you need me, the human, to get you back in the loop at least in today’s REPL - is ‘I like it, implemented’?”

I found myself just responding with the letter “y” and a lot of the time it just knows what to do. Even if it’s a bit ambiguous, you’re kind of like,

“You’ll work it out.”

So I think it’s very exciting that Anthropic released this co-work thing because they’ve obviously seen that inside Anthropic, all sorts of people are using Claude Code.

When we think about someone starting there for non-coding purposes, but stuff is done with code and CLI tools and some MCPs or whatever APIs, then the user says,

“Make me a UI to make this easier.”

For instance, I had to review a bunch of draft messages that I wrote and was like,

“This is kind of janky in the terminal, make me a UI to do the review.”

And I just did it.

I think this is exactly where software is changing because when the LLM is 10 times faster-I mean if you use the Grok with a Q endpoint-they’re insanely fast, it’s going to be fast, then if you can have any interface you want within a second,

Why have static interfaces?

Yeah, I’m camping out there with you.

What if everything was just in time? I think that interface-

What if I didn’t need a shirt with you because you’re my teammate, but what if you could do the same thing for you and it solves your problem and you’re in your own branch, and what you do in your branch is like Vegas and it stays there?

It doesn’t have to be said anywhere else, right? Like,

“Just leave it in Vegas.”

What if in your own branch, in your own little world as a Sales Development Representative (SDR) who’s trying to help the team and help the organization grow, and all they need is an interface, what if it was just in time for them only?

It didn’t matter if it was maintainable. It didn’t matter how good the code was. All that mattered was that it solved their problem, got the opportunity, and enabled them to do what they’ve got to do to do their job.

You just take that and multiply it or copy and paste it onto all the roles that make sense for that just-in-time world. It completely changes the idea of what software is.

It also completely changes how we interact with a computer, what a computer does, and what it is for.

I just love this notion that

Every user can change the computer, can change the software as they’re using it, as they like it.

I think that’s very exciting-it’s essentially everyone’s a developer.

Yeah, I mean, it’s the ultimate way to use a computer. All the gates are down. There’s no geeky pretty more.

If I want software the way I want software, so long as I have authentication and authorization, I got the keys to my kingdom. I want to make it my way.

And I think also the agents can preempt. I haven’t tried this yet, but I was thinking of giving it a little sales thing - we have a little prompt where it says,

Even if a web UI is going to be better for the user to do this review, just do it.

So instead of you asking it to do some work, it just comes. Back and be like “oh, what I’ve made you this UI where I’ve displayed it all for you. Have a look at it, let me know if you’re happy with it.” I mean, this is getting kind of wild-a bit of an idea-but it’s kind of how we can think about how we communicate with each other as humans, as employees. We have back-and-forth conversations. We have email, which is a bit more asynchronous.

You know, we put up a preview URL of something. I think all of those communication channels can be enabled in the agent you’re chatting to. I haven’t liked this kind of product companies sell-the initial messaging where people are sort of like digital employees. But something like that’s going to happen, and I don’t think it’s the exciting bit.

For me, the exciting bit is the human-computer interaction. It’s like, yeah, this is how it is-it’s quite exciting in the context of Layer code and why we love voice. Voice is this OG communication method, whereas humans-we started speaking before we were writing.

It’s a quite rich communication medium and a terrific way-if your agents can be really multi-medium, whether it’s:

There doesn’t have to be these strict modes or delineations between those things. Well, let’s go there-I didn’t take us there yet, but I do want to talk to you about what you’re doing with Layer code.

I obviously produce a podcast, so I’m kind of interested in speech-to-text to some degree because transcripts, right? Then you have the obvious version which is like you start out with speech and get something or even a voice prompt.

What exactly is Layer code? I suppose we’ve been 51 minutes deep on nerding out on AI essentially, and not at all on your startup and what you’re doing, which was sort of the impetus of even getting back in touch. I saw you had something new you were doing, and I’m like, well, I haven’t talked to Damian since he sponsored the show almost 17 years ago. It’s probably a good time to talk, right?

So there you go, that’s how it works out.

Has your excitement and your dopamine hits on the daily or even by minute by minute changed how you feel about what you’re building with Layer code, and what exactly are you trying to do with it?

Well, and we’ve talked a lot about the building of a company and the building of software now. I think founders today have that as important as the thing they’re building because if you just head into your company and operate it like you did even a few years ago-using no AI, using all your slow development practices, using slow sales and marketing practices-you’re going to really get left behind.

So there is a lot to be done in working out and exploring:

I’m very excited about the idea that we can build large companies with small teams.

I think a lot of developers-well, I mean, there is a lot of HR, politics, and culture change that happens when teams get truly large and companies get truly large. This was one of the founding principles when we started our startup:

“Let’s see how big we can make this with a small team.”

And that’s very exciting because I think you can move fast and keep a great culture.

So that’s why we invest a lot of our energy into the building of the company and what we build and provide right now. Our first product is a voice infrastructure-a voice API for real-time building of voice AI agents.

This is currently a pretty hard problem. We focus a lot on the real-time conversational aspect, and there’s a lot of wicked problems in that:

If you’re a developer building an agent-whether it could be your sales agent or a developer coding agent-and you want to add voice AI, there’s a bunch of stuff you’ll bump into when you start building that.

It’s interesting. We kind of see our customers, and we can predict where they are on that journey because there are a bunch of problems you don’t preempt, and then you quickly slam into them.

We’ve solved a lot of those problems. So with Layer code, you can just take our API, plug it into your existing agent backend.

You can use:

- Any backend you want

- Any agent LLM library you want

- Any LLM you

The basic example is a Next.js application that uses the Versele AI SDK. We’ve also got Python examples as well. You connect to the Voice Layer code and put in our browser SDK, and then you get a little voice agent microphone button and everything within the web app.

We also connect to the phone over Twilio, and for every turn of the conversation, whenever the user finishes speaking, we ship your backend that transcript. You call the LLM of your choice and do your tool calls-everything you need to generate a response as you normally do for a text agent. Then you start streaming the response tokens back to us. As soon as we get that first word, we start converting that text to speech and start streaming it back to the user.

There’s a lot of complexity to make that really low latency and a real-time conversation where you’re not waiting more than a second or two for the agent to respond. We put a lot of work into refining that. There’s also a lot of exciting innovation happening in the model space for voice models, whether it’s transcription or text to speech.

We give you the freedom to switch between those models. You can try out different voice models:

You can find the right trade-off for your experience. There’s a lot of trade-offs in voice between:

We let users explore that and find the right fit for their voice agent.

That is interesting. So, the Next.js SDK streaming latency-is it meant to be the middleware between implementation and feedback to the user?

Yeah, we handle everything related to the voice basically, and we let you just handle text like a text chatbot. There’s no heavy MP3 or WAV file coming down-everything is streaming.

The very interesting problem to solve is that the whole system has to be real-time. The whole thing we call a pipeline. I don’t know if that’s a great name for it because it’s not like an ETL loading pipeline or something, but we call it a pipeline.

The real-time agent system backend, when you start a new session, runs on Cloudflare Workers. It’s running right near the user who clicked to chat with your agent with voice. From that point on, everything is streaming.

The hardest part is working out when the user has finished speaking. It is so difficult because people pause, make sounds, pause again, and start again. Conversation is very dynamic-it’s like a game almost.

We have to do some clever things and use other AI models to help detect when the user has ended speaking. When we have enough confidence-there’s no certainty, but enough confidence-that the user has finished their thought, we finalize that transcript.

We finish transcribing that last word and ship you the whole user utterance. Whether it’s a word, sentence, or paragraph the user has spoken, we bundle it up and choose an end.

The reason we have to do this bundling and can’t stream the user utterance continuously is because LLMs don’t take streaming input.

You can stream input, but you need the complete question to send to the LLM to then make a request and start generating a response. There is no duplex LLM that takes input and generates input/output simultaneously.

Here’s a conceptual question:

What if you constantly wrote to a file locally or wherever the system is, and then at some point, it just ends and you send a call that signals the end versus packaging it all up and sending once it’s done? Like incrementally line by line?

I’m not sure how to describe it, but that’s how I think about it. You constantly write to something and then say,

“Okay, it’s done,” and what was there becomes the final input.

So yes, we can do that in terms of having partial transcripts. We can stream those partial transcripts and then say,

“Okay, now it’s done, now make the LLM call.”

Then you make the LLM call.

Interestingly, sending text is actually super fast in the context of voice, very fast compared to all other steps involved. And actually the default example, this is crazy, I didn’t think this would work until we tried it. But it just uses a webhook. When the user finishes speaking, the basic example sends your Next.js API a webhook with the user text. And it turns out the webhook - sending a webhook with a few sentences in it - that’s fine, that’s fast.

It’s all the other stuff like then waiting for the LLM to respond. Yeah, that’s actually not the hard part. I mean, you have maybe a millisecond-ish or a few milliseconds, but it’s not going to be a dramatic shift, right? The way I described it versus how, yeah.

And we’ve got a web socket endpoint now, so we can kind of shave off that HTTP connection and everything. But yeah, then the big heavy latency items come in, so:

Generating an LLM response. Most LLMs we use right now - the ones we’re using, coding agents - they’re optimized for intelligence, not really speed.

When people optimize for speed, LLM labs tend to optimize for just token throughput. Very few people optimize for time to first token.

And that’s all that matters in voice: I give you the user utterance, how long is the user going to have to wait before I can start playing back an agent response to them? And time to first token is that, right? How long before I get the first kind of word or two that I can turn into voice, and they can start hearing?

The only major LLM lab that actually optimizes for this or maintains a low latency of TTFT (time to first token) is:

OpenAI, most voice agents now are doing it this way. We’re using GPT-4o or Gemini Flash. GPT-4o has some annoying, open API points with some annoying inconsistencies in latency, and that’s kind of the killer in voice, right?

It’s a bad user experience if it works - the first few turns of the conversation are fast, and then suddenly the next turn the agent takes three seconds to respond. You’re like:

“Is the agent wrong? Is the agent broken?”

But then once you get that first token back, then you’re good, because then you can send that text to us, start streaming text to us, and then we can start turning it into full sentences.

And then again, we get to this batching problem. The voice models that do text to voice, again, they don’t stream in the input. They require a full sentence of input before they can start generating any output, because again, how you speak and how things are pronounced depends on what comes later.

So you have to buffer the LLM output into sentences, ship the buffered sentences one by one to the voice model, and then as soon as we get that first chunk of 20 millisecond audio, we chunk it up into streams, stream that straight back down web sockets from the Cloudflare worker straight into the user’s browser, and can start playing the agent response.

Friends, you know this - you’re smart - most AI tools out there are just fancy autocompletes with a chat interface. They help you start the work, but they never do the fun thing you need to do, which is finish the work. That’s what you’re trying to do:

Those pile up into your Notion workspace - looks like a crime scene. I know mine did.

I’ve been using Notion Agent, and it’s changed how I think about delegation - not delegation to another team member, but delegation to something that already knows how I work, my workflows, my preferences, how I organize things.

And here’s what got me: as you may know, we produce a podcast. It takes prep, a lot of details - there’s emails, calendars, notes here and there, and it’s kind of hard to get all that together.

Well, now my Notion Agent helps me do all that. It organizes it for me. It’s got a template based on my preferences, and it’s easy.

Notion brings all your notes, docs, projects into one connected space that just works. It’s:

You spend less time switching between tools, and more time creating that great work you do - the art, the fun stuff. And now, with Notion Agent, your AI doesn’t just help you with your work; it finishes it for you, based on your preferences.

Since everything you’re doing is inside Notion, you’re always in control. Everything the agent does is:

You can trust it with your most precious work.

As you know, Notion is used by us - I use it every day. It’s used by over 50 percent of Fortune 500 companies and some of the fastest-growing companies out there like:

They all use Notion Agent to help their teams:

So try Notion now with Notion Agent at:

notion.com/changelog

That’s all lowercase letters: notion.com/changelog to try your new AI. Teammate Notion Agent today, and we use our link as you know you’re supporting your favorite show: the changelog once again notion.com/changelog.

You chose TypeScript to do all this. We’re pretty set on Cloudflare Workers from day one, and it just solves so many infrastructure problems that you’re going to run into later on.

I like-I don’t think we’ll need a devops person ever. It’s such a- That’s interesting. It’s such a wonderful-there are constraints you have to build to, right? You’re using V8 JavaScript, browser JavaScript, in a Cloudflare Worker. Tons of Node APIs don’t work there. There is a bit of a compatibility layer; you do have to do things a bit differently.

But what do you get in return?

There’s often quite big spikes, like 9 a.m.-everyone’s calling up, there’s an agent somewhere, asking to kind of book an appointment or something. You get these big spikes. You want to be able to scale, and you need to scale very quickly because you don’t want people waiting around.

If you throw tons of users on the same system and start overloading it, then suddenly people get this problem where the agent starts responding in three seconds instead of one second. It sounds weird, but yeah, Cloudflare gives you an incredible amount of that for no effort.

Compared to Lambda and similar platforms, it’s also pretty nice: the interface is just an HTTP interface to your worker. There’s nothing in front, and you can do WebSockets very nicely.

There’s this crazy thing called Durable Objects, which I think is a bad name and also kind of a weird piece of technology, but it’s basically:

You can have it take a bunch of WebSocket connections and do many SQL writes to its SQLite database it has attached. You don’t have to do any kind of special stuff dealing with concurrency and atomic operations.

A simple example is to implement a rate limiter or a counter or something like that very simply in Durable Objects.

You can have as many Durable Objects as you want. Each one has a SQLite database attached. You can have 10 gigabytes per one, and you can do whatever you want.

For example:

- You could have a Durable Object per customer that tracks something that you need to be done in real time.

- You could have a Durable Object per chat room.

As long as you don’t exceed the compute limits of a Durable Object, you can use it for all sorts of magical things.

I think it is a real under-known thing that Cloudflare has. Coming from Pusher, it’s like the kind of real-time primitive now. A lot of the stuff we’d reach for something like Pusher, Durable Objects, especially when building fully real-time systems, is really, really valuable.

You chose TypeScript based on Cloudflare Workers because that gave you:

For those who choose Go-or I don’t think you choose Rust for this because it’s not the kind of place you’d put Rust-but Go would compete for the same kind of mind share for you.

How would the system have been different if you chose Go? Or can you even think about that?

I haven’t actually written any Go, so I don’t know if I can give a good comparison. From the perspective of what we do have out there, there are similar real-time voice agent platforms in Python. I think because many people building the voice models then built coordination systems like layer code for coordinating real-time conversations, Python was the language they chose.

I think what’s more important is the patterns rather than the specific languages.

We actually wrote the first implementation with RxJS, which has implementations in most popular languages. I hadn’t used it before, but we chose it for stream processing. It’s not really for real-time systems, but it gives you… Subjects channel these kinds of has its own names for these things but basically it’s like a pub-sub kind of thing. Then it’s got this kind of functional chaining thing where you can pipe things, filter messages, split messages, and things like that.

That did allow us to build the first version of this quite dynamic system.

We didn’t touch on it, but interruptions are another really difficult dynamic part. Whilst the agent is speaking its response to you, if the user starts speaking again, you need to decide in real time whether the user is interrupting the agent or just agreeing with the agent -

“Oh gosh” or are they trying to say “oh stop”?

That’s a hard problem to solve.

We still have to be transcribing audio even when the user is hearing it. We have to deal with background noise and everything. Then, when we’re confident the user is trying to interrupt the agent, we’ve got to do this whole kind of state change where we tear down all of this in-flight LLM request and in-flight voice generation request, and then as quickly as possible, start focusing on the user’s new question.

Especially if their interruption is really short, like:

Suddenly you’ve got to tear down all the old stuff, transcribe that word stop, then ship that as a new LLM request to the back end, generate the response, and get the agent speaking back as quickly as possible.

And that’s all happening down one pipe, as it were, at the end of the day - audio from the browser microphone, then audio replaying back.

We would have bugs like:

You’re kind of in Audacity or some audio editor, trying to work out:

“Why does it sound like this?”

You’re rearranging bits of audio, going:

“Ah, okay, the responses are taking turns every 20 milliseconds, it’s interleaving the two responses.”

Real, real pain in the ass.

When you solve that problem of the interruption:

How do you direct that interrupt? It really depends on the use case - how you configure the voice agent, really depends on how the voice agent is being used.

For example:

When we call those audio environments, it’s often an early issue users have, like:

Big problem with audio transcription is that it just transcribes any audio it hears. If someone’s talking behind you, it just transcribes that. The model doesn’t know that’s irrelevant conversation.

If you imagine the therapy voice agent, it needs to:

Maybe even tears or crying, or just some sort of human interrupt - but not a true interrupt. It’s something you should maybe even capture in parentheses.

You can choose a few different levels of interruption:

By default, we interrupt when we hear any word that’s not a filler word, so we filter out things like “um”, “uh”, etc.

If you need more intelligence, you can ship off the partial transcripts to an LLM in real time.

For example, let’s say the user starts interrupting the agent every word or every few words, you:

Here's the previous thing the user said, here's what the agent said, here's what the user just said.

Yes or no, do you think they're interrupting the agent?

You get that back in about 250-300 milliseconds.

As you get new transcripts, you:

Then you get the response from that and can make a quite intelligent decision.

These things feel very hacky but they actually work very well.

The first thing I think about there is that Gemini Flash is not local, so you do have to deal with:

Or in the Claude I would… Say Cloud Web’s case, most recently, a lot of downtime occurred because of really heavy usage. The last two days, I’ve had more interruptions on the web than ever, and I’m like that’s because, yeah, it’s the Ralph effect. I’m like, okay cool, I get it. You know, I’m not upset with you because I empathize with how in the world do you scale those services.

So, why does your system not allow for a local LM to be just as smart? Then Gemini Flash might be, to answer that very simple question-like an interrupt, it’s a pretty easy thing to determine.

Yeah, I think smaller LMs can do that. Gemini is just incredibly fast, I think because of their TPU infrastructure. They’ve got an incredibly low TTFT (time to first token), which is the most important thing. But I agree that there are smaller LMs, and actually, I think probably maybe one of the Grok with a Q, Llamas, actually might even be a bit faster. We should try that.

You make a point about reliability. People really notice it in voice agents when it doesn’t work right, especially if a business is relying on it to collect a bunch of calls for them.

So, that is one of the other helpful things that platforms like ours provide-even just cost. I imagine over time, cost is a factor. Right now, you’re probably fine with it because you’re innovating and maybe finding out things like:

You’re sort of just-in-time building a lot of this stuff, and you might be okay with the inherent cost of innovation. But at some point, you may flatten a little bit and think, “You know what? If it had been running locally for the last little bit, we just saved 50 grand.” I don’t know what the number is, but the local model becomes a version of free when you own:

- The hardware

- The compute

- The pipe to it

You can own the SLA latency to it as well as the reliability that comes from that.

There are some cool new transcription models from NVIDIA, and they’ve got some voice models. There was a great demo of a fully open-source local voice agent platform done with Pipecat, which is the Python coordination agent infrastructure open source project that I was mentioning.

They’ve got a really great pattern: a plug-in in plug-in pattern for their voice agent. I think that’s the right pattern. We’ve adopted a similar one, and other frameworks have done that. We’ve adopted a similar pattern for ours when we rebuilt it recently.

The important thing is the plugins. These are kind of independent things that you can test in isolation. That was the biggest problem we had with RxJS-the whole thing was kind of like mixing, kind of audio mixing things where you have cables going everywhere. It was kind of like that with RxJS subjects going absolutely everywhere.

It was hard for us as humans to understand. It was the kind of code where you come back to a week later and ask, “What was happening here?” Often, we’d write code where the code at the top of the file was actually the thing that happened last in execution, just because that’s how RxJS was telling us to do it or guiding us on how we had to initialize things.

One of the key things we did was move to a plug-in architecture. We moved to a very basic system with no kind of RxJS style stream processing plugin-just all very simple JavaScript with async iterables. We just pass a waterfall of messages down through plugins. It’s so much better.

We can take out a plugin if we need to, unit test the plugin, write integration tests, and mock out plugins up and down. We’re about to launch that, and that’s just a game changer.

Interestingly, tying back to LLMs, we ended up here because with the first implementation, we found it hard as developers to understand the code we’d written. The LLMs were hopeless; they just could not hold the state of this dynamic, crazy multi-subject stream system in their head. The context was everywhere-it was here and there.

Even if I would take the whole file, copying and pasting files into ChatGPT Pro, being like:

“You definitely have all the context here, fix this problem.”

And they would solve the problem.

Part of the problem was that complexity-not having the ability to test things in isolation meant we couldn’t have a kind of TDD loop, whether with a human or with an agent.

Because of that, we couldn’t use agents to add features to this. The platform to the core of the platform was slowing us down, and so that’s when we really started to use coding agents called Code and Codex like really properly and hard. I spent two weeks just with Code, Codex, and the mission was:

“If I can get the coding agent to write the new version of this, it was kind of not even a refactor; it had to be rewritten start from scratch, first principles.”

Then, by virtue of it writing it, it’ll understand it, and I’ll be able to use coding agents to add features.

I started with literally the API docs for our public API because I didn’t want to change that, and the API docs of all the providers and models we implement, with like the speech-to-text and text-to-speech model provider endpoints, and just some ideas about