This post

Is intended to supply a detailed proof of the Riemann mapping theorem.

Riemann mapping theorem. Every simply connected region $\Omega \subsetneq \mathbb{C}$ is conformally equivalent to the open unit disc $U$.

Fortunately the proof can be found in many textbooks on complex analysis, but it is fairly technical and can be painful to read. This post can be considered a painkiller: the proof is presented here with many details filled in. However, the writer still encourages the reader to reproduce the proof with their own pen and paper. The writer also hopes that this post makes the theorem and its proof more accessible.

However, there is a bar. We need to assume some background in complex analysis, although it is fairly basic. The minimal prerequisite is familiarity with the following topics and being able to answer the questions raised below.

Contour integration, Cauchy’s formula.

Almost uniform convergence. Let $\Omega \subset \mathbb{C}$ be open and suppose that $f_j \in H(\Omega)$ for all $j=1,2,\dots$, and $f_j \to f$ uniformly on every compact subset $K \subset \Omega$. Is $f \in H(\Omega)$? What is the almost uniform limit of $f_j'$? Informally, when a sequence of functions converges uniformly on every compact subset, we call this almost uniform convergence. It has nothing to do with almost everywhere in integration theory. In fact, this post does not require background in Lebesgue integration theory.

Open mapping theorem (complex analysis version).

Maximum modulus principle and some variants.

Rouché’s theorem. Or even more, the calculus of residues.

Preparation

Despite the prerequisites, we still need some preparation beforehand.

Simply Connected

Definition 1. Let $X$ be a connected topological space. Let $\gamma:[0,1] \to X$ be a closed curve, i.e., a continuous map such that $\gamma(0)=\gamma(1)$. We say $\gamma$ is null-homotopic if it is homotopic to a constant map $\gamma_0:[0,1] \to \{x\}$ with $x \in X$. We say $X$ is simply connected if every closed curve in $X$ is null-homotopic.

Intuitively, if $X$ is simply connected, then $X$ contains no “hole”. For example, the unit disc $U$ is simply connected. However, $U \setminus \{0\}$ is not. On the other hand, $U \setminus [0,1)$ is still simply connected. Another satisfying result is that every convex open set is simply connected: the required homotopy is given by a convex combination.
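
To make the convex combination explicit, here is a short sketch (the base point $x_0$ and the name $H$ are chosen here for illustration). Let $X$ be convex, fix $x_0 \in X$ and let $\gamma$ be a closed curve in $X$. Put

$$
H(s,t)=(1-t)\gamma(s)+tx_0, \qquad (s,t) \in [0,1] \times [0,1].
$$

Convexity guarantees $H(s,t) \in X$, and $H$ is continuous with $H(s,0)=\gamma(s)$, $H(s,1)=x_0$ and $H(0,t)=H(1,t)$ for every $t$. So $\gamma$ is homotopic to the constant curve at $x_0$ through closed curves.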

Simply connected regions have a lot of good properties, which are summarised below.

Proposition 1. For a region $\Omega$ (an open and connected subset of $\mathbb{R}^2$), the following conditions are equivalent. Each one implies the other eight.

1. $\Omega$ is homeomorphic to the open unit disc $U$.

2. $\Omega$ is simply connected.

3. $\operatorname{Ind}_\gamma(\alpha)=0$ for every closed path $\gamma$ in $\Omega$ and every $\alpha \in S^2 \setminus \Omega$, where $S^2$ is the Riemann sphere.

4. $S^2 \setminus \Omega$ is connected.

5. Every $f \in H(\Omega)$ can be approximated by polynomials, almost uniformly.

6. For every $f \in H(\Omega)$ and every closed path $\gamma$ in $\Omega$, $\int_\gamma f(z)\,dz=0$.

7. Every $f \in H(\Omega)$ has an anti-derivative. That is, there exists an $F \in H(\Omega)$ such that $F'=f$.

8. If $f \in H(\Omega)$ and $1/f \in H(\Omega)$, then there exists a $g \in H(\Omega)$ such that $f=\exp{g}$.

9. For such $f$, there also exists a $\varphi \in H(\Omega)$ such that $f=\varphi^2$.

Conditions 5 to 9 essentially say that calculus works well here and we need not worry about nightmare counterexamples, to some extent. Most of the implications $n \implies n+1$ are not that difficult, but some deserve a mention. 4 implying 5 is a consequence of Runge’s theorem. In the implication of 7 to 8, one needs to use the fact that $\Omega$ is connected. When we have $f=\exp{g}$, we can put $\varphi=\exp\frac{g}{2}$, from which we obtain $f=\varphi^2$. 9 implying 1 is partly a consequence of the Riemann mapping theorem. Indeed, if $\Omega$ is the whole plane then the homeomorphism is easy: $z \mapsto \frac{z}{1+|z|}$ is a homeomorphism of $\Omega$ onto $U$. But we need the Riemann mapping theorem to handle the remaining case, when $\Omega$ is a proper subset.
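
The claim about $z \mapsto \frac{z}{1+|z|}$ can be checked by writing down the inverse explicitly (a short verification, with $T$ and $S$ as names introduced here). Put $T(z)=\frac{z}{1+|z|}$ for $z \in \mathbb{C}$ and $S(w)=\frac{w}{1-|w|}$ for $w \in U$. Then $|T(z)|=\frac{|z|}{1+|z|}<1$, and

$$
S(T(z))=\frac{z}{1+|z|} \cdot \frac{1}{1-\frac{|z|}{1+|z|}}=z, \qquad T(S(w))=\frac{w}{1-|w|} \cdot \frac{1}{1+\frac{|w|}{1-|w|}}=w.
$$

Both $T$ and $S$ are continuous, so $T$ is a homeomorphism of $\mathbb{C}$ onto $U$. Note that $T$ is not holomorphic: by Liouville's theorem, a holomorphic map of $\mathbb{C}$ into $U$ is constant, which is why the whole plane has to be excluded from the Riemann mapping theorem.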

If you know the definition of a sheaf, you will realise that $(\mathbb{C},H(\cdot))$ is indeed a sheaf. For each open subset $\Omega \subset \mathbb{C}$, $H(\Omega)$ is a ring, even more precisely, a $\mathbb{C}$-algebra. The exponential map $\exp:g \mapsto e^g$ is a sheaf morphism into the sheaf of nowhere-vanishing holomorphic functions. However, we now see that, for a region $\Omega$, it is surjective on $H(\Omega)$ if and only if $\Omega$ is simply connected. I hope this can help you figure out an exercise in algebraic geometry. You know, that celebrated book by Robin Hartshorne.

Since we haven’t proved the Riemann mapping theorem yet, we cannot use the equivalence above. However, we can use 9 right away. This gives rise to Koebe’s square root trick.

Equicontinuity & Normal Family

Equicontinuity is quite an important concept. You may have seen it in differential equations, harmonic functions, or maybe just sequences of functions. We will use it to describe a family of functions for which almost uniform convergence can be established.

Definition 2. Let $\mathscr{F}$ be a family of functions $(X,d) \to \mathbb{C}$ where $(X,d)$ is a metric space.

We say that $\mathscr{F}$ is equicontinuous if, to every $\varepsilon>0$, there corresponds a $\delta>0$ such that whenever $d(x,y)<\delta$, we have $|f(x)-f(y)|<\varepsilon$ for all $f \in \mathscr{F}$. In particular, by definition, all functions in $\mathscr{F}$ are uniformly continuous.

We say that $\mathscr{F}$ is pointwise bounded if, to every $x \in X$, there corresponds some $0 \le M(x) < \infty$ such that $|f(x)| \le M(x)$ for every $f \in \mathscr{F}$.

We say that $\mathscr{F}$ is uniformly bounded on each compact subset if, to each compact $K \subset X$, there corresponds a number $M(K)$ such that $|f(z)| \le M(K)$ for all $f \in \mathscr{F}$ and $z \in K$.

These concepts describe continuity and boundedness of a whole family at once. In our proof of the Riemann mapping theorem, we do not construct the map explicitly. Instead, we will use the concepts above to show that a suitable map exists, as a limit. In this post we simply put $X=\Omega \subset \mathbb{C}$, a simply connected region, and $d$ is the Euclidean metric.

A famous result on equicontinuity is the Arzelà-Ascoli theorem, which says that pointwise boundedness and equicontinuity together imply that every sequence has an almost uniformly convergent subsequence.

Theorem 1 (Arzelà-Ascoli). Let $\mathscr{F}$ be a family of complex functions on a metric space $X$ which is pointwise bounded and equicontinuous. Suppose $X$ is separable, i.e., it contains a countable dense set. Then every sequence $\{f_n\}$ in $\mathscr{F}$ has a subsequence that converges uniformly on every compact subset of $X$.

Here is a self-contained proof.

Certainly it is OK to let $X$ be a subset of $\mathbb{R}$, $\mathbb{C}$ or their product. We use this in real and complex analysis for this reason. We will need this almost uniform convergence to establish our conformal map. To specify its application in complex analysis, we introduce the concept of normal family.

Definition 3. Suppose $\mathscr{F} \subset H(\Omega)$, for some region $\Omega \subset \mathbb{C}$. We call $\mathscr{F}$ a normal family if every sequence of members of $\mathscr{F}$ contains a subsequence which converges uniformly on every compact subset of $\Omega$. The limit function is not required to be in $\mathscr{F}$.

We now apply Arzelà-Ascoli to complex analysis.

Theorem 2 (Montel). Suppose $\mathscr{F} \subset H(\Omega)$ is uniformly bounded on each compact subset of $\Omega$. Then $\mathscr{F}$ is a normal family.

Proof. We need to show that $\mathscr{F}$ is “almost” equicontinuous, that is, equicontinuous on each compact subset. Since uniform boundedness on compact subsets clearly implies pointwise boundedness, we can then apply Arzelà-Ascoli.

Let $\{K_n\}$ be a sequence of compact sets such that (1) $\bigcup_n K_n = \Omega$ and (2) $K_n \subset K^\circ_{n+1} \subset K_{n+1}$, where $K^\circ_{n+1}$ is the interior of $K_{n+1}$. Then for every $n$ there exists a positive number $\delta_n$ such that, for every $z \in K_n$,

$$
D(z,2\delta_n) \subset K^\circ_{n+1},
$$

where $D(a,r)$ is the disc centred at $a$ with radius $r$. If such $\delta_n$ does not exist, then there are points $z_k \in K_n$ with $D(z_k,1/k) \setminus K^\circ_{n+1} \ne \varnothing$ for every $k$. By compactness of $K_n$, a subsequence of $\{z_k\}$ converges to some $z \in K_n$, and then $D(z,\delta) \setminus K^\circ_{n+1} \ne \varnothing$ whenever $\delta>0$, which is to say, $z$ is not an interior point of $K_{n+1}$. But this is impossible because $z$ lies in the interior of $K_{n+1}$ by (2).

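For such $\delta_n$, we pick $z',z'' \in K_n$ such that $|z'-z''| < \delta_n$. Let $\gamma$ be the positively oriented circle with centre at $z'$ and radius $2\delta_n$, i.e. the boundary of $D(z',2\delta_n)$. Recall that the Cauchy formula says that, for $f \in \mathscr{F}$ and $z \in D(z',2\delta_n)$,

$$
f(z)=\frac{1}{2\pi i}\int_\gamma \frac{f(\zeta)}{\zeta-z}\,d\zeta.
$$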

We will make use of this. Applying the formula at $z'$ and at $z''$ and subtracting, we have

$$
f(z')-f(z'')=\frac{1}{2\pi i}\int_\gamma f(\zeta)\left[\frac{1}{\zeta-z'}-\frac{1}{\zeta-z''}\right]d\zeta=\frac{z'-z''}{2\pi i}\int_\gamma \frac{f(\zeta)}{(\zeta-z')(\zeta-z'')}\,d\zeta.
$$

Now we make use of our choice of $z'$, $z''$ and $\gamma$. By definition, for $\zeta \in \gamma^\ast$ (the range of $\gamma$), we have $|\zeta-z'|=2\delta_n$. Since $|z'-z''|<\delta_n$, we have $2\delta_n=|\zeta-z'|=|\zeta-z''+z''-z'|\le |\zeta-z''|+|z''-z'|$. Therefore $|\zeta-z''| \ge 2\delta_n-|z''-z'|>\delta_n$. Bearing this in mind, we see

$$
|f(z')-f(z'')| \le \frac{M(K_{n+1})}{\delta_n}|z'-z''|.
$$

This may look confusing so we explain it a little more. Since $D(z',2\delta_n) \subset K^\circ_{n+1}$, we must have $\overline{D}(z',2\delta_n) \subset K_{n+1}$, therefore whenever $\zeta \in \gamma^\ast=\partial D(z',2\delta_n)$, we have $|f(\zeta)| \le M(K_{n+1})$. This is where we use the hypothesis of uniform boundedness. Moreover, we have $|(\zeta-z')(\zeta-z'')|>2\delta_n^2$. The modulus of the integrand $\frac{f(\zeta)}{(\zeta-z')(\zeta-z'')}$ is therefore bounded by $\frac{M(K_{n+1})}{2\delta_n^2}$. Since $\gamma$ has length $4\pi\delta_n$, the modulus of the integral over $\gamma$ is bounded by $\frac{M(K_{n+1})}{2\delta_n^2}$ times $4\pi\delta_n$, and multiplying by $\frac{|z'-z''|}{2\pi}$ gives the result.

What does this inequality imply? For $\varepsilon>0$, if we pick $\delta=\min\{\delta_n,\frac{\delta_n\varepsilon}{M(K_{n+1})}\}$, then $|f(z')-f(z'')|<\varepsilon$ for every $f \in \mathscr{F}$ and all $z',z'' \in K_n$ with $|z'-z''|<\delta$. That is, for each $K_n$, the restrictions of the members of $\mathscr{F}$ to $K_n$ form an equicontinuous family.

Now consider a sequence $\{f_j\}$ in $\mathscr{F}$. Applying the Arzelà-Ascoli theorem to the restrictions of the $f_j$ to $K_1$ gives an infinite subset $S_1 \subset \mathbb{N}$ such that $f_j$ converges uniformly on $K_1$ as $j \to \infty$ within $S_1$. Applying it again to $\{f_j\}_{j \in S_1}$ restricted to $K_2$ gives an infinite subset $S_2 \subset S_1$, and so on: for each $n$ we obtain an infinite set $S_n \supset S_{n+1}$ such that $f_j$ converges uniformly on $K_n$ as $j \to \infty$ within $S_n$. Pick a strictly increasing sequence $\{s_j\}$ with $s_j \in S_j$. Then $\{f_{s_j}\}$ converges uniformly on every $K_n$, and therefore on every compact subset $K$ of $\Omega$, because every such $K$ lies in some $K_n$. The statement is now proved. $\square$

Remarks. We have no idea what the limit is, and this happens in our proof of the Riemann mapping theorem as well.

The sequence $\{K_n\}$ can be constructed explicitly, however. In fact, for every open set $\Omega$ in the plane there is a sequence $\{K_n\}$ of compact sets such that

- $\bigcup_n K_n=\Omega$.

- $K_n \subset K_{n+1}^\circ$.

- For every compact $K \subset \Omega$, there is some $n$ such that $K \subset K_n$.

- Every component of $S^2 \setminus K_n$ contains a component of $S^2 \setminus \Omega$.

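The sets are constructed as follows and can be verified to satisfy the properties above. For each $n$, define

$$
V_n = D(\infty,n) \cup \bigcup_{a \notin \Omega} D\left(a,\frac{1}{n}\right),
$$

where $D(\infty,n)=\{z \in \mathbb{C}:|z|>n\} \cup \{\infty\}$. Then $K_n=S^2 \setminus V_n$ is what we want.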
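
As a concrete instance (a sketch for the special case $\Omega=U$, chosen here for illustration), one admissible exhaustion is

$$
K_n=\overline{D}\left(0,1-\frac{1}{n+1}\right)=\left\{z:|z| \le 1-\frac{1}{n+1}\right\}, \qquad n=1,2,\dots
$$

Each $K_n$ is compact, $K_n \subset K_{n+1}^\circ$, the union is $U$, every compact $K \subset U$ has $\max_{z \in K}|z|<1$ and so lies in some $K_n$, and $S^2 \setminus K_n$ is connected and contains $S^2 \setminus U$. The sequence $f_j(z)=z^j$ then illustrates almost uniform convergence: $\sup_{z \in K_n}|f_j(z)|=\left(1-\frac{1}{n+1}\right)^j \to 0$ as $j \to \infty$ for each fixed $n$, while $\sup_{z \in U}|f_j(z)|=1$ for every $j$. So the convergence $f_j \to 0$ is uniform on compact subsets but not on $U$.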

The Schwarz Lemma

Is another important tool for our proof of the Riemann mapping theorem. We need this lemma to establish important inequalities. This lemma, as well as its variants, shows the rigidity of holomorphic maps. We make use of the maximum modulus theorem. For simplicity, let $H^\infty$ be the Banach space of bounded holomorphic functions on $U$, equipped with the supremum norm $| \cdot |_\infty$.

Theorem 3 (Schwarz lemma). Suppose $f:U \to \mathbb{C}$ is a holomorphic map in $H^\infty$ such that $f(0)=0$ and $|f|_\infty \le 1$, then

$$
|f(z)| \le |z| \quad (z \in U), \qquad |f'(0)| \le 1;
$$

on the other hand, if $|f(z)|=|z|$ holds for some $z \in U \setminus \{0\}$, or if $|f’(0)|=1$ holds, then $f(z)=\lambda{z}$ for some complex constant $\lambda$ such that $|\lambda|=1$.

Proof. Since $f(0)=0$, $f(z)/z$ has a removable singularity at $z=0$. Hence there exists $g \in H(U)$ such that $f(z)=zg(z)$, and $g(0)=f'(0)$. Fix $0<r<1$. For any $z \in U$ such that $|z|<r$, the maximum modulus theorem gives

$$
|g(z)| \le \max_{\theta}|g(re^{i\theta})| = \max_{\theta}\frac{|f(re^{i\theta})|}{r} \le \frac{1}{r}.
$$

Therefore when $r \to 1$, we see $|g(z)| \le 1$ for all $z \in U$. Hence $|f(z)| \le |z|$ and $|f'(0)|=|g(0)| \le 1$ follow. On the other hand, if $|g(z)|=1$ at some point of $U$, the maximum modulus theorem forces $g$ to be a constant, say $\lambda$, from which it follows that $|\lambda|=1$ and $f(z)=\lambda{z}$. $\square$

There are many variants of the Schwarz lemma, and we will be using Schwarz-Pick.

Definition 4. For any $\alpha \in U$, define

$$
\varphi_\alpha(z)=\frac{z-\alpha}{1-\overline{\alpha}z}.
$$

This family is a subfamily of Möbius transformations, but we are not paying very much attention to this family right now. We need the fact that such $\varphi_\alpha$ is always a one-to-one mapping which carries $S^1$ (the unit circle) onto $S^1$, $U$ onto $U$ and $\alpha$ to $0$, with inverse $\varphi_{-\alpha}$. This requires another application of the maximum modulus theorem. A direct computation shows that

$$
\varphi_\alpha'(0)=1-|\alpha|^2, \qquad \varphi_\alpha'(\alpha)=\frac{1}{1-|\alpha|^2}.
$$

Theorem 4 (Schwarz-Pick lemma). Suppose $\alpha,\beta \in U$, $f \in H^\infty$ and $| f|_\infty \le 1$, $f(\alpha)=\beta$. Then

$$
|f'(\alpha)| \le \frac{1-|\beta|^2}{1-|\alpha|^2}.
$$

Proof. Consider

$$
g=\varphi_\beta \circ f \circ \varphi_{-\alpha}.
$$

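We see $g \in H^\infty$ and $|g|_\infty \le 1$. What’s more important, $g(0)=\varphi_\beta \circ f(\alpha)=\varphi_\beta(\beta)=0$. By the Schwarz lemma, $|g'(0)| \le 1$. On the other hand, the chain rule gives

$$
g'(0)=\varphi_\beta'(\beta)f'(\alpha)\varphi_{-\alpha}'(0)=\frac{1-|\alpha|^2}{1-|\beta|^2}f'(\alpha),
$$

and therefore

$$
|f'(\alpha)| \le \frac{1-|\beta|^2}{1-|\alpha|^2}.
$$

In particular, equality holds if and only if $g(z)=\lambda{z}$ for some constant $\lambda$ with $|\lambda|=1$. If this is the case, then

$$
f(z)=\varphi_{-\beta}(\lambda\varphi_\alpha(z)) \quad (z \in U).
$$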

The story can go on but we halt here and continue our story of the Riemann mapping theorem.
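
Before moving on, here is a small numerical sanity check of the facts used in this section. It is only a sketch, not part of the proof: it assumes the standard formula $\varphi_\alpha(z)=\frac{z-\alpha}{1-\overline{\alpha}z}$, tests the Schwarz-Pick bound $|f'(\alpha)| \le \frac{1-|f(\alpha)|^2}{1-|\alpha|^2}$ on the sample function $f(z)=z^2$, and the helper names `phi`, `dphi` and `schwarz_pick_gap` are made up for this illustration.

```python
import cmath
import math

def phi(alpha, z):
    """Disc automorphism phi_alpha(z) = (z - alpha) / (1 - conj(alpha) * z)."""
    return (z - alpha) / (1 - alpha.conjugate() * z)

def dphi(alpha, z):
    """Derivative of phi_alpha, obtained from the quotient rule."""
    return (1 - abs(alpha) ** 2) / (1 - alpha.conjugate() * z) ** 2

alpha = 0.3 + 0.4j  # arbitrary sample point of U, |alpha| = 0.5

# phi_alpha(alpha) = 0, and phi_{-alpha} inverts phi_alpha.
z = -0.2 + 0.5j
value_at_alpha = phi(alpha, alpha)
round_trip_error = abs(phi(-alpha, phi(alpha, z)) - z)

# Points of the unit circle stay on the unit circle.
circle = [cmath.exp(2j * math.pi * k / 12) for k in range(12)]
max_circle_error = max(abs(abs(phi(alpha, w)) - 1) for w in circle)

# Derivative values: 1 - |alpha|^2 at 0 and 1 / (1 - |alpha|^2) at alpha.
d_at_0 = dphi(alpha, 0)
d_at_alpha = dphi(alpha, alpha)

def schwarz_pick_gap(f, df, a):
    """(1 - |f(a)|^2) / (1 - |a|^2) - |f'(a)|, non-negative by Schwarz-Pick."""
    return (1 - abs(f(a)) ** 2) / (1 - abs(a) ** 2) - abs(df(a))

# Sample function f(z) = z^2, which maps U into U; sample points have |a| <= 0.9.
samples = [0.09 * k * cmath.exp(2j * math.pi * k / 7) for k in range(1, 11)]
min_gap = min(schwarz_pick_gap(lambda w: w * w, lambda w: 2 * w, a) for a in samples)

print(abs(value_at_alpha), round_trip_error, max_circle_error)
print(d_at_0, d_at_alpha, min_gap)
```

For $f(z)=z^2$ the gap can be computed by hand: it equals $1+|\alpha|^2-2|\alpha|=(1-|\alpha|)^2$, which is positive on $U$, in line with the fact that $z^2$ is not of the extremal form $\varphi_{-\beta}(\lambda\varphi_\alpha(z))$.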

The Riemann Mapping Theorem

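Each $z \ne 0$ determines a direction from the origin, which can be described by

$$
A[z]=\frac{z}{|z|}.
$$

Let $f:\Omega \to \mathbb{C}$ be a map such that $f(z) \ne f(z_0)$ in some deleted neighbourhood of $z_0$. We say $f$ preserves angles at $z_0 \in \Omega$ if

$$
\lim_{r \to 0^+}e^{-i\theta}A[f(z_0+re^{i\theta})-f(z_0)]
$$

exists and is independent of $\theta$.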

Conformal mappings preserve angles in a reasonable way. A function $f$ is conformal if it is holomorphic and $f'(z) \ne 0$ everywhere. The following theorem makes this precise; it is pretty elementary so we are not including the full proof in this post.

Theorem 5. Let $f$ map a region $\Omega$ into the plane. If $f’(z_0)$ exists at some $z_0 \in \Omega$ and $f’(z_0) \ne 0$, then $f$ preserves angles at $z_0$. Conversely, if the differential $Df$ exists and is different from $0$ at $z_0$, and if $f$ preserves angles at $z_0$, then $f’(z_0)$ exists and is different from $0$.
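
Two small examples may help to see what Theorem 5 rules out. This is only a sketch: the direction of $w \ne 0$ is taken to be $w/|w|$, and angle preservation at $0$ is taken to mean that $e^{-i\theta}\frac{f(re^{i\theta})-f(0)}{|f(re^{i\theta})-f(0)|}$ has a limit as $r \to 0^+$ which does not depend on $\theta$. For $f(z)=z^2$, where $f'(0)=0$,

$$
e^{-i\theta}\frac{(re^{i\theta})^2}{|re^{i\theta}|^2}=e^{i\theta},
$$

which depends on $\theta$: angles at the origin are doubled. For $f(z)=\overline{z}$, whose differential is a reflection and hence different from $0$,

$$
e^{-i\theta}\frac{re^{-i\theta}}{r}=e^{-2i\theta},
$$

which again depends on $\theta$. This agrees with Theorem 5, since $\overline{z}$ is not complex differentiable at $0$. In contrast, for $f(z)=cz$ with $c \ne 0$ the expression equals $c/|c|$ for every $\theta$.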

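There is no confusion about $f'(z_0)$. By differential $Df$ we mean a linear map $L:\mathbb{R}^2 \to \mathbb{R}^2$ such that, writing $z_0=(x_0,y_0)$, we have

$$
f(x_0+x,y_0+y)=f(x_0,y_0)+L(x,y)+(x^2+y^2)^{1/2}\eta(x,y),
$$

where $\eta(x,y) \to 0$ as $x \to 0$ and $y \to 0$. To prove Theorem 5, one can assume that $z_0=f(z_0)=0$. When the differential exists, one writes

$$
f(z)=\alpha{z}+\beta\overline{z}+|z|\eta(z) \quad (z \ne 0),
$$

where $\alpha$ and $\beta$ are complex numbers and $\eta(z) \to 0$ as $z \to 0$.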

We say that two regions $\Omega_1$ and $\Omega_2$ are conformally equivalent if there is a conformal one-to-one mapping of $\Omega_1$ onto $\Omega_2$. The Riemann mapping theorem states that

Theorem 6 (Riemann mapping theorem). Every proper simply connected region $\Omega$ in the plane is conformally equivalent to the open unit disc $U$.

As a famous example, the upper half-plane $\mathbb{H}$ is conformally equivalent to $U$ by the Cayley transform.

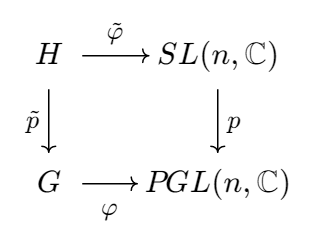

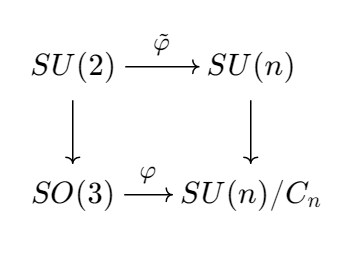
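
The Cayley transform can be explored numerically. The snippet below is a minimal sketch which assumes the usual normalisation $z \mapsto \frac{z-i}{z+i}$ (the formula is not spelled out above, so this choice is an assumption), with inverse $w \mapsto i\frac{1+w}{1-w}$. The function names `cayley` and `cayley_inverse` are introduced here for illustration.

```python
def cayley(z):
    """Cayley transform z -> (z - i) / (z + i), assumed normalisation."""
    return (z - 1j) / (z + 1j)

def cayley_inverse(w):
    """Inverse map w -> i (1 + w) / (1 - w), defined for w != 1."""
    return 1j * (1 + w) / (1 - w)

# Sample points with positive imaginary part.
upper = [complex(x, y) for x in (-3, -1, 0, 2) for y in (0.1, 1, 5)]

# Points of the upper half-plane should land strictly inside the unit disc.
moduli = [abs(cayley(z)) for z in upper]

# Points of the real axis should land on the unit circle.
real_line = [abs(cayley(complex(x, 0))) for x in (-2, 0, 1.5)]

# Composing with the inverse should return the starting point.
round_trip = max(abs(cayley_inverse(cayley(z)) - z) for z in upper)

print(max(moduli), real_line, round_trip)
```

The reason behind the first check is geometric: $|z-i|<|z+i|$ exactly when $z$ is closer to $i$ than to $-i$, that is, when $\operatorname{Im} z>0$.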

As one may expect, this theorem asserts that the study of a simply connected region $\Omega$ can be reduced to $U$ to some extent. But a conformal equivalence is not just about homeomorphism. If $\varphi:\Omega_1 \to \Omega_2$ is a conformal one-to-one mapping of $\Omega_1$ onto $\Omega_2$, then $\varphi^{-1}:\Omega_2 \to \Omega_1$ is also a conformal mapping. In the language of algebra, such a mapping $\varphi$ induces a ring isomorphism

$$
\varphi^\ast:H(\Omega_2) \to H(\Omega_1), \qquad f \mapsto f \circ \varphi.
$$

Therefore, the ring $H(\Omega_2)$ is algebraically the same as $H(\Omega_1)$. The Riemann mapping theorem therefore implies that, if $\Omega$ is a proper simply connected region, then $H(\Omega) \cong H(U)$. From this we can extract much more information than from a homeomorphism alone. One can also extend the story to $S^2$, the Riemann sphere, but that’s another story.

The Proof by Arguing A Normal Family

The proof is fairly technical. But it is a good chance to test our skill in complex analysis. The bread and butter of this proof is the following set:

$$
\Sigma=\{\psi \in H(\Omega):\psi \text{ is one-to-one and } \psi(\Omega) \subset U\}.
$$

Our goal is to prove that there is some $\psi \in \Sigma$ such that $\psi(\Omega)=U$. Note that, once non-emptiness is proved, since $|\psi|<1$ for every $\psi \in \Sigma$, Montel's theorem shows that $\Sigma$ is a normal family.

Step 1 - Prove Non-emptiness Using Koebe’s Square Root Trick

Pick $w_0 \in \mathbb{C} \setminus \Omega$. Then $g(z)=z-w_0 \in H(\Omega)$ and, what is more important, $\frac{1}{g} \in H(\Omega)$. By 9 of Proposition 1, there exists $\varphi \in H(\Omega)$ such that $\varphi^2(z)=g(z)$, i.e., informally, $\varphi(z)=\sqrt{z-w_0}$ in $\Omega$. If $\varphi(z_1)=\varphi(z_2)$, then $z_1-w_0=\varphi(z_1)^2=\varphi(z_2)^2=z_2-w_0$ and then $z_1=z_2$. Therefore $\varphi$ is one-to-one. On the other hand, if $\varphi(z_1)=-\varphi(z_2)$, we still have $z_1-w_0=\varphi^2(z_1)=\varphi^2(z_2)=z_2-w_0$, and $z_1=z_2$, which forces $\varphi(z_1)=0$. This is impossible because $\varphi^2=g$ has no zero in $\Omega$. Hence $\varphi(\Omega)$ never contains both $w$ and $-w$. This is Koebe’s square root trick.

Since $\varphi$ is an open mapping, there is an open disc $D(a,r) \subset \varphi(\Omega)$, where $a \in \varphi(\Omega)$, $a \ne 0$ and $0<r<|a|$. But by the arguments above we have $-a \not\in \varphi(\Omega)$, and moreover $D(-a,r) \cap \varphi(\Omega) = \varnothing$. For this reason, we can put

$$
\psi(z)=\frac{r}{\varphi(z)+a}.
$$

It follows that

$$
|\psi(z)|=\frac{r}{|\varphi(z)+a|}<1 \quad (z \in \Omega),
$$

and therefore $\psi(\Omega) \subset U$. Since $\varphi$ is one-to-one, $\psi$ is one-to-one as well, and we deduce that $\psi \in \Sigma$. This set is not empty.

Remark. You may have trouble believing that $D(-a,r) \cap \varphi(\Omega)=\varnothing$. But if we pick any $w \in D(-a,r) \cap \varphi(\Omega)$, we have some $z' \in \Omega$ such that $\varphi(z')=w$. We also have $|-a-w|<r$, and this implies $|a-(-w)|=|a+w|=|-a-w|<r$, and therefore $-w \in D(a,r) \subset \varphi(\Omega)$. There exists some $z'' \in \Omega$ such that $\varphi(z'')=-w=-\varphi(z')$. By the square root trick above this forces $z'=z''$, hence $-w=w=0$. It follows that $|a|<r$ and this is a contradiction.

Since $D(-a,r) \cap \varphi(\Omega)=\varnothing$ and $\varphi(\Omega)$ is open, $\varphi(\Omega)$ cannot meet the boundary of $D(-a,r)$ either. Hence $|\varphi(z)-(-a)|>r$ for all $z \in \Omega$, and $|\psi(z)|<1$ follows.
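
To see Step 1 at work, here is a numerical sketch for one concrete region. Everything specific in it is an assumption made for illustration: the region is the slit plane $\Omega=\mathbb{C} \setminus (-\infty,0]$, the omitted point is $w_0=0$, the square root $\varphi$ is the principal branch (so $\varphi(\Omega)$ is the right half-plane), and the disc is $D(a,r)$ with $a=1$, $r=\frac{1}{2}$. The map tested is $\psi(z)=\frac{r}{\varphi(z)+a}$.

```python
import cmath

w0 = 0.0          # a point outside Omega = C \ (-inf, 0] (assumed example region)
a, r = 1.0, 0.5   # D(a, r) lies in the right half-plane phi(Omega)

def phi(z):
    """Principal square root of z - w0; holomorphic and one-to-one on Omega."""
    return cmath.sqrt(z - w0)

def psi(z):
    """Candidate member of Sigma: psi = r / (phi + a)."""
    return r / (phi(z) + a)

# Sample points of Omega: off the real axis, plus some positive reals.
samples = [complex(x, y) for x in (-4, -1, 0, 1, 4) for y in (-3, -0.5, 0.5, 3)]
samples += [complex(x, 0) for x in (0.25, 1, 9)]

# psi should map Omega into the unit disc.
max_modulus = max(abs(psi(z)) for z in samples)

# phi(Omega) should stay at distance more than r from -a.
min_dist_to_minus_a = min(abs(phi(z) + a) for z in samples)

# Distinct sample points should have distinct images (psi is one-to-one).
values = [psi(z) for z in samples]
min_separation = min(abs(u - v) for i, u in enumerate(values) for v in values[i + 1:])

print(max_modulus, min_dist_to_minus_a, min_separation)
```

In this example $\psi(\Omega)$ is far from all of $U$, which is exactly the situation that the next step improves on.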

Step 2 - Enlarge the Range

If $\psi \in \Sigma$ and $\psi(\Omega) \subsetneqq U$, and $z_0 \in \Omega$, then there exists a $\psi_1 \in \Sigma$ such that $|\psi_1’(z_0)|>|\psi’(z_0)|$.

This step shows that we can “enlarge” the range in some way.

For convenience we use the Möbius transformation

$$
\varphi_\alpha(z)=\frac{z-\alpha}{1-\overline{\alpha}z}
$$

from Definition 4.

Pick $\alpha \in U \setminus \psi(\Omega)$. Then $\varphi_\alpha \circ \psi \in \Sigma$ and $\varphi_\alpha \circ \psi$ has no zero in $\Omega$. Hence, by 9 of Proposition 1, there is some $g \in H(\Omega)$ such that

$$
g^2=\varphi_\alpha \circ \psi.
$$

Since $\varphi_\alpha \circ \psi$ is one-to-one, another application of Koebe’s square root trick shows that $g$ is one-to-one. Moreover $|g|^2=|\varphi_\alpha \circ \psi|<1$, so $g(\Omega) \subset U$. Therefore we have $g \in \Sigma$ as well. If $\psi_1=\varphi_\beta \circ g$ where $\beta=g(z_0)$, we have $\psi_1 \in \Sigma$ (it is one-to-one and maps $\Omega$ into $U$). In particular, $\psi_1(z_0)=0$.

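By putting $s(z)=z^2$, we have

$$
\psi=\varphi_{-\alpha} \circ s \circ g=\varphi_{-\alpha} \circ s \circ \varphi_{-\beta} \circ \psi_1.
$$

If we put $F(z)=\varphi_{-\alpha} \circ s \circ \varphi_{-\beta}(z)$, then the chain rule shows that

$$
\psi'(z_0)=F'(\psi_1(z_0))\psi_1'(z_0)=F'(0)\psi_1'(z_0).
$$

(Note we used the fact that $\psi_1(z_0)=0$.) If we can prove that $0<|F'(0)|<1$ then this step is complete. Note $F$ maps $U$ into $U$, so it satisfies the condition in the Schwarz-Pick lemma and therefore

$$
|F'(0)| \le 1-|F(0)|^2 \le 1.
$$

Equality does not hold in the first inequality because $F$ is not of the form $\varphi_{-\sigma}(\lambda\varphi_{\eta}(z))$ for $|\lambda|=1$: such a map is one-to-one on $U$, while $F$ is not, since $s$ is not. On the other hand we have

$$
F'(0)=\varphi_{-\alpha}'(\beta^2) \cdot 2\beta \cdot \varphi_{-\beta}'(0)=2\beta(1-|\beta|^2)\varphi_{-\alpha}'(\beta^2) \ne 0,
$$

because $\beta=g(z_0) \ne 0$ and the derivative of $\varphi_{-\alpha}$ never vanishes. Therefore $0<|F'(0)|<1$ and this step is complete.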
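
The enlargement can be watched numerically on a toy example. The following is a sketch under assumptions chosen for illustration only: $\Omega=U$, $z_0=0$, $\psi(z)=z/2$ (so $\psi(\Omega)=D(0,\frac{1}{2})$), and $\alpha=-\frac{3}{4}$, which lies in $U \setminus \psi(\Omega)$. With this choice $\varphi_\alpha(\psi(U))$ is a disc in the right half-plane, so the principal square root gives a holomorphic $g$ with $g^2=\varphi_\alpha \circ \psi$. Derivatives are approximated by central differences, and the helper names are hypothetical.

```python
import cmath
import math

def mobius(alpha, z):
    """phi_alpha(z) = (z - alpha) / (1 - conj(alpha) * z)."""
    return (z - alpha) / (1 - complex(alpha).conjugate() * z)

def psi(z):
    """Starting map in Sigma with psi(U) = D(0, 1/2), a proper subset of U."""
    return z / 2

alpha = -0.75  # a point of U that psi omits

def g(z):
    # phi_alpha(psi(U)) avoids the negative real axis, so the principal
    # branch of the square root is holomorphic here.
    return cmath.sqrt(mobius(alpha, psi(z)))

z0 = 0
beta = g(z0)

def psi1(z):
    """Enlarged map psi_1 = phi_beta o g, normalised so that psi1(z0) = 0."""
    return mobius(beta, g(z))

def derivative(f, z, h=1e-6):
    """Central difference approximation of f'(z) for holomorphic f."""
    return (f(z + h) - f(z - h)) / (2 * h)

d_psi = abs(derivative(psi, z0))
d_psi1 = abs(derivative(psi1, z0))

# psi_1 should still map U into U.
sample_points = [0.9 * cmath.exp(2j * math.pi * k / 8) for k in range(8)] + [0]
inside = all(abs(psi1(z)) < 1 for z in sample_points)

print(d_psi, d_psi1, inside)
```

In this example the gain is small (the derivative grows from $0.5$ to roughly $0.505$), which hints at why the proof takes a supremum in the next step instead of iterating this construction by hand.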

Step 3 - Find the Function with Largest range, Namely the Disc

We take the contrapositive of step 2:

Fix $z_0 \in \Omega$. If $h \in \Sigma$ is an element such that $|h’(z_0)| \ge |\psi’(z_0)|$ for all $\psi \in \Sigma$, then $h(\Omega)=U$.

The proof is complete once we have found such a function! To do this, we use the fact that $\Sigma$ is a normal family. Put

$$
\eta=\sup\{|\psi'(z_0)|:\psi \in \Sigma\}.
$$

By definition of $\eta$, there is a sequence $\{\psi_n\}$ in $\Sigma$ such that $|\psi_n'(z_0)| \to \eta$. By normality of $\Sigma$, we pick a subsequence of $\{\psi_n\}$, denoted by $\{\varphi_n\}$, that converges uniformly on compact subsets of $\Omega$. Let $h \in H(\Omega)$ be the limit. Since the derivatives $\varphi_n'$ converge to $h'$ almost uniformly as well, it follows that $|h'(z_0)|=\eta$. Since $\Sigma \ne \varnothing$ and members of $\Sigma$ are one-to-one, $\eta \ne 0$, so $h$ cannot be a constant. Since $\varphi_n(\Omega) \subset U$, we must have $h(\Omega) \subset \overline{U}$. But since $h$ is an open mapping, we are reduced to $h(\Omega) \subset U$.

It remains to show that $h$ is one-to-one. Fix distinct $z_1, z_2 \in \Omega$. Put $\alpha=h(z_1)$ and $\alpha_n=\varphi_n(z_1)$, then $\alpha_n \to \alpha$. Let $\overline{D}$ be a closed disc in $\Omega$ centred at $z_2$ with interior denoted by $D$ such that

- $z_1 \not\in \overline{D}$.

- $h-\alpha$ has no zero point on the boundary of $\overline{D}$.

We see $\varphi_n -\alpha_n$ converges to $h-\alpha$, uniformly on $\overline{D}$. The functions $\varphi_n-\alpha_n$ have no zero in $D$ since they are one-to-one and have a zero at $z_1 \not\in \overline{D}$. By Rouché’s theorem, $h-\alpha$ has no zero in $D$ either, and in particular $h(z_2)-\alpha = h(z_2)-h(z_1) \ne 0$. This completes the proof. $\square$

Remark. First of all, such a $\overline{D}$ exists. This is because the zeros of $h-\alpha$ have no limit point in $\Omega$, i.e., they are isolated, as $h$ is not constant (when defining $\overline{D}$, we don’t know how many there are yet).

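Our choice of $\overline{D}$ enables us to use Rouché’s theorem (chances are you didn’t get it). Since $h-\alpha$ has no zero on the boundary, we have $\zeta=\inf_{z \in \partial D}|h(z)-\alpha|>0$. When $n$ is big enough, we see

$$
|(\varphi_n(z)-\alpha_n)-(h(z)-\alpha)|<\zeta \le |h(z)-\alpha| \quad (z \in \partial D).
$$

The first inequality holds for large $n$ by uniform convergence on $\overline{D}$, and the second is the definition of $\zeta$. Rouché’s theorem then shows that $\varphi_n-\alpha_n$ and $h-\alpha$ have the same number of zeros in $D$, namely none. $\square$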

This proof is a reproduction of W. Rudin’s Real and Complex Analysis. For a comprehensive further reading, I highly recommend Tao’s blog post.