CS231n Lecture Note VI: CNN Architectures and Training

In this note, we will continue discussing CNNs.

Normalization Layers

Normalization layers standardize activations and then apply a learned scale $\gamma$ and shift $\beta$:

$$\hat{x} = \frac{x - \mu}{\sqrt{\sigma^2 + \epsilon}}, \qquad y = \gamma \hat{x} + \beta$$

The difference between methods is which dimensions are used to compute $\mu$ and $\sigma^2$ (for input shape $N \times C \times H \times W$):

- BatchNorm: per channel, over $(N, H, W)$; depends on batch statistics

- LayerNorm: per sample, over all features $(C, H, W)$; independent of batch

- InstanceNorm: per sample & channel, over $(H, W)$; removes instance-specific contrast

- GroupNorm: per sample, over channel groups + spatial dims; stable for small batches

Key idea: same formula, different normalization axes → different behavior and use cases.
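The point about normalization axes can be made concrete with a small NumPy sketch. It assumes an input of shape `(N, C, H, W)` and leaves out the learned scale and shift; the helper names `normalize` and `group_norm` are illustrative and do not come from any library.

```python
import numpy as np

def normalize(x, axes, eps=1e-5):
    """Standardize x over the given axes (learned scale/shift omitted)."""
    mu = x.mean(axis=axes, keepdims=True)
    var = x.var(axis=axes, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def group_norm(x, num_groups, eps=1e-5):
    """Split channels into groups, then normalize each group per sample."""
    n, c, h, w = x.shape
    grouped = x.reshape(n, num_groups, c // num_groups, h, w)
    return normalize(grouped, axes=(2, 3, 4), eps=eps).reshape(n, c, h, w)

rng = np.random.default_rng(0)
x = rng.normal(loc=3.0, scale=2.0, size=(8, 6, 4, 4))  # (N, C, H, W)

batch_norm = normalize(x, axes=(0, 2, 3))     # per channel, over N, H, W
layer_norm = normalize(x, axes=(1, 2, 3))     # per sample, over C, H, W
instance_norm = normalize(x, axes=(2, 3))     # per sample and channel, over H, W
grouped_norm = group_norm(x, num_groups=3)    # per sample, over 2 channels + H, W
```

In this sketch, GroupNorm with a single group reduces to LayerNorm, and GroupNorm with one group per channel reduces to InstanceNorm.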

Regularization: Dropout

In each forward pass, randomly set each activation to zero with probability $p$ (a hyperparameter, often $p = 0.5$). To keep the expected activation unchanged, the remaining activations are scaled by $1/(1-p)$ (inverted dropout):

$$\tilde{a} = \frac{m \odot a}{1 - p}, \qquad m_i \sim \text{Bernoulli}(1 - p)$$

- This forces the network to learn a redundant representation, preventing co-adaptation of neurons

- Acts like implicit ensemble averaging

- Applied only during training; disabled at inference (no randomness)

With inverted dropout, no test-time scaling is needed because the activations were already rescaled during training.

More generally, regularization follows a common pattern:

- Training: add some kind of randomness

- Testing: average out the randomness (sometimes approximately)
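A minimal NumPy sketch of inverted dropout, assuming `p` denotes the probability of dropping a unit. The function name `dropout_forward` is illustrative only.

```python
import numpy as np

def dropout_forward(x, p=0.5, train=True, rng=None):
    """Inverted dropout: drop each unit with probability p during training."""
    if not train or p == 0.0:
        return x  # inference: no randomness and no rescaling
    if rng is None:
        rng = np.random.default_rng()
    mask = rng.random(x.shape) >= p      # keep each unit with probability 1 - p
    return x * mask / (1.0 - p)          # rescale so the expectation is unchanged

rng = np.random.default_rng(0)
x = np.ones((1000, 100))
train_out = dropout_forward(x, p=0.5, train=True, rng=rng)
test_out = dropout_forward(x, p=0.5, train=False)
```

With `p = 0.5`, surviving activations are doubled, so the mean of `train_out` stays close to the mean of `x`, while `test_out` is returned unchanged.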

Activation Functions

Goal: Introduce non-linearity to the model.

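- Sigmoid: $\sigma(x) = \frac{1}{1 + e^{-x}}$

  - Problem: the gradient becomes small after many layers (vanishing gradients).

- ReLU (Rectified Linear Unit): $f(x) = \max(0, x)$

  - Does not saturate in the positive region

  - Converges faster than Sigmoid

  - Problem: not zero-centered; units are dead (zero gradient) when $x < 0$.

- GELU (Gaussian Error Linear Unit): $f(x) = x \, \Phi(x)$, where $\Phi$ is the standard normal CDF

  - Smoothness facilitates training

  - Problem: higher computational cost; large negative values get small gradients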
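The three activations can be written with the standard library alone. This is a scalar sketch for illustration; it uses the exact GELU via `math.erf`, whereas real frameworks operate on tensors and may use an approximation.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def sigmoid_grad(x):
    s = sigmoid(x)
    return s * (1.0 - s)  # at most 0.25, reached at x = 0

def relu(x):
    return max(0.0, x)

def gelu(x):
    # x * Phi(x), with Phi the standard normal CDF expressed through erf
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

# Upper bound on the gradient factor contributed by 10 stacked sigmoids
max_grad_through_10_sigmoids = sigmoid_grad(0.0) ** 10
```

Because each sigmoid contributes a factor of at most 0.25, the bound above is already below $10^{-6}$ after ten layers, which is one way to see the vanishing-gradient problem.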

Case Study

AlexNet, VGG

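AlexNet was among the first deep CNNs to win large-scale image classification benchmarks.

VGG followed with small ($3 \times 3$) filters and a deeper network.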

ResNet

Increasing the depth of a CNN does not always improve performance; in fact, very deep plain networks can exhibit the degradation problem, where training error increases as layers are added.

Although a deeper model should theoretically perform at least as well as a shallower one (since additional layers could learn identity mappings), in practice, standard layers struggle to approximate identity functions, making optimization difficult.

ResNet addresses this by reformulating the learning objective: instead of directly learning a mapping $H(x)$, each block learns a residual function $F(x) = H(x) - x$, so the output becomes $F(x) + x$.

The shortcut (skip) connection that adds the input back to the learned residual improves gradient flow and makes it significantly easier to train very deep networks, especially when the desired transformation is close to identity.
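The identity argument can be illustrated with a simplified block. This sketch assumes a two-layer fully connected residual branch and omits the convolutions, normalization, and final ReLU of an actual ResNet block; `plain_block` and `residual_block` are hypothetical names.

```python
import numpy as np

def relu(z):
    return np.maximum(0, z)

def plain_block(x, W1, W2):
    """Two stacked layers that must learn the full mapping H(x)."""
    return relu(x @ W1) @ W2

def residual_block(x, W1, W2):
    """The same layers learn the residual F(x); the shortcut adds x back."""
    return plain_block(x, W1, W2) + x

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 16))
W1 = np.zeros((16, 16))  # weights at (or near) zero
W2 = np.zeros((16, 16))

plain_out = plain_block(x, W1, W2)        # all zeros: the input is lost
residual_out = residual_block(x, W1, W2)  # equals x: identity comes for free
```

Driving the residual branch to zero is enough to represent the identity, whereas the plain block would need specific non-trivial weights to do the same.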

Weight Initialization

If the initial weights are too small, activations shrink toward zero in deeper layers.

If they are too large, activations blow up quickly.

The fix: Kaiming Initialization

ReLU correction: $\text{std} = \sqrt{2 / D_{in}}$, where $D_{in}$ is the input dimension of the layer.

```python
dims = [4096] * 7  # seven layer widths, each 4096
```

With this scaling, the std of the activations stays almost constant across layers, so activations are nicely scaled at every depth.
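The effect of the weight scale can be checked with a small experiment. This is a sketch under assumptions: it uses a width of 512 rather than 4096 to keep it fast, fully connected ReLU layers, and two arbitrary fixed scales (0.01 and 0.5) for comparison. The helper `activation_stds` is illustrative.

```python
import numpy as np

def activation_stds(dims, scale_fn, batch=16, seed=0):
    """Forward random data through ReLU layers; record each layer's activation std."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((batch, dims[0]))
    stds = []
    for d_in, d_out in zip(dims[:-1], dims[1:]):
        W = rng.standard_normal((d_in, d_out)) * scale_fn(d_in)
        x = np.maximum(0, x @ W)  # linear layer followed by ReLU
        stds.append(float(x.std()))
    return stds

dims = [512] * 7  # assumption: narrower than 4096 so the sketch runs quickly

kaiming = activation_stds(dims, lambda d_in: np.sqrt(2.0 / d_in))
too_small = activation_stds(dims, lambda d_in: 0.01)
too_large = activation_stds(dims, lambda d_in: 0.5)
```

Under these assumptions, `too_small` decays toward zero and `too_large` grows by orders of magnitude across the six layers, while `kaiming` stays at roughly the same scale throughout.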

Data Preprocessing

Image normalization: center and scale each channel

- Subtract the per-channel mean and divide by the per-channel std (used by almost all modern models); for RGB images this means 3 means and 3 stds

- Requires pre-computing the per-channel mean and std over your dataset
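A minimal sketch of per-channel normalization, assuming images are stored as an `(N, H, W, C)` array. The random `train_images` array is a stand-in for a real dataset.

```python
import numpy as np

rng = np.random.default_rng(0)
# Hypothetical dataset: 100 RGB images of size 32x32 with values in [0, 255)
train_images = rng.uniform(0, 255, size=(100, 32, 32, 3))

# Pre-compute one mean and one std per channel (3 numbers each)
channel_mean = train_images.mean(axis=(0, 1, 2))
channel_std = train_images.std(axis=(0, 1, 2))

def normalize_images(images):
    """Center and scale each channel with the pre-computed statistics."""
    return (images - channel_mean) / channel_std

normalized = normalize_images(train_images)
```

The same `channel_mean` and `channel_std` would then be reused unchanged for validation and test images.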

Data Augmentation

We can apply flips, random crops and scales, color jitter, and random cutouts to augment our training data.
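Two of these augmentations, a random horizontal flip and a random crop, can be sketched in NumPy. The function name, the 50% flip probability, and the crop size are assumptions chosen for illustration.

```python
import numpy as np

def random_flip_and_crop(image, crop_size, rng):
    """Flip an (H, W, C) image horizontally with probability 0.5, then crop at random."""
    h, w, _ = image.shape
    if rng.random() < 0.5:
        image = image[:, ::-1, :]  # reverse the width axis
    top = rng.integers(0, h - crop_size + 1)
    left = rng.integers(0, w - crop_size + 1)
    return image[top:top + crop_size, left:left + crop_size, :]

rng = np.random.default_rng(0)
image = rng.uniform(size=(32, 32, 3))
crop = random_flip_and_crop(image, crop_size=28, rng=rng)
```

Calling the function again with the same image yields a different view, which is what lets a fixed dataset act like a larger one during training.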

Transfer Learning

How do we train without much data?

We can start with a large dataset like ImageNet and train a deep CNN on it, learning general features.

With a small dataset, we can use the pretrained model and freeze most layers. We replace and reinitialize the final layer to match our new classes. We train only this last layer (a linear classifier).

With a bigger dataset, we can start from the pretrained model and initialize all layers with the pretrained weights. We then fine-tune more (or all) layers.

Deep learning frameworks provide pretrained models that we can transfer from:

- PyTorch: https://github.com/pytorch/vision

- Huggingface: https://github.com/huggingface/pytorch-image-models

Hyperparameters

A structured approach to stabilizing and optimizing deep learning training:

Step 1: Check Initial Loss

Verify the loss starts at a reasonable value (e.g., $\log(\text{num\_classes})$ for classification with a softmax cross-entropy loss). Large deviations typically indicate implementation issues such as incorrect labels or loss computation.
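The expected starting value follows from a model that outputs equal scores for every class. A small sketch, assuming a softmax cross-entropy loss and 10 classes (both the helper name and the class count are illustrative):

```python
import math

def softmax_cross_entropy(logits, label):
    """Cross-entropy of softmax(logits) against an integer class label."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]

num_classes = 10
uniform_logits = [0.0] * num_classes  # an untrained model with no class preference
initial_loss = softmax_cross_entropy(uniform_logits, label=3)
expected_loss = math.log(num_classes)
```

Here `initial_loss` matches `expected_loss` regardless of which label is chosen; a freshly initialized network should start close to this value.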

Step 2: Overfit a Small Sample

Train on a tiny subset (10–100 samples) and confirm the model can achieve near-zero loss. Failure here suggests problems with model capacity, optimization, or data preprocessing.

Step 3: Find a Working Learning Rate

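Using the full dataset and a small weight decay (e.g., $10^{-4}$), test learning rates spanning several orders of magnitude, such as $10^{-1}$, $10^{-2}$, $10^{-3}$, $10^{-4}$.

Run ~100 iterations with each and select the rate that yields a clear, stable loss decrease.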

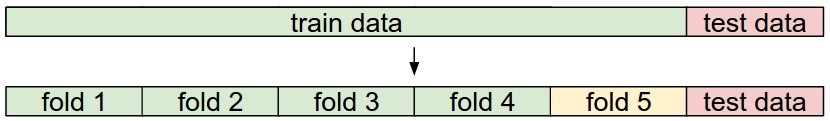
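Step 3 can be sketched on a toy problem. The example below is only an illustration under assumptions: it uses linear regression with plain gradient descent instead of a CNN, and the candidate learning rates and the weight decay value are chosen for the sketch.

```python
import numpy as np

def loss_after_training(lr, steps=100, weight_decay=1e-4, seed=0):
    """Run gradient descent on a toy regression task and return the final MSE."""
    rng = np.random.default_rng(seed)
    X = rng.standard_normal((256, 10))
    y = X @ rng.standard_normal(10)
    w = np.zeros(10)
    for _ in range(steps):
        grad = 2 * X.T @ (X @ w - y) / len(X) + weight_decay * w
        w -= lr * grad
    return float(np.mean((X @ w - y) ** 2))

# Assumed candidates; 1.0 is included to show an unstable setting
candidate_lrs = [1.0, 1e-1, 1e-2, 1e-3, 1e-4]
results = {lr: loss_after_training(lr) for lr in candidate_lrs}
best_lr = min(results, key=results.get)
```

On this toy task the largest rate diverges and the smallest barely moves the loss, so the choice falls on the rate in between that decreases the loss fastest while remaining stable.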

Step 4: Coarse Hyperparameter Search

Explore a broad range of values (learning rate, weight decay, batch size, model size) using grid or random search. Train each configuration for ~1–5 epochs to identify promising regions.
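For the random-search variant, learning rate and weight decay are usually sampled on a log scale so that every order of magnitude is covered evenly. A minimal sketch; the ranges and batch sizes below are assumptions for illustration, not recommendations from the lecture.

```python
import random

def sample_config(rng):
    """Draw one configuration; lr and weight decay are sampled log-uniformly."""
    return {
        "lr": 10 ** rng.uniform(-5, -1),            # assumed range 1e-5 .. 1e-1
        "weight_decay": 10 ** rng.uniform(-6, -3),  # assumed range 1e-6 .. 1e-3
        "batch_size": rng.choice([32, 64, 128, 256]),
    }

rng = random.Random(0)
configs = [sample_config(rng) for _ in range(20)]
```

Each entry in `configs` would then be trained for a few epochs, and the best-performing region narrowed in the next step.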

Step 5: Refine the Search

Narrow the search around strong candidates and train longer. Optionally introduce schedules (e.g., cosine decay) or warmup.
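A cosine decay schedule with linear warmup can be written as a small function of the step index. This is one common formulation and is given as a sketch; the name `lr_at_step` and the specific step counts are assumptions.

```python
import math

def lr_at_step(step, total_steps, base_lr, warmup_steps=0):
    """Linear warmup to base_lr, then cosine decay toward zero."""
    if step < warmup_steps:
        return base_lr * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * base_lr * (1.0 + math.cos(math.pi * progress))

schedule = [
    lr_at_step(s, total_steps=100, base_lr=0.1, warmup_steps=10)
    for s in range(100)
]
```

The rate rises linearly over the first 10 steps, reaches `base_lr`, and then follows half a cosine wave down to near zero by the final step.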

Step 6: Analyze Curves

Inspect training vs. validation loss and accuracy:

- Overfitting → increase regularization

- Underfitting → increase capacity or training time

- Instability → adjust learning rate or optimizer

Summary Insight

Most failures are due to basic setup issues (bad LR, incorrect loss, broken pipeline), not fine-grained hyperparameter choices. Systematic debugging is more effective than premature tuning.